UX And Product Designer’s Career Paths In 2026

How to shape your career path for 2026, with decision trees for designers and a UX skills self-assessment matrix. The only limits for tomorrow are the doubts we have today. Brought to you by Smart Int

Ux

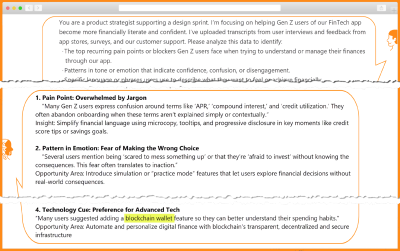

From Prompt To Partner: Designing Your Custom AI Assistant

What if your best AI prompts didn’t disappear into your unorganized chat history, but came back tomorrow as a reliable assistant? In this article, you’ll learn how to turn one-off “aha” prompt

Ux

Generating Unique Random Numbers In JavaScript Using Sets

Want to create more randomized effects in your JavaScript code? The Math.random() method alone, with its limitations, won’t cut it for generating unique random numbers. Amejimaobari Ollornwi explain

Javascript

Intent Prototyping: The Allure And Danger Of Pure Vibe Coding In Enterprise UX (Part 1)

Intent Prototyping: The Allure And Danger Of Pure Vibe Coding In Enterprise UX (Part 1) Intent Prototyping: The Allure And Danger Of Pure Vibe Coding In Enterprise UX (Part 1) Yegor Gilyov 2025-09-24T17:00:00+00:00 2025-10-01T15:02:43+00:00 There is a spectrum of opinions on how dramatically all creative […]

Accessibility

Intent Prototyping: The Allure And Danger Of Pure Vibe Coding In Enterprise UX (Part 1)

Yegor Gilyov 2025-09-24T17:00:00+00:00

2025-10-01T15:02:43+00:00

There is a spectrum of opinions on how dramatically all creative professions will be changed by the coming wave of agentic AI, from the very skeptical to the wildly optimistic and even apocalyptic. I think that even if you are on the “skeptical” end of the spectrum, it makes sense to explore ways this new technology can help with your everyday work. As for my everyday work, I’ve been doing UX and product design for about 25 years now, and I’m always keen to learn new tricks and share them with colleagues. Right now, I’m interested in AI-assisted prototyping, and I’m here to share my thoughts on how it can change the process of designing digital products.

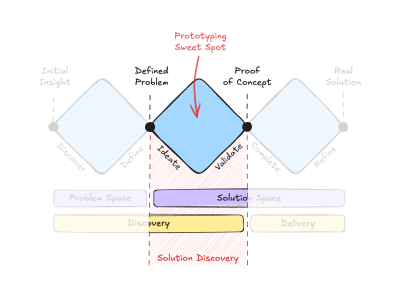

To set your expectations up front: this exploration focuses on a specific part of the product design lifecycle. Many people know about the Double Diamond framework, which shows the path from problem to solution. However, I think it’s the Triple Diamond model that makes an important point for our needs. It explicitly separates the solution space into two phases: Solution Discovery (ideating and validating the right concept) and Solution Delivery (engineering the validated concept into a final product). This article is focused squarely on that middle diamond: Solution Discovery.

How AI can help with the preceding (Problem Discovery) and the following (Solution Delivery) stages is out of the scope of this article. Problem Discovery is less about prototyping and more about research, and while I believe AI can revolutionize the research process as well, I’ll leave that to people more knowledgeable in the field. As for Solution Delivery, it is more about engineering optimization. There’s no doubt that software engineering in the AI era is undergoing dramatic changes, but I’m not an engineer — I’m a designer, so let me focus on my “sweet spot”.

And my “sweet spot” has a specific flavor: designing enterprise applications. In this world, the main challenge is taming complexity: dealing with complicated data models and guiding users through non-linear workflows. This background has had a big impact on my approach to design, putting a lot of emphasis on the underlying logic and structure. This article explores the potential of AI through this lens.

I’ll start by outlining the typical artifacts designers create during Solution Discovery. Then, I’ll examine the problems with how this part of the process often plays out in practice. Finally, we’ll explore whether AI-powered prototyping can offer a better approach, and if so, whether it aligns with what people call “vibe coding,” or calls for a more deliberate and disciplined way of working.

What We Create During Solution Discovery

The Solution Discovery phase begins with the key output from the preceding research: a well-defined problem and a core hypothesis for a solution. This is our starting point. The artifacts we create from here are all aimed at turning that initial hypothesis into a tangible, testable concept.

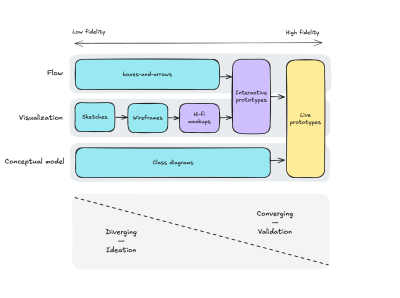

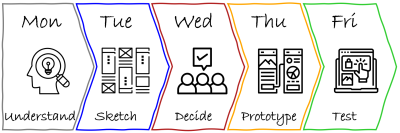

Traditionally, at this stage, designers can produce artifacts of different kinds, progressively increasing fidelity: from napkin sketches, boxes-and-arrows, and conceptual diagrams to hi-fi mockups, then to interactive prototypes, and in some cases even live prototypes. Artifacts of lower fidelity allow fast iteration and enable the exploration of many alternatives, while artifacts of higher fidelity help to understand, explain, and validate the concept in all its details.

It’s important to think holistically, considering different aspects of the solution. I would highlight three dimensions:

- Conceptual model: Objects, relations, attributes, actions;

- Visualization: Screens, from rough sketches to hi-fi mockups;

- Flow: From the very high-level user journeys to more detailed ones.

One can argue that those are layers rather than dimensions, and each of them builds on the previous ones (for example, according to Semantic IxD by Daniel Rosenberg), but I see them more as different facets of the same thing, so the design process through them is not necessarily linear: you may need to switch from one perspective to another many times.

This is how different types of design artifacts map to these dimensions:

As Solution Discovery progresses, designers move from the left part of this map to the right, from low-fidelity to high-fidelity, from ideating to validating, from diverging to converging.

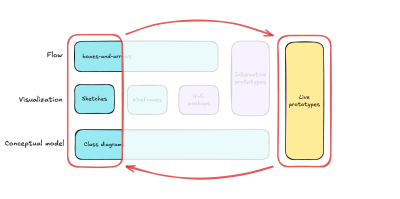

Note that at the beginning of the process, different dimensions are supported by artifacts of different types (boxes-and-arrows, sketches, class diagrams, etc.), and only closer to the end can you build a live prototype that encompasses all three dimensions: conceptual model, visualization, and flow.

This progression shows a classic trade-off, like the difference between a pencil drawing and an oil painting. The drawing lets you explore ideas in the most flexible way, whereas the painting has a lot of detail and overall looks much more realistic, but is hard to adjust. Similarly, as we go towards artifacts that integrate all three dimensions at higher fidelity, our ability to iterate quickly and explore divergent ideas goes down. This inverse relationship has long been an accepted, almost unchallenged, limitation of the design process.

The Problem With The Mockup-Centric Approach

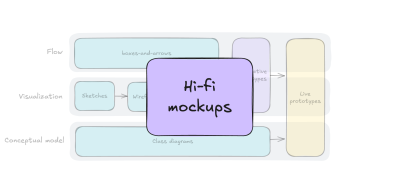

Faced with this difficult trade-off, often teams opt for the easiest way out. On the one hand, they need to show that they are making progress and create things that appear detailed. On the other hand, they rarely can afford to build interactive or live prototypes. This leads them to over-invest in one type of artifact that seems to offer the best of both worlds. As a result, the neatly organized “bento box” of design artifacts we saw previously gets shrunk down to just one compartment: creating static high-fidelity mockups.

This choice is understandable, as several forces push designers in this direction. Stakeholders are always eager to see nice pictures, while artifacts representing user flows and conceptual models receive much less attention and priority. They are too high-level and hardly usable for validation, and usually, not everyone can understand them.

On the other side of the fidelity spectrum, interactive prototypes require too much effort to create and maintain, and creating live prototypes in code used to require special skills (and again, effort). And even when teams make this investment, they do so at the end of Solution Discovery, during the convergence stage, when it is often too late to experiment with fundamentally different ideas. With so much effort already sunk, there is little appetite to go back to the drawing board.

It’s no surprise, then, that many teams default to the perceived safety of static mockups, seeing them as a middle ground between the roughness of the sketches and the overwhelming complexity and fragility that prototypes can have.

As a result, validation with users doesn’t provide enough confidence that the solution will actually solve the problem, and teams are forced to make a leap of faith to start building. To make matters worse, they do so without a clear understanding of the conceptual model, the user flows, and the interactions, because from the very beginning, designers’ attention has been heavily skewed toward visualization.

The result is often a design artifact that resembles the famous “horse drawing” meme: beautifully rendered in the parts everyone sees first (the mockups), but dangerously underdeveloped in its underlying structure (the conceptual model and flows).

While this is a familiar problem across the industry, its severity depends on the nature of the project. If your core challenge is to optimize a well-understood, linear flow (like many B2C products), a mockup-centric approach can be perfectly adequate. The risks are contained, and the “lopsided horse” problem is unlikely to be fatal.

However, it’s different for the systems I specialize in: complex applications defined by intricate data models and non-linear, interconnected user flows. Here, the biggest risks are not on the surface but in the underlying structure, and a lack of attention to the latter would be a recipe for disaster.

Transforming The Design Process

This situation makes me wonder:

How might we close the gap between our design intent and a live prototype, so that we can iterate on real functionality from day one?

“

If we were able to answer this question, we would:

- Learn faster.

By going straight from intent to a testable artifact, we cut the feedback loop from weeks to days. - Gain more confidence.

Users interact with real logic, which gives us more proof that the idea works. - Enforce conceptual clarity.

A live prototype cannot hide a flawed or ambiguous conceptual model. - Establish a clear and lasting source of truth.

A live prototype, combined with a clearly documented design intent, provides the engineering team with an unambiguous specification.

Of course, the desire for such a process is not new. This vision of a truly prototype-driven workflow is especially compelling for enterprise applications, where the benefits of faster learning and forced conceptual clarity are the best defense against costly structural flaws. But this ideal was still out of reach because prototyping in code took so much work and specialized talents. Now, the rise of powerful AI coding assistants changes this equation in a big way.

The Seductive Promise Of “Vibe Coding”

And the answer seems to be obvious: vibe coding!

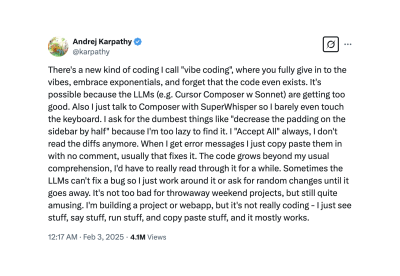

“Vibe coding is an artificial intelligence-assisted software development style popularized by Andrej Karpathy in early 2025. It describes a fast, improvisational, collaborative approach to creating software where the developer and a large language model (LLM) tuned for coding is acting rather like pair programmers in a conversational loop.”

The original tweet by Andrej Karpathy:

The allure of this approach is undeniable. If you are not a developer, you are bound to feel awe when you describe a solution in plain language, and moments later, you can interact with it. This seems to be the ultimate fulfillment of our goal: a direct, frictionless path from an idea to a live prototype. But is this method reliable enough to build our new design process around it?

The Trap: A Process Without A Blueprint

Vibe coding mixes up a description of the UI with a description of the system itself, resulting in a prototype based on changing assumptions rather than a clear, solid model.

The pitfall of vibe coding is that it encourages us to express our intent in the most ambiguous way possible: by having a conversation.

“

This is like hiring a builder and telling them what to do one sentence at a time without ever presenting them a blueprint. They could make a wall that looks great, but you can’t be sure that it can hold weight.

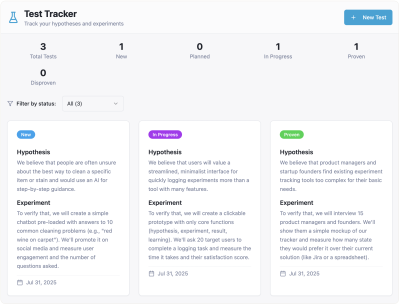

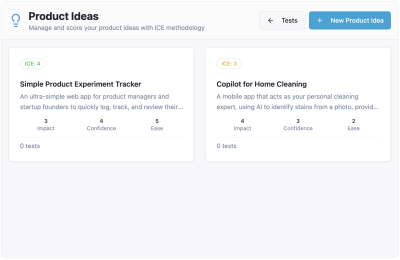

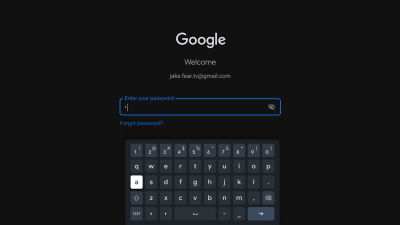

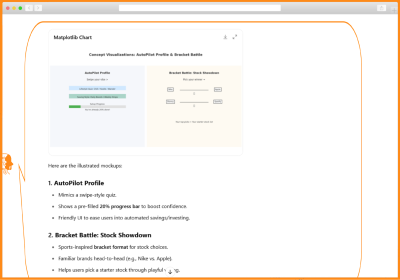

I’ll give you one example illustrating problems you may face if you try to jump over the chasm between your idea and a live prototype relying on pure vibe coding in the spirit of Andrej Karpathy’s tweet. Imagine I want to prototype a solution to keep track of tests to validate product ideas. I open my vibe coding tool of choice (I intentionally don’t disclose its name, as I believe they all are awesome yet prone to similar pitfalls) and start with the following prompt:

I need an app to track tests. For every test, I need to fill out the following data:

- Hypothesis (we believe that...)

- Experiment (to verify that, we will...)

- When (a single date, or a period)

- Status (New/Planned/In Progress/Proven/Disproven)

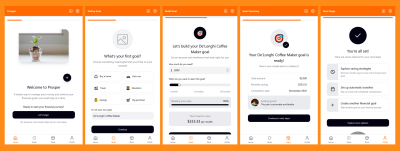

And in a minute or so, I get a working prototype:

Inspired by success, I go further:

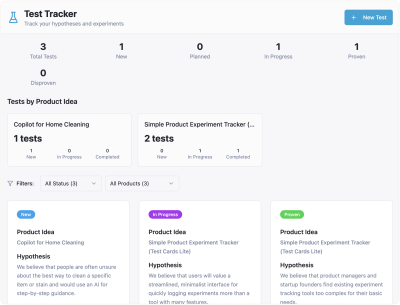

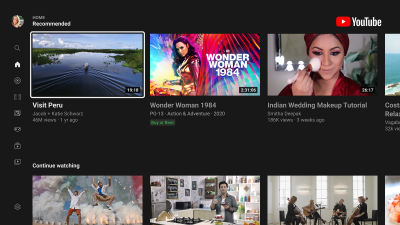

Please add the ability to specify a product idea for every test. Also, I want to filter tests by product ideas and see how many tests each product idea has in each status.

And the result is still pretty good:

But then I want to extend the functionality related to product ideas:

Okay, one more thing. For every product idea, I want to assess the impact score, the confidence score, and the ease score, and get the overall ICE score. Perhaps I need a separate page focused on the product idea, with all the relevant information and related tests.

And from this point on, the results are getting more and more confusing.

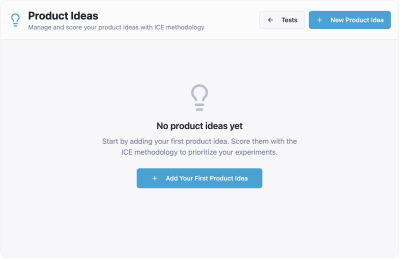

The flow of creating tests hasn’t changed much. I can still create a bunch of tests, and they seem to be organized by product ideas. But when I click “Product Ideas” in the top navigation, I see nothing:

I need to create my ideas from scratch, and they are not connected to the tests I created before:

Moreover, when I go back to “Tests”, I see that they are all gone. Clearly something went wrong, and my AI assistant confirms that:

No, this is not expected behavior — it’s a bug! The issue is that tests are being stored in two separate places (local state in the Index page and App state), so tests created on the main page don’t sync with the product ideas page.

Sure, eventually it fixed that bug, but note that we encountered this just on the third step, when we asked to slightly extend the functionality of a very simple app. The more layers of complexity we add, the more roadblocks of this sort we are bound to face.

Also note that this specific problem of a not fully thought-out relationship between two entities (product ideas and tests) is not isolated at the technical level, and therefore, it didn’t go away once the technical bug was fixed. The underlying conceptual model is still broken, and it manifests in the UI as well.

For example, you can still create “orphan” tests that are not connected to any item from the “Product Ideas” page. As a result, you may end up with different numbers of ideas and tests on different pages of the app:

Let’s diagnose what really happened here. The AI’s response that this is a “bug” is only half the story. The true root cause is a conceptual model failure. My prompts never explicitly defined the relationship between product ideas and tests. The AI was forced to guess, which led to the broken experience. For a simple demo, this might be a fixable annoyance. But for a data-heavy enterprise application, this kind of structural ambiguity is fatal. It demonstrates the fundamental weakness of building without a blueprint, which is precisely what vibe coding encourages.

Don’t take this as a criticism of vibe coding tools. They are creating real magic. However, the fundamental truth about “garbage in, garbage out” is still valid. If you don’t express your intent clearly enough, chances are the result won’t fulfill your expectations.

Another problem worth mentioning is that even if you wrestle it into a state that works, the artifact is a black box that can hardly serve as reliable specifications for the final product. The initial meaning is lost in the conversation, and all that’s left is the end result. This makes the development team “code archaeologists,” who have to figure out what the designer was thinking by reverse-engineering the AI’s code, which is frequently very complicated. Any speed gained at the start is lost right away because of this friction and uncertainty.

From Fast Magic To A Solid Foundation

Pure vibe coding, for all its allure, encourages building without a blueprint. As we’ve seen, this results in structural ambiguity, which is not acceptable when designing complex applications. We are left with a seemingly quick but fragile process that creates a black box that is difficult to iterate on and even more so to hand off.

This leads us back to our main question: how might we close the gap between our design intent and a live prototype, so that we can iterate on real functionality from day one, without getting caught in the ambiguity trap? The answer lies in a more methodical, disciplined, and therefore trustworthy process.

In Part 2 of this series, “A Practical Guide to Building with Clarity”, I will outline the entire workflow for Intent Prototyping. This method places the explicit intent of the designer at the forefront of the process while embracing the potential of AI-assisted coding.

Thank you for reading, and I look forward to seeing you in Part 2.

(yk)

The Psychology Of Trust In AI: A Guide To Measuring And Designing For User Confidence

The Psychology Of Trust In AI: A Guide To Measuring And Designing For User Confidence The Psychology Of Trust In AI: A Guide To Measuring And Designing For User Confidence Victor Yocco 2025-09-19T10:00:00+00:00 2025-09-24T15:02:52+00:00 Misuse and misplaced trust of AI is becoming an unfortunate common […]

Accessibility

The Psychology Of Trust In AI: A Guide To Measuring And Designing For User Confidence

Victor Yocco 2025-09-19T10:00:00+00:00

2025-09-24T15:02:52+00:00

Misuse and misplaced trust of AI is becoming an unfortunate common event. For example, lawyers trying to leverage the power of generative AI for research submit court filings citing multiple compelling legal precedents. The problem? The AI had confidently, eloquently, and completely fabricated the cases cited. The resulting sanctions and public embarrassment can become a viral cautionary tale, shared across social media as a stark example of AI’s fallibility.

This goes beyond a technical glitch; it’s a catastrophic failure of trust in AI tools in an industry where accuracy and trust are critical. The trust issue here is twofold — the law firms are submitting briefs in which they have blindly over-trusted the AI tool to return accurate information. The subsequent fallout can lead to a strong distrust in AI tools, to the point where platforms featuring AI might not be considered for use until trust is reestablished.

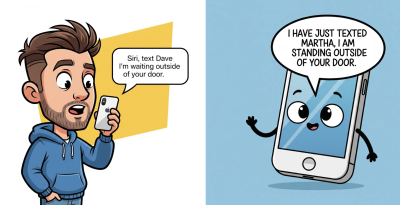

Issues with trusting AI aren’t limited to the legal field. We are seeing the impact of fictional AI-generated information in critical fields such as healthcare and education. On a more personal scale, many of us have had the experience of asking Siri or Alexa to perform a task, only to have it done incorrectly or not at all, for no apparent reason. I’m guilty of sending more than one out-of-context hands-free text to an unsuspecting contact after Siri mistakenly pulls up a completely different name than the one I’d requested.

With digital products moving to incorporate generative and agentic AI at an increasingly frequent rate, trust has become the invisible user interface. When it works, our interactions are seamless and powerful. When it breaks, the entire experience collapses, with potentially devastating consequences. As UX professionals, we’re on the front lines of a new twist on a common challenge. How do we build products that users can rely on? And how do we even begin to measure something as ephemeral as trust in AI?

Trust isn’t a mystical quality. It is a psychological construct built on predictable factors. I won’t dive deep into academic literature on trust in this article. However, it is important to understand that trust is a concept that can be understood, measured, and designed for. This article will provide a practical guide for UX researchers and designers. We will briefly explore the psychological anatomy of trust, offer concrete methods for measuring it, and provide actionable strategies for designing more trustworthy and ethical AI systems.

The Anatomy of Trust: A Psychological Framework for AI

To build trust, we must first understand its components. Think of trust like a four-legged stool. If any one leg is weak, the whole thing becomes unstable. Based on classic psychological models, we can adapt these “legs” for the AI context.

1. Ability (or Competence)

This is the most straightforward pillar: Does the AI have the skills to perform its function accurately and effectively? If a weather app is consistently wrong, you stop trusting it. If an AI legal assistant creates fictitious cases, it has failed the basic test of ability. This is the functional, foundational layer of trust.

2. Benevolence

This moves from function to intent. Does the user believe the AI is acting in their best interest? A GPS that suggests a toll-free route even if it’s a few minutes longer might be perceived as benevolent. Conversely, an AI that aggressively pushes sponsored products feels self-serving, eroding this sense of benevolence. This is where user fears, such as concerns about job displacement, directly challenge trust—the user starts to believe the AI is not on their side.

3. Integrity

Does AI operate on predictable and ethical principles? This is about transparency, fairness, and honesty. An AI that clearly states how it uses personal data demonstrates integrity. A system that quietly changes its terms of service or uses dark patterns to get users to agree to something violates integrity. An AI job recruiting tool that has subtle yet extremely harmful social biases, existing in the algorithm, violates integrity.

4. Predictability & Reliability

Can the user form a stable and accurate mental model of how the AI will behave? Unpredictability, even if the outcomes are occasionally good, creates anxiety. A user needs to know, roughly, what to expect. An AI that gives a radically different answer to the same question asked twice is unpredictable and, therefore, hard to trust.

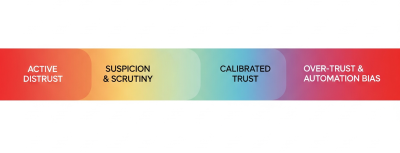

The Trust Spectrum: The Goal of a Well-Calibrated Relationship

Our goal as UX professionals shouldn’t be to maximize trust at all costs. An employee who blindly trusts every email they receive is a security risk. Likewise, a user who blindly trusts every AI output can be led into dangerous situations, such as the legal briefs referenced at the beginning of this article. The goal is well-calibrated trust.

Think of it as a spectrum where the upper-mid level is the ideal state for a truly trustworthy product to achieve:

- Active Distrust

The user believes the AI is incompetent or malicious. They will avoid it or actively work against it. - Suspicion & Scrutiny

The user interacts cautiously, constantly verifying the AI’s outputs. This is a common and often healthy state for users of new AI. - Calibrated Trust (The Ideal State)

This is the sweet spot. The user has an accurate understanding of the AI’s capabilities—its strengths and, crucially, its weaknesses. They know when to rely on it and when to be skeptical. - Over-trust & Automation Bias

The user unquestioningly accepts the AI’s outputs. This is where users follow flawed AI navigation into a field or accept a fictional legal brief as fact.

Our job is to design experiences that guide users away from the dangerous poles of Active Distrust and Over-trust and toward that healthy, realistic middle ground of Calibrated Trust.

“

The Researcher’s Toolkit: How to Measure Trust In AI

Trust feels abstract, but it leaves measurable fingerprints. Academics in the social sciences have done much to define both what trust looks like and how it might be measured. As researchers, we can capture these signals through a mix of qualitative, quantitative, and behavioral methods.

Qualitative Probes: Listening For The Language Of Trust

During interviews and usability tests, go beyond “Was that easy to use?” and listen for the underlying psychology. Here are some questions you can start using tomorrow:

- To measure Ability:

“Tell me about a time this tool’s performance surprised you, either positively or negatively.” - To measure Benevolence:

“Do you feel this system is on your side? What gives you that impression?” - To measure Integrity:

“If this AI made a mistake, how would you expect it to handle it? What would be a fair response?” - To measure Predictability:

“Before you clicked that button, what did you expect the AI to do? How closely did it match your expectation?”

Investigating Existential Fears (The Job Displacement Scenario)

One of the most potent challenges to an AI’s Benevolence is the fear of job displacement. When a participant expresses this, it is a critical research finding. It requires a specific, ethical probing technique.

Imagine a participant says, “Wow, it does that part of my job pretty well. I guess I should be worried.”

An untrained researcher might get defensive or dismiss the comment. An ethical, trained researcher validates and explores:

“Thank you for sharing that; it’s a vital perspective, and it’s exactly the kind of feedback we need to hear. Can you tell me more about what aspects of this tool make you feel that way? In an ideal world, how would a tool like this work with you to make your job better, not to replace it?”

This approach respects the participant, validates their concern, and reframes the feedback into an actionable insight about designing a collaborative, augmenting tool rather than a replacement. Similarly, your findings should reflect the concern users expressed about replacement. We shouldn’t pretend this fear doesn’t exist, nor should we pretend that every AI feature is being implemented with pure intention. Users know better than that, and we should be prepared to argue on their behalf for how the technology might best co-exist within their roles.

Quantitative Measures: Putting A Number On Confidence

You can quantify trust without needing a data science degree. After a user completes a task with an AI, supplement your standard usability questions with a few simple Likert-scale items:

- “The AI’s suggestion was reliable.” (1-7, Strongly Disagree to Strongly Agree)

- “I am confident in the AI’s output.” (1-7)

- “I understood why the AI made that recommendation.” (1-7)

- “The AI responded in a way that I expected.” (1-7)

- “The AI provided consistent responses over time.” (1-7)

Over time, these metrics can track how trust is changing as your product evolves.

Note: If you want to go beyond these simple questions that I’ve made up, there are numerous scales (measurements) of trust in technology that exist in academic literature. It might be an interesting endeavor to measure some relevant psychographic and demographic characteristics of your users and see how that correlates with trust in AI/your product. Table 1 at the end of the article contains four examples of current scales you might consider using to measure trust. You can decide which is best for your application, or you might pull some of the items from any of the scales if you aren’t looking to publish your findings in an academic journal, yet want to use items that have been subjected to some level of empirical scrutiny.

Behavioral Metrics: Observing What Users Do, Not Just What They Say

People’s true feelings are often revealed in their actions. You can use behaviors that reflect the specific context of use for your product. Here are a few general metrics that might apply to most AI tools that give insight into users’ behavior and the trust they place in your tool.

- Correction Rate

How often do users manually edit, undo, or ignore the AI’s output? A high correction rate is a powerful signal of low trust in its Ability. - Verification Behavior

Do users switch to Google or open another application to double-check the AI’s work? This indicates they don’t trust it as a standalone source of truth. It can also potentially be positive that they are calibrating their trust in the system when they use it up front. - Disengagement

Do users turn the AI feature off? Do they stop using it entirely after one bad experience? This is the ultimate behavioral vote of no confidence.

Designing For Trust: From Principles to Pixels

Once you’ve researched and measured trust, you can begin to design for it. This means translating psychological principles into tangible interface elements and user flows.

Designing for Competence and Predictability

- Set Clear Expectations

Use onboarding, tooltips, and empty states to honestly communicate what the AI is good at and where it might struggle. A simple “I’m still learning about [topic X], so please double-check my answers” can work wonders. - Show Confidence Levels

Instead of just giving an answer, have the AI signal its own uncertainty. A weather app that says “70% chance of rain” is more trustworthy than one that just says “It will rain” and is wrong. An AI could say, “I’m 85% confident in this summary,” or highlight sentences it’s less sure about.

The Role of Explainability (XAI) and Transparency

Explainability isn’t about showing users the code. It’s about providing a useful, human-understandable rationale for a decision.

Instead of:

“Here is your recommendation.”Try:

“Because you frequently read articles about UX research methods, I’m recommending this new piece on measuring trust in AI.”

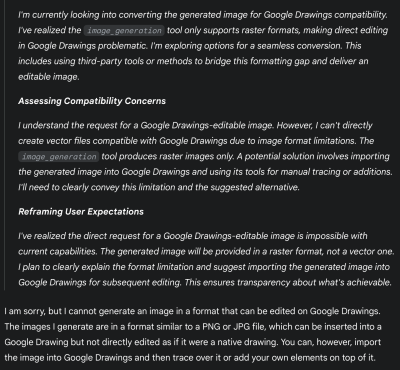

This addition transforms AI from an opaque oracle to a transparent logical partner.

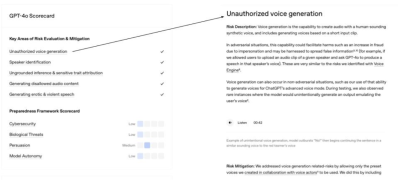

Many of the popular AI tools (e.g., ChatGPT and Gemini) show the thinking that went into the response they provide to a user. Figure 3 shows the steps Gemini went through to provide me with a non-response when I asked it to help me generate the masterpiece displayed above in Figure 2. While this might be more information than most users care to see, it provides a useful resource for a user to audit how the response came to be, and it has provided me with instructions on how I might proceed to address my task.

Figure 4 shows an example of a scorecard OpenAI makes available as an attempt to increase users’ trust. These scorecards are available for each ChatGPT model and go into the specifics of how the models perform as it relates to key areas such as hallucinations, health-based conversations, and much more. In reading the scorecards closely, you will see that no AI model is perfect in any area. The user must remain in a trust but verify mode to make the relationship between human reality and AI work in a way that avoids potential catastrophe. There should never be blind trust in an LLM.

Designing For Trust Repair (Graceful Error Handling) And Not Knowing an Answer

Your AI will make mistakes.

Trust is not determined by the absence of errors, but by how those errors are handled.

- Acknowledge Errors Humbly.

When the AI is wrong, it should be able to state that clearly. “My apologies, I misunderstood that request. Could you please rephrase it?” is far better than silence or a nonsensical answer. - Provide an Easy Path to Correction.

Make feedback mechanisms (like thumbs up/down or a correction box) obvious. More importantly, show that the feedback is being used. A “Thank you, I’m learning from your correction” can help rebuild trust after a failure. As long as this is true.

Likewise, your AI can’t know everything. You should acknowledge this to your users.

UX practitioners should work with the product team to ensure that honesty about limitations is a core product principle.

“

This can include the following:

- Establish User-Centric Metrics: Instead of only measuring engagement or task completion, UXers can work with product managers to define and track metrics like:

- Hallucination Rate: The frequency with which the AI provides verifiably false information.

- Successful Fallback Rate: How often the AI correctly identifies its inability to answer and provides a helpful, honest alternative.

- Prioritize the “I Don’t Know” Experience: UXers should frame the “I don’t know” response not as an error state, but as a critical feature. They must lobby for the engineering and content resources needed to design a high-quality, helpful fallback experience.

UX Writing And Trust

All of these considerations highlight the critical role of UX writing in the development of trustworthy AI. UX writers are the architects of the AI’s voice and tone, ensuring that its communication is clear, honest, and empathetic. They translate complex technical processes into user-friendly explanations, craft helpful error messages, and design conversational flows that build confidence and rapport. Without thoughtful UX writing, even the most technologically advanced AI can feel opaque and untrustworthy.

The words and phrases an AI uses are its primary interface with users. UX writers are uniquely positioned to shape this interaction, ensuring that every tooltip, prompt, and response contributes to a positive and trust-building experience. Their expertise in human-centered language and design is indispensable for creating AI systems that not only perform well but also earn and maintain the trust of their users.

A few key areas for UX writers to focus on when writing for AI include:

- Prioritize Transparency

Clearly communicate the AI’s capabilities and limitations, especially when it’s still learning or if its responses are generated rather than factual. Use phrases that indicate the AI’s nature, such as “As an AI, I can…” or “This is a generated response.” - Design for Explainability

When the AI provides a recommendation, decision, or complex output, strive to explain the reasoning behind it in an understandable way. This builds trust by showing the user how the AI arrived at its conclusion. - Emphasize User Control

Empower users by providing clear ways to provide feedback, correct errors, or opt out of certain AI features. This reinforces the idea that the user is in control and the AI is a tool to assist them.

The Ethical Tightrope: The Researcher’s Responsibility

As the people responsible for understanding and advocating for users, we walk an ethical tightrope. Our work comes with profound responsibilities.

The Danger Of “Trustwashing”

We must draw a hard line between designing for calibrated trust and designing to manipulate users into trusting a flawed, biased, or harmful system. For example, if an AI system designed for loan approvals consistently discriminates against certain demographics but presents a user interface that implies fairness and transparency, this would be an instance of trustwashing.

Another example of trustwashing would be if an AI medical diagnostic tool occasionally misdiagnoses conditions, but the user interface makes it seem infallible. To avoid trustwashing, the system should clearly communicate the potential for error and the need for human oversight.

Our goal must be to create genuinely trustworthy systems, not just the perception of trust. Using these principles to lull users into a false sense of security is a betrayal of our professional ethics.

To avoid and prevent trustwashing, researchers and UX teams should:

- Prioritize genuine transparency.

Clearly communicate the limitations, biases, and uncertainties of AI systems. Don’t overstate capabilities or obscure potential risks. - Conduct rigorous, independent evaluations.

Go beyond internal testing and seek external validation of system performance, fairness, and robustness. - Engage with diverse stakeholders.

Involve users, ethics experts, and impacted communities in the design, development, and evaluation processes to identify potential harms and build genuine trust. - Be accountable for outcomes.

Take responsibility for the societal impact of AI systems, even if unintended. Establish mechanisms for redress and continuous improvement. - Be accountable for outcomes.

Establish clear and accessible mechanisms for redress when harm occurs, ensuring that individuals and communities affected by AI decisions have avenues for recourse and compensation. - Educate the public.

Help users understand how AI works, its limitations, and what to look for when evaluating AI products. - Advocate for ethical guidelines and regulations.

Support the development and implementation of industry standards and policies that promote responsible AI development and prevent deceptive practices. - Be wary of marketing hype.

Critically assess claims made about AI systems, especially those that emphasize “trustworthiness” without clear evidence or detailed explanations. - Publish negative findings.

Don’t shy away from reporting challenges, failures, or ethical dilemmas encountered during research. Transparency about limitations is crucial for building long-term trust. - Focus on user empowerment.

Design systems that give users control, agency, and understanding rather than just passively accepting AI outputs.

The Duty To Advocate

When our research uncovers deep-seated distrust or potential harm — like the fear of job displacement — our job has only just begun. We have an ethical duty to advocate for that user. In my experience directing research teams, I’ve seen that the hardest part of our job is often carrying these uncomfortable truths into rooms where decisions are made. We must champion these findings and advocate for design and strategy shifts that prioritize user well-being, even when it challenges the product roadmap.

I personally try to approach presenting this information as an opportunity for growth and improvement, rather than a negative challenge.

For example, instead of stating “Users don’t trust our AI because they fear job displacement,” I might frame it as “Addressing user concerns about job displacement presents a significant opportunity to build deeper trust and long-term loyalty by demonstrating our commitment to responsible AI development and exploring features that enhance human capabilities rather than replace them.” This reframing can shift the conversation from a defensive posture to a proactive, problem-solving mindset, encouraging collaboration and innovative solutions that ultimately benefit both the user and the business.

It’s no secret that one of the more appealing areas for businesses to use AI is in workforce reduction. In reality, there will be many cases where businesses look to cut 10–20% of a particular job family due to the perceived efficiency gains of AI. However, giving users the opportunity to shape the product may steer it in a direction that makes them feel safer than if they do not provide feedback. We should not attempt to convince users they are wrong if they are distrustful of AI. We should appreciate that they are willing to provide feedback, creating an experience that is informed by the human experts who have long been doing the task being automated.

Conclusion: Building Our Digital Future On A Foundation Of Trust

The rise of AI is not the first major technological shift our field has faced. However, it presents one of the most significant psychological challenges of our current time. Building products that are not just usable but also responsible, humane, and trustworthy is our obligation as UX professionals.

Trust is not a soft metric. It is the fundamental currency of any successful human-technology relationship. By understanding its psychological roots, measuring it with rigor, and designing for it with intent and integrity, we can move from creating “intelligent” products to building a future where users can place their confidence in the tools they use every day. A trust that is earned and deserved.

Table 1: Published Academic Scales Measuring Trust In Automated Systems

| Survey Tool Name | Focus | Key Dimensions of Trust | Citation |

|---|---|---|---|

| Trust in Automation Scale | 12-item questionnaire to assess trust between people and automated systems. | Measures a general level of trust, including reliability, predictability, and confidence. | Jian, J. Y., Bisantz, A. M., & Drury, C. G. (2000). Foundations for an empirically determined scale of trust in automated systems. International Journal of Cognitive Ergonomics, 4(1), 53–71. |

| Trust of Automated Systems Test (TOAST) | 9-items used to measure user trust in a variety of automated systems, designed for quick administration. | Divided into two main subscales: Understanding (user’s comprehension of the system) and Performance (belief in the system’s effectiveness). | Wojton, H. M., Porter, D., Lane, S. T., Bieber, C., & Madhavan, P. (2020). Initial validation of the trust of automated systems test (TOAST). (PDF) The Journal of Social Psychology, 160(6), 735–750. |

| Trust in Automation Questionnaire | A 19-item questionnaire capable of predicting user reliance on automated systems. A 2-item subscale is available for quick assessments; the full tool is recommended for a more thorough analysis. | Measures 6 factors: Reliability, Understandability, Propensity to trust, Intentions of developers, Familiarity, Trust in automation | Körber, M. (2018). Theoretical considerations and development of a questionnaire to measure trust in automation. In Proceedings 20th Triennial Congress of the IEA. Springer. |

| Human Computer Trust Scale | 12-item questionnaire created to provide an empirically sound tool for assessing user trust in technology. | Divided into two key factors:

|

Siddharth Gulati, Sonia Sousa & David Lamas (2019): Design, development and evaluation of a human-computer trust scale, (PDF) Behaviour & Information Technology |

Appendix A: Trust-Building Tactics Checklist

To design for calibrated trust, consider implementing the following tactics, organized by the four pillars of trust:

1. Ability (Competence) & Predictability

- ✅ Set Clear Expectations: Use onboarding, tooltips, and empty states to honestly communicate the AI’s strengths and weaknesses.

- ✅ Show Confidence Levels: Display the AI’s uncertainty (e.g., “70% chance,” “85% confident”) or highlight less certain parts of its output.

- ✅ Provide Explainability (XAI): Offer useful, human-understandable rationales for the AI’s decisions or recommendations (e.g., “Because you frequently read X, I’m recommending Y”).

- ✅ Design for Graceful Error Handling:

- ✅ Acknowledge errors humbly (e.g., “My apologies, I misunderstood that request.”).

- ✅ Provide easy paths to correction (e. ] g., prominent feedback mechanisms like thumbs up/down).

- ✅ Show that feedback is being used (e.g., “Thank you, I’m learning from your correction”).

- ✅ Design for “I Don’t Know” Responses:

- ✅ Acknowledge limitations honestly.

- ✅ Prioritize a high-quality, helpful fallback experience when the AI cannot answer.

- ✅ Prioritize Transparency: Clearly communicate the AI’s capabilities and limitations, especially if responses are generated.

2. Benevolence

- ✅ Address Existential Fears: When users express concerns (e.g., job displacement), validate their concerns and reframe the feedback into actionable insights about collaborative tools.

- ✅ Prioritize User Well-being: Advocate for design and strategy shifts that prioritize user well-being, even if it challenges the product roadmap.

- ✅ Emphasize User Control: Provide clear ways for users to give feedback, correct errors, or opt out of AI features.

3. Integrity

- ✅ Adhere to Ethical Principles: Ensure the AI operates on predictable, ethical principles, demonstrating fairness and honesty.

- ✅ Prioritize Genuine Transparency: Clearly communicate the limitations, biases, and uncertainties of AI systems; avoid overstating capabilities or obscuring risks.

- ✅ Conduct Rigorous, Independent Evaluations: Seek external validation of system performance, fairness, and robustness to mitigate bias.

- ✅ Engage Diverse Stakeholders: Involve users, ethics experts, and impacted communities in the design and evaluation processes.

- ✅ Be Accountable for Outcomes: Establish clear mechanisms for redress and continuous improvement for societal impacts, even if unintended.

- ✅ Educate the Public: Help users understand how AI works, its limitations, and how to evaluate AI products.

- ✅ Advocate for Ethical Guidelines: Support the development and implementation of industry standards and policies that promote responsible AI.

- ✅ Be Wary of Marketing Hype: Critically assess claims about AI “trustworthiness” and demand verifiable data.

- ✅ Publish Negative Findings: Be transparent about challenges, failures, or ethical dilemmas encountered during research.

4. Predictability & Reliability

- ✅ Set Clear Expectations: Use onboarding, tooltips, and empty states to honestly communicate what the AI is good at and where it might struggle.

- ✅ Show Confidence Levels: Instead of just giving an answer, have the AI signal its own uncertainty.

- ✅ Provide Explainability (XAI) and Transparency: Offer a useful, human-understandable rationale for AI decisions.

- ✅ Design for Graceful Error Handling: Acknowledge errors humbly and provide easy paths to correction.

- ✅ Prioritize the “I Don’t Know” Experience: Frame “I don’t know” as a feature and design a high-quality fallback experience.

- ✅ Prioritize Transparency (UX Writing): Clearly communicate the AI’s capabilities and limitations, especially when it’s still learning or if responses are generated.

- ✅ Design for Explainability (UX Writing): Explain the reasoning behind AI recommendations, decisions, or complex outputs.

(yk)

How To Minimize The Environmental Impact Of Your Website

How To Minimize The Environmental Impact Of Your Website How To Minimize The Environmental Impact Of Your Website James Chudley 2025-09-18T10:00:00+00:00 2025-09-24T15:02:52+00:00 Climate change is the single biggest health threat to humanity, accelerated by human activities such as the burning of fossil fuels, which generate […]

Accessibility

How To Minimize The Environmental Impact Of Your Website

James Chudley 2025-09-18T10:00:00+00:00

2025-09-24T15:02:52+00:00

Climate change is the single biggest health threat to humanity, accelerated by human activities such as the burning of fossil fuels, which generate greenhouse gases that trap the sun’s heat.

The average temperature of the earth’s surface is now 1.2°C warmer than it was in the late 1800’s, and projected to more than double by the end of the century.

The consequences of climate change include intense droughts, water shortages, severe fires, melting polar ice, catastrophic storms, and declining biodiversity.

The Internet Is A Significant Part Of The Problem

Shockingly, the internet is responsible for higher global greenhouse emissions than the aviation industry, and is projected to be responsible for 14% of all global greenhouse gas emissions by 2040.

If the internet were a country, it would be the 4th largest polluter in the world and represents the largest coal-powered machine on the planet.

But how can something digital like the internet produce harmful emissions?

Internet emissions come from powering the infrastructure that drives the internet, such as the vast data centres and data transmission networks that consume huge amounts of electricity.

Internet emissions also come from the global manufacturing, distribution, and usage of the estimated 30.5 billion devices (phones, laptops, etc.) that we use to access the internet.

Unsurprisingly, internet related emissions are increasing, given that 60% of the world’s population spend, on average, 40% of their waking hours online.

We Must Urgently Reduce The Environmental Impact Of The Internet

As responsible digital professionals, we must act quickly to minimise the environmental impact of our work.

It is encouraging to see the UK government encourage action by adding “Minimise environmental impact” to their best practice design principles, but there is still too much talking and not enough corrective action taking place within our industry.

The reality of many tightly constrained, fast-paced, and commercially driven web projects is that minimising environmental impact is far from the agenda.

So how can we make the environment more of a priority and talk about it in ways that stakeholders will listen to?

A eureka moment on a recent web optimisation project gave me an idea.

My Eureka Moment

I led a project to optimise the mobile performance of www.talktofrank.com, a government drug advice website that aims to keep everyone safe from harm.

Mobile performance is critically important for the success of this service to ensure that users with older mobile devices and those using slower network connections can still access the information they need.

Our work to minimise page weights focused on purely technical changes that our developer made following recommendations from tools such as Google Lighthouse that reduced the size of the webpages of a key user journey by up to 80%. This resulted in pages downloading up to 30% faster and the carbon footprint of the journey being reduced by 80%.

We hadn’t set out to reduce the carbon footprint, but seeing these results led to my eureka moment.

I realised that by minimising page weights, you improve performance (which is a win for users and service owners) and also consume less energy (due to needing to transfer and store less data), creating additional benefits for the planet — so everyone wins.

This felt like a breakthrough because business, user, and environmental requirements are often at odds with one another. By focussing on minimising websites to be as simple, lightweight and easy to use as possible you get benefits that extend beyond the triple bottom line of people, planet and profit to include performance and purpose.

So why is ‘minimising’ such a great digital sustainability strategy?

- Profit

Website providers win because their website becomes more efficient and more likely to meet its intended outcomes, and a lighter site should also lead to lower hosting bills. - People

People win because they get to use a website that downloads faster, is quick and easy to use because it’s been intentionally designed to be as simple as possible, enabling them to complete their tasks with the minimum amount of effort and mental energy. - Performance

Lightweight webpages download faster so perform better for users, particularly those on older devices and on slower network connections. - Planet

The planet wins because the amount of energy (and associated emissions) that is required to deliver the website is reduced. - Purpose

We know that we do our best work when we feel a sense of purpose. It is hugely gratifying as a digital professional to know that our work is doing good in the world and contributing to making things better for people and the environment.

In order to prioritise the environment, we need to be able to speak confidently in a language that will resonate with the business and ensure that any investment in time and resources yields the widest range of benefits possible.

So even if you feel that the environment is a very low priority on your projects, focusing on minimising page weights to improve performance (which is generally high on the agenda) presents the perfect trojan horse for an environmental agenda (should you need one).

Doing the right thing isn’t always easy, but we’ve done it before when managing to prioritise issues such as usability, accessibility, and inclusion on digital projects.

Many of the things that make websites easier to use, more accessible, and more effective also help to minimise their environmental impact, so the things you need to do will feel familiar and achievable, so don’t worry about it all being another new thing to learn about!

So this all makes sense in theory, but what’s the master plan to use when putting it into practice?

The Masterplan

The masterplan for creating websites that have minimal environmental impact is to focus on offering the maximum value from the minimum input of energy.

It’s an adaptation of Buckminister Fuller’s ‘Dymaxion’ principle, which is one of his many progressive and groundbreaking sustainability strategies for living and surviving on a planet with finite resources.

Inputs of energy include both the electrical energy that is required to operate websites and also the mental energy that is required to use them.

You can achieve this by minimising websites to their core content, features, and functionality, ensuring that everything can be justified from the perspective of meeting a business or user need. This means that anything that isn’t adding a proportional amount of value to the amount of energy it requires to provide it should be removed.

So that’s the masterplan, but how do you put it into practice?

Decarbonise Your Highest Value User Journeys

I’ve developed a new approach called ‘Decarbonising User Journeys’ that will help you to minimise the environmental impact of your website and maximise its performance.

Note: The approach deliberately focuses on optimising key user journeys and not entire websites to keep things manageable and to make it easier to get started.

The secret here is to start small, demonstrate improvements, and then scale.

The approach consists of five simple steps:

- Identify your highest value user journey,

- Benchmark your user journey,

- Set targets,

- Decarbonise your user journey,

- Track and share your progress.

Here’s how it works.

Step 1: Identify Your Highest Value User Journey

Your highest value user journey might be the one that your users value the most, the one that brings you the highest revenue, or the one that is fundamental to the success of your organisation.

You could also focus on a user journey that you know is performing particularly badly and has the potential to deliver significant business and user benefits if improved.

You may have lots of important user journeys, and it’s fine to decarbonise multiple journeys in parallel if you have the resources, but I’d recommend starting with one first to keep things simple.

To bring this to life, let’s consider a hypothetical example of a premiership football club trying to decarbonise its online ticket-buying journey that receives high levels of traffic and is responsible for a significant proportion of its weekly income.

Step 2: Benchmark Your User Journey

Once you’ve selected your user journey, you need to benchmark it in terms of how well it meets user needs, the value it offers your organisation, and its carbon footprint.

It is vital that you understand the job it needs to do and how well it is doing it before you start to decarbonise it. There is no point in removing elements of the journey in an effort to reduce its carbon footprint, for example, if you compromise its ability to meet a key user or business need.

You can benchmark how well your user journey is meeting user needs by conducting user research alongside analysing existing customer feedback. Interviews with business stakeholders will help you to understand the value that your journey is providing the organisation and how well business needs are being met.

You can benchmark the carbon footprint and performance of your user journey using online tools such as Cardamon, Ecograder, Website Carbon Calculator, Google Lighthouse, and Bioscore. Make sure you have your analytics data to hand to help get the most accurate estimate of your footprint.

To use these tools, simply add the URL of each page of your journey, and they will give you a range of information such as page weight, energy rating, and carbon emissions. Google Lighthouse works slightly differently via a browser plugin and generates a really useful and detailed performance report as opposed to giving you a carbon rating.

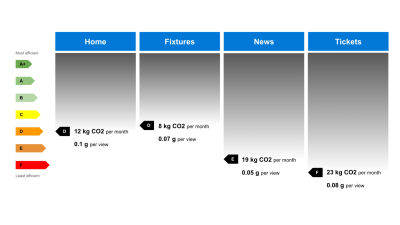

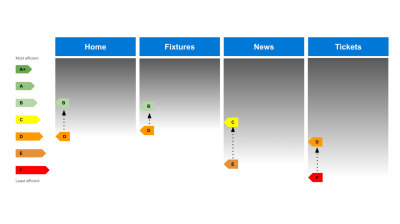

A great way to bring your benchmarking scores to life is to visualise them in a similar way to how you would present a customer journey map or service blueprint.

This example focuses on just communicating the carbon footprint of the user journey, but you can also add more swimlanes to communicate how well the journey is performing from a user and business perspective, too, adding user pain points, quotes, and business metrics where appropriate.

I’ve found that adding the energy efficiency ratings is really effective because it’s an approach that people recognise from their household appliances. This adds a useful context to just showing the weights (such as grams or kilograms) of CO2, which are generally meaningless to people.

Within my benchmarking reports, I also add a set of benchmarking data for every page within the user journey. This gives your stakeholders a more detailed breakdown and a simple summary alongside a snapshot of the benchmarked page.

Your benchmarking activities will give you a really clear picture of where remedial work is required from an environmental, user, and business point of view.

In our football user journey example, it’s clear that the ‘News’ and ‘Tickets’ pages need some attention to reduce their carbon footprint, so they would be a sensible priority for decarbonising.

Step 3: Set Targets

Use your benchmarking results to help you set targets to aim for, such as a carbon budget, energy efficiency, maximum page weight, and minimum Google Lighthouse performance targets for each individual page, in addition to your existing UX metrics and business KPIs.

There is no right or wrong way to set targets. Choose what you think feels achievable and viable for your business, and you’ll only learn how reasonable and achievable they are when you begin to decarbonise your user journeys.

Setting targets is important because it gives you something to aim for and keeps you focused and accountable. The quantitative nature of this work is great because it gives you the ability to quickly demonstrate the positive impact of your work, making it easier to justify the time and resources you are dedicating to it.

Step 4: Decarbonise Your User Journey

Your objective now is to decarbonise your user journey by minimising page weights, improving your Lighthouse performance rating, and minimising pages so that they meet both user and business needs in the most efficient, simple, and effective way possible.

It’s up to you how you approach this depending on the resources and skills that you have, you can focus on specific pages or addressing a specific problem area such as heavyweight images or videos across the entire user journey.

Here’s a list of activities that will all help to reduce the carbon footprint of your user journey:

- Work through the recommendations in the ‘diagnostics’ section of your Google Lighthouse report to help optimise page performance.

- Switch to a green hosting provider if you are not already using one. Use the Green Web Directory to help you choose one.

- Work through the W3C Web Sustainability Guidelines, implementing the most relevant guidelines to your specific user journey.

- Remove anything that is not adding any user or business value.

- Reduce the amount of information on your webpages to make them easier to read and less overwhelming for people.

- Replace content with a lighter-weight alternative (such as swapping a video for text) if the lighter-weight alternative provides the same value.

- Optimise assets such as photos, videos, and code to reduce file sizes.

- Remove any barriers to accessing your website and any distractions that are getting in the way.

- Re-use familiar components and design patterns to make your websites quicker and easier to use.

- Write simply and clearly in plain English to help people get the most value from your website and to help them avoid making mistakes that waste time and energy to resolve.

- Fix any usability issues you identified during your benchmarking to ensure that your website is as easy to use and useful as possible.

- Ensure your user journey is as accessible as possible so the widest possible audience can benefit from using it, offsetting the environmental cost of providing the website.

Step 5: Track And Share Your Progress

As you decarbonise your user journeys, use the benchmarking tools from step 2 to track your progress against the targets you set in step 3 and share your progress as part of your wider sustainability reporting initiatives.

All being well at this point, you will have the numbers to demonstrate how the performance of your user journey has improved and also how you have managed to reduce its carbon footprint.

Share these results with the business as soon as you have them to help you secure the resources to continue the work and initiate similar work on other high-value user journeys.

You should also start to communicate your progress with your users.

It’s important that they are made aware of the carbon footprint of their digital activity and empowered to make informed choices about the environmental impact of the websites that they use.

Ideally, every website should communicate the emissions generated from viewing their pages to help people make these informed choices and also to encourage website providers to minimise their emissions if they are being displayed publicly.

Often, people will have no choice but to use a specific website to complete a specific task, so it is the responsibility of the website provider to ensure the environmental impact of using their website is as small as possible.

You can also help to raise awareness of the environmental impact of websites and what you are doing to minimise your own impact by publishing a digital sustainability statement, such as Unilever’s, as shown below.

A good digital sustainability statement should acknowledge the environmental impact of your website, what you have done to reduce it, and what you plan to do next to minimise it further.

As an industry, we should normalise publishing digital sustainability statements in the same way that accessibility statements have become a standard addition to website footers.

“

Useful Decarbonising Principles

Keep these principles in mind to help you decarbonise your user journeys:

- More doing and less talking.

Start decarbonising your user journeys as soon as possible to accelerate your learning and positive change. - Start small.

Starting small by decarbonising an individual journey makes it easier to get started and generates results to demonstrate value faster. - Aim to do more with less.

Minimise what you offer to ensure you are providing the maximum amount of value for the energy you are consuming. - Make your website as useful and as easy to use as possible.

Useful websites can justify the energy they consume to provide them, ensuring they are net positive in terms of doing more good than harm. - Focus on progress over perfection.

Websites are never finished or perfect but they can always be improved, every small improvement you make will make a difference.

Start Decarbonising Your User Journeys Today

Decarbonising user journeys shouldn’t be done as a one-off, reserved for the next time that you decide to redesign or replatform your website; it should happen on a continual basis as part of your broader digital sustainability strategy.

We know that websites are never finished and that the best websites continually improve as both user and business needs change. I’d like to encourage people to adopt the same mindset when it comes to minimising the environmental impact of their websites.

Decarbonising will happen most effectively when digital professionals challenge themselves on a daily basis to ‘minimise’ the things they are working on.

“

This avoids building ‘carbon debt’ that consists of compounding technical and design debt within our websites, which is always harder to retrospectively remove than avoid in the first place.

By taking a pragmatic approach, such as optimising high-value user journeys and aligning with business metrics such as performance, we stand the best possible chance of making digital sustainability a priority.

You’ll have noticed that, other than using website carbon calculator tools, this approach doesn’t require any skills that don’t already exist within typical digital teams today. This is great because it means you’ve already got the skills that you need to do this important work.

I would encourage everyone to raise the issue of the environmental impact of the internet in their next team meeting and to try this decarbonising approach to create better outcomes for people, profit, performance, purpose, and the planet.

Good luck!

(yk)

Functional Personas With AI: A Lean, Practical Workflow

Functional Personas With AI: A Lean, Practical Workflow Functional Personas With AI: A Lean, Practical Workflow Paul Boag 2025-09-16T08:00:00+00:00 2025-09-17T15:32:19+00:00 Traditional personas suck for UX work. They obsess over marketing metrics like age, income, and job titles while missing what actually matters in design: what […]

Accessibility

Functional Personas With AI: A Lean, Practical Workflow

Paul Boag 2025-09-16T08:00:00+00:00

2025-09-17T15:32:19+00:00

Traditional personas suck for UX work. They obsess over marketing metrics like age, income, and job titles while missing what actually matters in design: what people are trying to accomplish.

Functional personas, on the other hand, focus on what people are trying to do, not who they are on paper. With a simple AI‑assisted workflow, you can build and maintain personas that actually guide design, content, and conversion decisions.

- Keep users front of mind with task‑driven personas,

- Skip fragile demographics; center on goals, questions, and blockers,

- Use AI to process your messy inputs fast and fill research gaps,

- Validate lightly, ship confidently, and keep them updated.

In this article, I want to breathe new life into a stale UX asset.

For too long, personas have been something that many of us just created, despite the considerable work that goes into them, only to find they have limited usefulness.

I know that many of you may have given up on them entirely, but I am hoping in this post to encourage you that it is possible to create truly useful personas in a lightweight way.

Why Personas Still Matter

Personas give you a shared lens. When everyone uses the same reference point, you cut debate and make better calls. For UX designers, developers, and digital teams, that shared lens keeps you from designing in silos and helps you prioritize work that genuinely improves the experience.

I use personas as a quick test: Would this change help this user complete their task faster, with fewer doubts? If the answer is no (or a shrug), it’s probably a sign the idea isn’t worth pursuing.

From Demographics To Function

Traditional personas tell you someone’s age, job title, or favorite brand. That makes a nice poster, but it rarely changes design or copy.

Functional personas flip the script. They describe:

- Goals & tasks: What the person is here to achieve.

- Questions & objections: What they need to know before they act.

- Touchpoints: How the person interacts with the organization.

- Service gaps: How the company might be letting this persona down.

When you center on tasks and friction, you get direct lines from user needs to UI decisions, content, and conversion paths.

But remember, this list isn’t set in stone — adapt it to what’s actually useful in your specific situation.

One of the biggest problems with traditional personas was following a rigid template regardless of whether it made sense for your project. We must not fall into that same mistake with functional personas.

“

The Benefits of Functional Personas

For small startups, functional personas reduce wasted effort. For enterprise teams, they keep sprawling projects grounded in what matters most.

However, because of the way we are going to produce our personas, they provide certain benefits in either case:

- Lighten the load: They’re easier to update without large research cycles.

- Stay current: Because they are easy to produce, we can update them more often.

- Tie to outcomes: Tasks, objections, and proof points map straight to funnels, flows, and product decisions.

We can deliver these benefits because we are going to use AI to help us, rather than carrying out a lot of time-consuming new research.

How AI Helps Us Get There

Of course, doing fresh research is always preferable. But in many cases, it is not feasible due to time or budget constraints. I would argue that using AI to help us create personas based on existing assets is preferable to having no focus on user attention at all.

AI tools can chew through the inputs you already have (surveys, analytics, chat logs, reviews) and surface patterns you can act on. They also help you scan public conversations around your product category to fill gaps.

I therefore recommend using AI to:

- Synthesize inputs: Turn scattered notes into clean themes.

- Spot segments by need: Group people by jobs‑to‑be‑done, not demographics.

- Draft quickly: Produce first‑pass personas and sample journeys in minutes.

- Iterate with stakeholders: Update on the fly as you get feedback.

AI doesn’t remove the need for traditional research. Rather, it is a way of extracting more value from the scattered insights into users that already exist within an organization or online.

“

The Workflow

Here’s how to move from scattered inputs to usable personas. Each step builds on the last, so treat it as a cycle you can repeat as projects evolve.

1. Set Up A Dedicated Workspace

Create a dedicated space within your AI tool for this work. Most AI platforms offer project management features that let you organize files and conversations:

- In ChatGPT and Claude, use “Projects” to store context and instructions.

- In Perplexity, Gemini and CoPilot similar functionality is referred to as “Spaces.”

This project space becomes your central repository where all uploaded documents, research data, and generated personas live together. The AI will maintain context between sessions, so you won’t have to re-upload materials each time you iterate. This structured approach makes your workflow more efficient and helps the AI deliver more consistent results.

2. Write Clear Instructions

Next, you can brief your AI project so that it understands what it wants from you. For example:

“Act as a user researcher. Create realistic, functional personas using the project files and public research. Segment by needs, tasks, questions, pain points, and goals. Show your reasoning.”

Asking for a rationale gives you a paper trail you can defend to stakeholders.

3. Upload What You’ve Got (Even If It’s Messy)

This is where things get really powerful.

Upload everything (and I mean everything) you can put your hands on relating to the user. Old surveys, past personas, analytics screenshots, FAQs, support tickets, review snippets; dump them all in. The more varied the sources, the stronger the triangulation.

4. Run Focused External Research

Once you have done that, you can supplement that data by getting AI to carry out “deep research” about your brand. Have AI scan recent (I often focus on the last year) public conversations for your brand, product space, or competitors. Look for:

- Who’s talking and what they’re trying to do;

- Common questions and blockers;

- Phrases people use (great for copywriting).

Save the report you get back into your project.

5. Propose Segments By Need

Once you have done that, ask AI to suggest segments based on tasks and friction points (not demographics). Push back until each segment is distinct, observable, and actionable. If two would behave the same way in your flow, merge them.

This takes a little bit of trial and error and is where your experience really comes into play.

6. Generate Draft Personas

Now you have your segments, the next step is to draft your personas. Use a simple template so the document is read and used. If your personas become too complicated, people will not read them. Each persona should:

- State goals and tasks,

- List objections and blockers,

- Highlight pain points,

- Show touchpoints,

- Identify service gaps.

Below is a sample template you can work with:

# Persona Title: e.g. Savvy Shopper

- Person's Name: e.g. John Smith.

- Age: e.g. 24

- Job: e.g. Social Media Manager

"A quote that sums up the persona's general attitude"

## Primary Goal

What they’re here to achieve (1–2 lines).

## Key Tasks

• Task 1

• Task 2

• Task 3

## Questions & Objections

• What do they need to know before they act?

• What might make them hesitate?

## Pain Points

• Where do they get stuck?

• What feels risky, slow, or confusing?

## Touchpoints

• What channels are they most commonly interacting with?

## Service Gaps

• How is the organization currently failing this persona?

Remember, you should customize this to reflect what will prove useful within your organization.

7. Validate

It is important to validate that what the AI has produced is realistic. Obviously, no persona is a true representation as it is a snapshot in time of a Hypothetical user. However, we do want it to be as accurate as possible.

Share your drafts with colleagues who interact regularly with real users — people in support cells or research teams. Where possible, test with a handful of users. Then cut anything that you can’t defend or correct any errors that are identified.

Troubleshooting & Guardrails

As you work through the above process, you will encounter problems. Here are common pitfalls and how to avoid them:

- Too many personas?

Merge until each one changes a design or copy decision. Three strong personas beat seven weak ones. - Stakeholder wants demographics?

Only include details that affect behavior. Otherwise, leave them out. Suggest separate personas for other functions (such as marketing). - AI hallucinations?

Always ask for a rationale or sources. Cross‑check with your own data and customer‑facing teams. - Not enough data?

Mark assumptions clearly, then validate with quick interviews, surveys, or usability tests.

Making Personas Useful In Practice

The most important thing to remember is to actually use your personas once they’ve been created. They can easily become forgotten PDFs rather than active tools. Instead, personas should shape your work and be referenced regularly. Here are some ways you can put personas to work:

- Navigation & IA: Structure menus by top tasks.

- Content & Proof: Map objections to FAQs, case studies, and microcopy.

- Flows & UI: Streamline steps to match how people think.

- Conversion: Match CTAs to personas’ readiness, goals, and pain points.

- Measurement: Track KPIs that map to personas, not vanity metrics.

With this approach, personas evolve from static deliverables into dynamic reference points your whole team can rely on.

Keep Them Alive

Treat personas as a living toolkit. Schedule a refresh every quarter or after major product changes. Rerun the research pass, regenerate summaries, and archive outdated assumptions. The goal isn’t perfection; it’s keeping them relevant enough to guide decisions.

Bottom Line

Functional personas are faster to build, easier to maintain, and better aligned with real user behavior. By combining AI’s speed with human judgment, you can create personas that don’t just sit in a slide deck; they actively shape better products, clearer interfaces, and smoother experiences.

(yk)

From Data To Decisions: UX Strategies For Real-Time Dashboards

From Data To Decisions: UX Strategies For Real-Time Dashboards From Data To Decisions: UX Strategies For Real-Time Dashboards Karan Rawal 2025-09-12T15:00:00+00:00 2025-09-17T15:32:19+00:00 I once worked with a fleet operations team that monitored dozens of vehicles in multiple cities. Their dashboard showed fuel consumption, live GPS […]

Accessibility

From Data To Decisions: UX Strategies For Real-Time Dashboards

Karan Rawal 2025-09-12T15:00:00+00:00

2025-09-17T15:32:19+00:00

I once worked with a fleet operations team that monitored dozens of vehicles in multiple cities. Their dashboard showed fuel consumption, live GPS locations, and real-time driver updates. Yet the team struggled to see what needed urgent attention. The problem was not a lack of data but a lack of clear indicators to support decision-making. There were no priorities, alerts, or context to highlight what mattered most at any moment.

Real-time dashboards are now critical decision-making tools in industries like logistics, manufacturing, finance, and healthcare. However, many of them fail to help users make timely and confident decisions, even when they show live data.

Designing for real-time use is very different from designing static dashboards. The challenge is not only presenting metrics but enabling decisions under pressure. Real-time users face limited time and a high cognitive load. They need clarity on actions, not just access to raw data.