How To Design For (And With) Deaf People

Practical UX guidelines to keep in mind for 466 million people who experience hearing loss. More design patterns in Smart Interface Design Patterns, a friendly video course on UX and design patterns b

Accessibility

Building An Offline-Friendly Image Upload System

Poor internet connectivity doesn’t have to mean poor UX. With PWA technologies like IndexedDB, service workers, and the Background Sync API, you can build an offline-friendly image upload system tha

Javascript

Creating The “Moving Highlight” Navigation Bar With JavaScript And CSS

In this tutorial, Blake Lundquist walks us through two methods of creating the “moving-highlight” navigation pattern using only plain JavaScript and CSS. The first technique uses the getBoundingCl

Javascript

Build A Static RSS Reader To Fight Your Inner FOMO

Karin Hendrikse 2024-10-07T13:00:00+00:00

2025-06-25T15:04:30+00:00

In a fast-paced industry like tech, it can be hard to deal with the fear of missing out on important news. But, as many of us know, there’s an absolutely huge amount of information coming in daily, and finding the right time and balance to keep up can be difficult, if not stressful. A classic piece of technology like an RSS feed is a delightful way of taking back ownership of our own time. In this article, we will create a static Really Simple Syndication (RSS) reader that will bring you the latest curated news only once (yes: once) a day.

We’ll obviously work with RSS technology in the process, but we’re also going to combine it with some things that maybe you haven’t tried before, including Astro (the static site framework), TypeScript (for JavaScript goodies), a package called rss-parser (for connecting things together), as well as scheduled functions and build hooks provided by Netlify (although there are other services that do this).

I chose these technologies purely because I really, really enjoy them! There may be other solutions out there that are more performant, come with more features, or are simply more comfortable to you — and in those cases, I encourage you to swap in whatever you’d like. The most important thing is getting the end result!

The Plan

Here’s how this will go. Astro generates the website. I made the intentional decision to use a static site because I want the different RSS feeds to be fetched only once during build time, and that’s something we can control each time the site is “rebuilt” and redeployed with updates. That’s where Netlify’s scheduled functions come into play, as they let us trigger rebuilds automatically at specific times. There is no need to manually check for updates and deploy them! Cron jobs can just as readily do this if you prefer a server-side solution.

During the triggered rebuild, we’ll let the rss-parser package do exactly what it says it does: parse a list of RSS feeds that are contained in an array. We can then filter the fetched results so that we only get ones from the past day, week, and so on. Personally, I only render the news from the last seven days to prevent content overload. We’ll get there!

But first…

What Is RSS?

RSS is a web feed technology that you can feed into a reader or news aggregator. Because RSS is standardized, you know what to expect when it comes to the feed’s format. That means we have a ton of fun possibilities when it comes to handling the data that the feed provides. Most news websites have their own RSS feed that you can subscribe to (this is Smashing Magazine’s RSS feed: https://www.smashingmagazine.com/feed/). An RSS feed is capable of updating every time a site publishes new content, which means it can be a quick source of the latest news, but we can tailor that frequency as well.

RSS feeds are written in an Extensible Markup Language (XML) format and have specific elements that can be used within it. Instead of focusing too much on the technicalities here, I’ll give you a link to the RSS specification. Don’t worry; that page should be scannable enough for you to find the most pertinent information you need, like the kinds of elements that are supported and what they represent. For this tutorial, we’re only using the following elements: <title>, <link>, <description>, <item>, and <pubDate>. We’ll also let our RSS parser package do some of the work for us.

Creating The Static Site

We’ll start by creating our Astro site! In your terminal run pnpm create astro@latest. You can use any package manager you want — I’m simply trying out pnpm for myself.

After running the command, Astro’s chat-based helper, Houston, walks through some setup questions to get things started.

astro Launch sequence initiated.

dir Where should we create your new project?

./rss-buddy

tmpl How would you like to start your new project?

Include sample files

ts Do you plan to write TypeScript?

Yes

use How strict should TypeScript be?

Strict

deps Install dependencies?

Yes

git Initialize a new git repository?

Yes

I like to use Astro’s sample files so I can get started quickly, but we’re going to clean them up a bit in the process. Let’s clean up the src/pages/index.astro file by removing everything inside of the <main></main> tags. Then we’re good to go!

From there, we can spin things up by running pnpm start. Your terminal will tell you which localhost address you can find your site at.

Pulling Information From RSS feeds

The src/pages/index.astro file is where we will make an array of RSS feeds we want to follow. We will be using Astro’s template syntax, so between the two code fences (---), create an array of feedSources and add some feeds. If you need inspiration, you can copy this:

const feedSources = [

'https://www.smashingmagazine.com/feed/',

'https://developer.mozilla.org/en-US/blog/rss.xml',

// etc.

]

Now we’ll install the rss-parser package in our project by running pnpm install rss-parser. This package is a small library that turns the XML that we get from fetching an RSS feed into JavaScript objects. This makes it easy for us to read our RSS feeds and manipulate the data any way we want.

Once the package is installed, open the src/pages/index.astro file, and at the top, we’ll import the rss-parser and instantiate the Parser class.

import Parser from 'rss-parser';

const parser = new Parser();

We use this parser to read our RSS feeds and (surprise!) parse them to JavaScript. We’re going to be dealing with a list of promises here. Normally, I would probably use Promise.all(), but the thing is, this is supposed to be an uncomplicated experience. If one of the feeds doesn’t work for some reason, I’d prefer to simply ignore it.

Why? Well, because Promise.all() rejects everything even if only one of its promises is rejected. That might mean that if one feed doesn’t behave the way I’d expect it to, my entire page would be blank when I grab my hot beverage to read the news in the morning. I do not want to start my day confronted by an error.

Instead, I’ll opt to use Promise.allSettled(). This method will actually let all promises complete even if one of them fails. In our case, this means any feed that errors will just be ignored, which is perfect.
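To make the difference concrete, here is a minimal sketch that uses two stand-in promises instead of real feeds. The fakeFetch helper and the feed names are made up for illustration, and nothing here touches the network.

```typescript
// Hypothetical stand-in for a feed request: resolves or rejects on demand.
function fakeFetch(name: string, shouldFail: boolean): Promise<string> {
  return shouldFail
    ? Promise.reject(new Error(`${name} is unreachable`))
    : Promise.resolve(`${name} items`);
}

// Promise.all() rejects as a whole as soon as any single promise rejects.
async function withAll(): Promise<string[]> {
  try {
    return await Promise.all([
      fakeFetch('feed-a', false),
      fakeFetch('feed-b', true),
    ]);
  } catch {
    return []; // one broken feed cost us every result
  }
}

// Promise.allSettled() waits for every promise and reports each outcome.
async function withAllSettled(): Promise<string[]> {
  const results = await Promise.allSettled([
    fakeFetch('feed-a', false),
    fakeFetch('feed-b', true),
  ]);
  const fulfilled: string[] = [];
  for (const result of results) {
    if (result.status === 'fulfilled') fulfilled.push(result.value);
  }
  return fulfilled;
}
```

With these stand-ins, withAll() ends up with nothing to show, while withAllSettled() still returns the items from the feed that worked.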

Let’s add this to the src/pages/index.astro file:

interface FeedItem {

feed?: string;

title?: string;

link?: string;

date?: Date;

}

const feedItems: FeedItem[] = [];

await Promise.allSettled(

feedSources.map(async (source) => {

try {

const feed = await parser.parseURL(source);

feed.items.forEach((item) => {

const date = item.pubDate ? new Date(item.pubDate) : undefined;

feedItems.push({

feed: feed.title,

title: item.title,

link: item.link,

date,

});

});

} catch (error) {

console.error(`Error fetching feed from ${source}:`, error);

}

})

);

This creates an array named feedItems. For each URL in the feedSources array we created earlier, the rss-parser retrieves the items and, yes, parses them into JavaScript. Then, we push whatever data we want into the array! We’ll keep it simple for now and only store the following:

- The feed title,

- The title of the feed item,

- The link to the item,

- And the item’s published date.

The next step is to ensure that all items are sorted by date so we’ll truly get the “latest” news. Add this small piece of code to our work:

const sortedFeedItems = feedItems.sort((a, b) => (b.date ?? new Date()).getTime() - (a.date ?? new Date()).getTime());
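As a small sketch of what that comparator does, the snippet below sorts a few made-up items newest first. It swaps the new Date() fallback for an explicit fallback parameter (an assumption made here so the result is reproducible) and copies the array before sorting, since sort() reorders the original array in place.

```typescript
interface DatedItem {
  title: string;
  date?: Date;
}

// Newest first; items without a date are treated as if published at `fallback`.
function sortNewestFirst(items: DatedItem[], fallback: Date): DatedItem[] {
  return [...items].sort(
    (a, b) => (b.date ?? fallback).getTime() - (a.date ?? fallback).getTime()
  );
}

// Made-up sample data for illustration.
const sample: DatedItem[] = [
  { title: 'older', date: new Date('2024-10-01T00:00:00Z') },
  { title: 'undated' },
  { title: 'newer', date: new Date('2024-10-05T00:00:00Z') },
];

// The fallback is later than every dated item, so the undated item sorts first.
const sorted = sortNewestFirst(sample, new Date('2024-10-07T00:00:00Z'));
console.log(sorted.map((item) => item.title)); // order: undated, newer, older
```

Note that an item without a date floats to the top with this kind of fallback, which is worth keeping in mind if a feed omits pubDate.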

Oh, and… remember when I said I didn’t want this RSS reader to render anything older than seven days? Let’s tackle that right now since we’re already in this code.

We’ll make a new variable called sevenDaysAgo and assign it a date. We’ll then set that date to seven days ago and use that logic before we add a new item to our feedItems array.
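The cutoff check can also be sketched as a small standalone function. This is a slight variation, not the article’s exact code: isWithinLastDays is a hypothetical helper that counts back in milliseconds and takes the current time as a parameter so it is easy to test.

```typescript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// True when `date` is no older than `days` days, counted back from `now`.
function isWithinLastDays(date: Date | undefined, days: number, now: Date): boolean {
  // A missing or unparseable pubDate never passes the filter.
  if (!date || Number.isNaN(date.getTime())) return false;
  const cutoff = now.getTime() - days * MS_PER_DAY;
  return date.getTime() >= cutoff;
}

// Fixed "now" so the example is reproducible.
const referenceNow = new Date('2024-10-07T13:00:00Z');
console.log(isWithinLastDays(new Date('2024-10-03T09:00:00Z'), 7, referenceNow)); // true
console.log(isWithinLastDays(new Date('2024-09-20T09:00:00Z'), 7, referenceNow)); // false
console.log(isWithinLastDays(undefined, 7, referenceNow)); // false
```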

This is what the src/pages/index.astro file should now look like at this point:

---

import Layout from '../layouts/Layout.astro';

import Parser from 'rss-parser';

const parser = new Parser();

const sevenDaysAgo = new Date();

sevenDaysAgo.setDate(sevenDaysAgo.getDate() - 7);

const feedSources = [

'https://www.smashingmagazine.com/feed/',

'https://developer.mozilla.org/en-US/blog/rss.xml',

]

interface FeedItem {

feed?: string;

title?: string;

link?: string;

date?: Date;

}

const feedItems: FeedItem[] = [];

await Promise.allSettled(

feedSources.map(async (source) => {

try {

const feed = await parser.parseURL(source);

feed.items.forEach((item) => {

const date = item.pubDate ? new Date(item.pubDate) : undefined;

if (date && date >= sevenDaysAgo) {

feedItems.push({

feed: feed.title,

title: item.title,

link: item.link,

date,

});

}

});

} catch (error) {

console.error(`Error fetching feed from ${source}:`, error);

}

})

);

const sortedFeedItems = feedItems.sort((a, b) => (b.date ?? new Date()).getTime() - (a.date ?? new Date()).getTime());

---

<Layout title="Welcome to Astro.">

<main>

</main>

</Layout>

Rendering XML Data

It’s time to show our news articles on the Astro site! To keep this simple, we’ll format the items in an unordered list rather than some other fancy layout.

All we need to do is update the <Layout> element in the file with the parsed feed data sprinkled in for a feed item’s title, URL, and publish date.

<Layout title="Welcome to Astro.">

<main>

<ul>

{sortedFeedItems.map((item) => (

<li>

<a href={item.link}>{item.title}</a>

<p>{item.feed}</p>

<p>{item.date}</p>

</li>

))}

</ul>

</main>

</Layout>

Go ahead and run pnpm start from the terminal. The page should display an unordered list of feed items. Of course, nothing is styled at the moment, but luckily for you, you can make it look exactly like you want with CSS!
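The template prints item.date as is, which may come out as a long, machine-style date string. A minimal sketch of a formatting helper follows, assuming an English locale and UTC; formatItemDate is a hypothetical name, and you could call it in the template as {formatItemDate(item.date)}.

```typescript
// Explicit locale and time zone, so the output does not depend on the build machine.
const dateFormatter = new Intl.DateTimeFormat('en-US', {
  year: 'numeric',
  month: 'short',
  day: 'numeric',
  timeZone: 'UTC',
});

// Returns a readable date, or an empty string for items without a usable date.
function formatItemDate(date?: Date): string {
  if (!date || Number.isNaN(date.getTime())) return '';
  return dateFormatter.format(date);
}

console.log(formatItemDate(new Date('2024-10-07T13:00:00Z')));
```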

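And remember that there are even more fields available for each item if you want to display more information. If you log the items inside the try block, right after the feed has been parsed, you’ll see all of the fields you have at your disposal. Because the page is rendered at build time, the output appears in your terminal rather than in the browser’s DevTools console:

feed.items.forEach((item) => console.log(item));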

Scheduling Daily Static Site Builds

We’re nearly done! The feeds are being fetched, and they are returning data back to us in JavaScript for use in our Astro page template. Since feeds are updated whenever new content is published, we need a way to fetch the latest items from them.

We want to avoid doing any of this manually. So, let’s set this site up on Netlify to gain access to their scheduled functions that trigger a rebuild and their build hooks that do the building. Again, other services do this, and you’re welcome to roll this work with another provider; I’m just partial to Netlify since I work there. In any case, you can follow Netlify’s documentation for setting up a new site.

Once your site is hosted and live, you are ready to schedule your rebuilds. A build hook gives you a URL to use to trigger the new build, looking something like this:

https://api.netlify.com/build_hooks/your-build-hook-id

Let’s trigger builds every day at midnight. We’ll use Netlify’s scheduled functions. That’s really why I’m using Netlify to host this in the first place. Having them at the ready via the host greatly simplifies things since there’s no server work or complicated configurations to get this going. Set it and forget it!

We’ll install @netlify/functions (instructions) to the project and then create the following file in the project’s root directory: netlify/functions/deploy.ts.

This is what we want to add to that file:

// netlify/functions/deploy.ts

import type { Config } from '@netlify/functions';

const BUILD_HOOK =

'https://api.netlify.com/build_hooks/your-build-hook-id'; // replace me!

export default async (req: Request) => {

await fetch(BUILD_HOOK, {

method: 'POST',

})

};

export const config: Config = {

schedule: '0 0 * * *',

};

If you commit your code and push it, your site should re-deploy automatically. From that point on, it follows a schedule that rebuilds the site every day at midnight (the cron expression is evaluated in UTC), ready for you to take your morning brew and catch up on everything that you think is important.
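The function above posts to the build hook without looking at the response. If you would rather have a failed trigger show up in the function logs, a hedged sketch could look like this. The triggerBuild helper and the PostFn type are assumptions made for illustration; the fetch-like function is passed in so the logic can be tried without calling Netlify.

```typescript
// Minimal shape of what this sketch needs from a fetch-like function (an assumption).
type PostFn = (
  url: string,
  init: { method: 'POST' }
) => Promise<{ ok: boolean; status: number }>;

// Posts to the build hook and fails loudly when the response is not a success.
async function triggerBuild(hookUrl: string, post: PostFn): Promise<void> {
  const response = await post(hookUrl, { method: 'POST' });
  if (!response.ok) {
    throw new Error(`Build hook responded with status ${response.status}`);
  }
}
```

Inside the scheduled function, the call would then be await triggerBuild(BUILD_HOOK, fetch), with the global fetch passed as the second argument.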

(gg, yk)

How A Bottom-Up Design Approach Enhances Site Accessibility

Eleanor Hecks 2024-10-04T09:00:00+00:00

2025-06-25T15:04:30+00:00

Accessibility is key in modern web design. A site that doesn’t consider how its user experience may differ for various audiences — especially those with disabilities — will fail to engage and serve everyone equally. One of the best ways to prevent this is to approach your site from a bottom-up perspective.

Understanding Bottom-Up Design

Conventional, top-down design approaches start with the big picture before breaking these goals and concepts into smaller details. Bottom-up philosophies, by contrast, consider the minute details first, eventually achieving the broader goal piece by piece.

This alternative way of thinking is important for accessibility in general because it reflects how neurodivergent people commonly think. While non-autistic people tend to think from a top-down perspective, those with autism often employ a bottom-up way of thinking.

Of course, there is considerable variation, and researchers have identified at least three specialist thinking types within the autism spectrum:

- Visual thinkers who think in images;

- Pattern thinkers who think of concepts in terms of patterns and relationships;

- Verbal thinkers who think only in word detail.

Still, research shows that people with autism and ADHD show a bias toward bottom-up thinking rather than the top-down approach you often see in neurotypical users. Consequently, a top-down strategy means you may miss details your audience may notice, and your site may not feel easily usable for all users.

As a real-world example, consider the task of writing an essay. Many students are instructed to start an essay assignment by thinking about the main point they want to convey and then create an outline with points that support the main argument. This is top-down thinking — starting with the big picture of the topic and then gradually breaking down the topic into points and then later into words that articulate these points.

In contrast, someone who uses a bottom-up thinking approach might start an essay with no outline but rather just by freely jotting down every idea that comes to mind as it comes to mind — perhaps starting with one particular idea or example that the writer finds interesting and wants to explore further and branching off from there. Then, once all the ideas have been written out, the writer goes back to group related ideas together and arrange them into a logical outline. This writer starts with the small details of the essay and then works these details into the big picture of the final form.

In web design, in particular, a bottom-up approach means starting with the specifics of the user experience instead of the desired effect. You may determine a readable layout for a single blog post, then ask how that page relates to others and slowly build on these decisions until you have several well-organized website categories.

You may even get more granular. Say you start your site design by placing a menu at the bottom of a mobile site to make it easier to tap with one hand, improving ease of use. Then, you build a drop-down menu around that choice — placing the most popular or needed options at the bottom instead of the top for easy tapping. From there, you may have to rethink larger-scale layouts to work around those interactive elements being lower on the screen, slowly addressing larger categories until you have a finished site design.

In either case, the idea of bottom-up design is to begin with the most specific problems someone might have. You then address them in sequence instead of determining the big picture first.

Benefits Of A Bottom-Up Approach For Accessible Design

While neither bottom-up nor top-down approaches dominate the industry, some web engineers prefer the bottom-up approach due to the various accessibility benefits this process provides.

Putting User Needs First

The biggest benefit of bottom-up methods is that they prioritize the user’s needs.

Top-down approaches seem organized, but they often result in a site that reflects the designer’s choices and beliefs more than it serves your audience.

Consider some of the complaints that social media users have made over the years related to usability and accessibility for the everyday user. For example, many users complain that their Facebook feed will randomly refresh as they scroll for the sake of providing users with the most up-to-date content. However, the feature makes it virtually impossible to get back to a post a user viewed that they didn’t think to save. Likewise, TikTok’s watch history feature has come and gone over the years and still today is difficult for many users to find without viewing an outside tutorial on the subject.

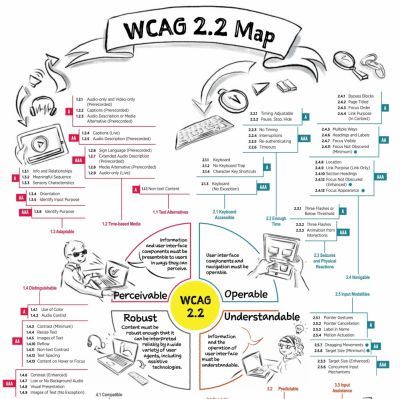

This is a common problem: 95.9% of the largest one million homepages have Web Content Accessibility Guidelines (WCAG) errors. While a bottom-up alternative doesn’t mean you won’t make any mistakes, it may make them less likely, as bottom-up thinking often improves your awareness of new stimuli so you can catch things you’d otherwise overlook. It’s easier to meet users’ needs when you build your entire site around their experience instead of looking at UX as an afterthought.

Consider this example from Berkshire Hathaway, a multi-billion-dollar holding company. The overall design philosophy is understandable: It’s simple and direct, choosing to focus on information instead of fancy aesthetics that may not suit the company image. However, you could argue it loses itself in this big picture.

While it is simple, the lack of menus or color contrast and the small font make it harder to read and a little overwhelming. This confusion can counteract any accessibility benefits of its simplicity.

Alternatively, even a simple website redesign could include intuitive menus, additional contrast, and accessible font for easy navigation across the site.

The homepage for U.K. charity Scope offers a better example of web design centered around users’ needs. Concise, clear menus line the top of the page to aid quicker, easier navigation. The color scheme is simple enough to avoid confusion but provides enough contrast to make everything easy to read — something the sans-serif font further helps.

Ensuring Accessibility From The Start

A top-down method also makes catering to a diverse audience difficult because you may need to shoehorn features into an existing design.

For example, say a local government agency creates a website focused on providing information and services to a general audience of residents. The site originally features high-resolution images, bright colors, and interactive charts.

However, they realize the images are not accessible to people navigating the site with screen readers, while multiple layers of submenus are difficult for keyboard-only users. Further, the bright colors make it hard for visually impaired users to read the site’s information.

The agency, realizing these accessibility concerns, adds captions to each image. However, the captions disrupt the originally intended visual aesthetics and user flow. Further, adjusting the bright colors would involve completely rethinking the site’s entire color palette. If these considerations had been made before the site was built, the site build could have specifically accommodated these elements while still creating an aesthetically pleasing and user-friendly result.

Alternatively, a site initially built with high contrast, a calm color scheme, clear typography, simple menus, and reduced imagery would make this site much more accessible to a wide user base from the get-go.

As a real-world example, consider the Awwwards website. There are plenty of menus to condense information and ease navigation without overloading the screen — a solid accessibility choice. However, there does not seem to be consistent thought in these menus’ placement or organization.

There are far too many menus; some are at the top while others are at the bottom, and a scrolling top bar adds further distractions. It seems like Awwwards may have added additional menus as an afterthought to improve navigation. This leads to inconsistencies and crowding because they did not begin with this thought.

In contrast, bottom-up alternatives address usability issues from the beginning, which results in a more functional, accessible website.

Redesigning a system to address a usability issue it didn’t originally make room for is challenging. It can lead to errors like broken links and other unintended consequences that may hinder access for other visitors. Some sites have even claimed to lose 90% of their traffic after a redesign. While bottom-up approaches won’t eliminate those possibilities, they make them less likely by centering everything around usage from the start.

The website for the Vasa Museum in Stockholm, Sweden, showcases a more cohesive approach to ensuring accessibility. Like Awwwards, it uses menus to aid navigation and organization, but there seems to be more forethought into these features. All menus are at the top, and there are fewer of them, resulting in less clutter and a faster way to find what you’re looking for. The overall design complements this by keeping things simple and neat so that the menus stand out.

Increasing Awareness

Similarly, bottom-up design ensures you don’t miss as many accessibility concerns. When you start at the top, before determining what details fit within it, you may not consider all the factors that influence it. Beginning with the specifics instead makes it easier to spot and address problems you would’ve missed otherwise.

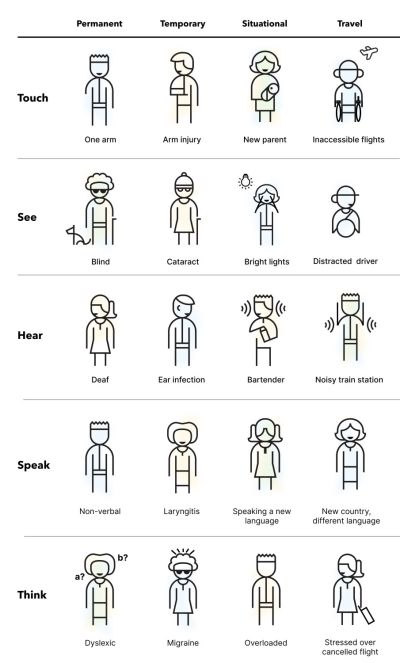

This awareness is particularly important for serving a diverse population. An estimated 16% of the global population — 1.6 billion people — have a significant disability. That means there’s a huge range of varying needs to account for, but most people lack firsthand experience living with these conditions. Consequently, it’s easy to miss things impacting others’ UX. You can overcome that knowledge gap by asking how everyone can use your site first.

Bottom-Up vs. Top-Down: Which Is Best for You?

As these benefits show, a bottom-up design philosophy can be helpful when building an accessible site. Still, top-down methods can be advantageous at times, too. Which is best depends on your situation.

Top-down approaches are a good way to ensure a consistent brand image, as you start with the overall idea and base future decisions on this concept. It also makes it easier to create a design hierarchy to facilitate decision-making within your team. When anyone has a question, they can turn to whoever is above them or refer to the broader goals. Such organization can also mean faster design processes.

Bottom-up methods, by contrast, are better when accessibility for a diverse audience is your main concern. It may be harder to keep everyone on the same overall design philosophy page, but it usually produces a more functional website. You can catch and solve problems early and pay great attention to detail. However, this can mean longer design cycles, which can incur extra costs.

It may come down to what your team is most comfortable with. People think and work differently, with some preferring a top-down approach while others find bottom-up more natural. Combining the two — starting with a top-down model before tackling updates from a bottom-up perspective — can be beneficial, too.

Strategies For Implementing A Bottom-Up Design Model

Should you decide a bottom-up design method is best for your goals, here are some ways you can embrace this philosophy.

Talk To Your Existing User Base

One of the most important factors in bottom-up web design is to center everything around your users. As a result, your existing user base — whether from a separate part of your business or another site you run — is the perfect place to start.

Survey customers and web visitors about their experience on your sites and others. Ask what pain points they have and what features they’d appreciate. Any commonalities between responses deserve attention. You can also turn to WCAG standards for inspiration on accessible functionality, but first-hand user feedback should form the core of your mission.

Look To Past Projects For Accessibility Gaps

Past sites and business projects can also reveal what specifics you should start with. Look for any accessibility gaps by combing through old customer feedback and update histories and using these sites yourself to find issues. Take note of any barriers or usability concerns to address in your next website.

Remember to document everything you find as you go. Up to 90% of organizations’ data is unstructured, making it difficult to analyze later. Reversing that trend by organizing your findings and recording your accessible design process will streamline future accessibility optimization efforts.

Divide Tasks But Communicate Often

Keep in mind that a bottom-up strategy can be time-consuming. One of the reasons why top-down alternatives are popular is because they’re efficient. You can overcome this gap by splitting tasks between smaller teams. However, these groups must communicate frequently to ensure separate design considerations work as a cohesive whole.

A DevOps approach is helpful here. DevOps has helped 49% of its adopters achieve a faster time to market, and 61% report higher-quality deliverables. It also includes space for both detailed work and team-wide meetings to keep everyone on track. Such benefits ensure you can remain productive in a bottom-up strategy.

Accessible Websites Need A Bottom-Up Design Approach

You can’t overstate the importance of accessible website design. By the same token, bottom-up philosophies are crucial in modern site-building. A detail-oriented approach makes it easier to serve a more diverse audience along several fronts. Making the most of this opportunity will both extend your reach to new niches and make the web a more equitable place.

The Web Accessibility Initiative’s WCAG standards are a good place to start. While these guidelines don’t necessarily describe how to apply a bottom-up approach, they do outline critical user needs and accessibility concerns your design should consider. The site also offers a free and comprehensive Digital Accessibility Foundations course for designers and developers.

Familiarizing yourself with these standards and best practices will make it easier to understand your audience before you begin designing your site. You can then build a more accessible platform from the ground up.

Additionally, the following are some valuable related reads that can act as inspiration in accessibility-centered and user-centric design.

- Inclusive Design for a Digital World: Designing with Accessibility in Mind by Regine M. Gilbert

- Practical Web Inclusion and Accessibility: A Comprehensive Guide to Access Needs by Ashley Firth

- Don’t Make Me Think, Revisited: A Common Sense Approach to Web Usability by Steve Krug

By employing bottom-up thinking as well as resources like these in your design approach, you can create websites that not only meet current accessibility standards but actively encourage site use among users of all backgrounds and abilities.

Further Reading On SmashingMag

- “Getting To The Bottom Of Minimum WCAG-Conformant Interactive Element Size,” Eric Bailey

- “How To Make A Strong Case For Accessibility,” Vitaly Friedman

- “A Guide To Accessible Form Validation,” Sandrina Pereira

- “Creating A High-Contrast Design System With CSS Custom Properties,” Brecht De Ruyte

(yk)

Generating Unique Random Numbers In JavaScript Using Sets

Amejimaobari Ollornwi 2024-08-26T15:00:00+00:00

2025-06-25T15:04:30+00:00

JavaScript comes with a lot of built-in functions that allow you to carry out so many different operations. One of these built-in functions is the Math.random() method, which generates a random floating-point number that can then be manipulated into integers.

However, if you wish to generate a series of unique random numbers and create more random effects in your code, you will need to come up with a custom solution for yourself because the Math.random() method on its own cannot do that for you.

In this article, we’re going to be learning how to circumvent this issue and generate a series of unique random numbers using the Set object in JavaScript, which we can then use to create more randomized effects in our code.

Note: This article assumes that you know how to generate random numbers in JavaScript, as well as how to work with sets and arrays.

Generating a Unique Series of Random Numbers

One of the ways to generate a unique series of random numbers in JavaScript is by using Set objects. The reason why we’re making use of sets is because the elements of a set are unique. We can iteratively generate and insert random integers into sets until we get the number of integers we want.

And since sets do not allow duplicate elements, they are going to serve as a filter to remove all of the duplicate numbers that are generated and inserted into them so that we get a set of unique integers.

Here’s how we are going to approach the work:

- Create a Set object.

- Define how many random numbers to produce and what range of numbers to use.

- Generate each random number and immediately insert the numbers into the Set until the Set is filled with a certain number of them.

The following is a quick example of how the code comes together:

function generateRandomNumbers(count, min, max) {

// 1: Create a `Set` object

let uniqueNumbers = new Set();

while (uniqueNumbers.size < count) {

// 3: Generate each random number and immediately insert it into the Set

uniqueNumbers.add(Math.floor(Math.random() * (max - min + 1)) + min);

}

// Return the unique numbers as an array

return Array.from(uniqueNumbers);

}

// 2: Define how many numbers to generate and the range to draw them from

console.log(generateRandomNumbers(5, 5, 10));

The code creates a new Set object and then generates and adds random numbers to the set until it contains the desired number of integers. We return an array because arrays are easier to work with than sets.

One thing to note, however, is that the number of integers you want to generate (represented by count in the code) must not exceed the number of integers in your range (represented by max - min + 1 in the code). Otherwise, the set can never reach the desired size, and the code will run forever. You can add an if statement to the code to ensure that this is always the case:

function generateRandomNumbers(count, min, max) {

// if statement checks that `count` does not exceed the size of the range

if (count > max - min + 1) {

return "count cannot be greater than the number of integers in the range";

} else {

let uniqueNumbers = new Set();

while (uniqueNumbers.size < count) {

uniqueNumbers.add(Math.floor(Math.random() * (max - min + 1)) + min);

}

return Array.from(uniqueNumbers);

}

}

console.log(generateRandomNumbers(5, 5, 10));
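Returning a string on failure means callers have to check the return type before treating the result as an array. One alternative, sketched here as an assumption rather than as part of the approach above, is to throw a RangeError when the request cannot be satisfied. The name generateUniqueIntegers is hypothetical:

```javascript
// Hypothetical variant: throws instead of returning an error string.
function generateUniqueIntegers(count, min, max) {
  const rangeSize = max - min + 1; // how many integers exist in [min, max]

  if (!Number.isInteger(count) || count < 0 || count > rangeSize) {
    throw new RangeError(`count must be an integer between 0 and ${rangeSize}`);
  }

  const uniqueNumbers = new Set();

  while (uniqueNumbers.size < count) {
    uniqueNumbers.add(Math.floor(Math.random() * rangeSize) + min);
  }

  return Array.from(uniqueNumbers);
}

const picks = generateUniqueIntegers(5, 5, 10);

console.log(picks.length); // → 5
```

With this shape, a call such as generateUniqueIntegers(7, 5, 10) fails loudly instead of looping forever or handing back a string.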

Using the Series of Unique Random Numbers as Array Indexes

It is one thing to generate a series of random numbers. It’s another thing to use them.

Being able to use a series of random numbers with arrays unlocks so many possibilities: you can use them in shuffling playlists in a music app, randomly sampling data for analysis, or, as I did, shuffling the tiles in a memory game.

Let’s take the code from the last example and work off of it to return random letters of the alphabet. First, we’ll construct an array of letters:

const englishAlphabets = [

'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'I', 'J', 'K', 'L', 'M',

'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z'

];

// rest of code

Then we map each random index to the letter at that position in the array:

const englishAlphabets = [

'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'I', 'J', 'K', 'L', 'M',

'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z'

];

// generateRandomNumbers()

const randomAlphabets = randomIndexes.map((index) => englishAlphabets[index]);

In the original code, the generateRandomNumbers() function is logged to the console. This time, we’ll construct a new variable that calls the function so it can be consumed by randomAlphabets:

const englishAlphabets = [

'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'I', 'J', 'K', 'L', 'M',

'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z'

];

// generateRandomNumbers()

const randomIndexes = generateRandomNumbers(5, 0, 25);

const randomAlphabets = randomIndexes.map((index) => englishAlphabets[index]);

Now we can log the output to the console like we did before to see the results:

const englishAlphabets = [

'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'I', 'J', 'K', 'L', 'M',

'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z'

];

// generateRandomNumbers()

const randomIndexes = generateRandomNumbers(5, 0, 25);

const randomAlphabets = randomIndexes.map((index) => englishAlphabets[index]);

console.log(randomAlphabets);

And, when we put the generateRandomNumbers() function definition back in, we get the final code:

const englishAlphabets = [

'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'I', 'J', 'K', 'L', 'M',

'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z'

];

function generateRandomNumbers(count, min, max) {

if (count > max - min + 1) {

return "count cannot be greater than the number of integers in the range";

} else {

let uniqueNumbers = new Set();

while (uniqueNumbers.size < count) {

uniqueNumbers.add(Math.floor(Math.random() * (max - min + 1)) + min);

}

return Array.from(uniqueNumbers);

}

}

const randomIndexes = generateRandomNumbers(5, 0, 25);

const randomAlphabets = randomIndexes.map((index) => englishAlphabets[index]);

console.log(randomAlphabets);

So, in this example, we created a new array of letters by randomly selecting some of the letters in our englishAlphabets array.

If you want to shuffle the elements of the englishAlphabets array instead, you can pass englishAlphabets.length as the count argument to the generateRandomNumbers function. This is what I mean:

generateRandomNumbers(englishAlphabets.length, 0, 25);
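Put together, a shuffle built this way might look like the following sketch. It is self-contained, so it repeats a compact version of the function under the hypothetical name uniqueRandomIndexes and uses a shorter array:

```javascript
const letters = ['A', 'B', 'C', 'D', 'E', 'F'];

// Compact version of the Set-based generator from this article.
function uniqueRandomIndexes(count, min, max) {
  const unique = new Set();

  while (unique.size < count) {
    unique.add(Math.floor(Math.random() * (max - min + 1)) + min);
  }

  return Array.from(unique);
}

// Asking for as many indexes as there are elements yields every index once,
// in random order, which amounts to a shuffle.
const shuffledLetters = uniqueRandomIndexes(letters.length, 0, letters.length - 1)
  .map((index) => letters[index]);

console.log(shuffledLetters.length); // → 6
```

Keep in mind that as count approaches the size of the range, more and more generated numbers are duplicates that get discarded, so for large arrays a dedicated shuffle such as Fisher-Yates may be a better fit.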

Wrapping Up

In this article, we’ve discussed how to create randomization in JavaScript by covering how to generate a series of unique random numbers, how to use these random numbers as indexes for arrays, and also some practical applications of randomization.

The best way to learn anything in software development is by consuming content and reinforcing whatever knowledge you’ve gotten from that content by practicing. So, don’t stop here. Run the examples in this tutorial (if you haven’t done so), play around with them, come up with your own unique solutions, and also don’t forget to share your good work. Ciao!

(yk)

Regexes Got Good: The History And Future Of Regular Expressions In JavaScript


Steven Levithan 2024-08-20T15:00:00+00:00

2025-06-25T15:04:30+00:00

Modern JavaScript regular expressions have come a long way compared to what you might be familiar with. Regexes can be an amazing tool for searching and replacing text, but they have a longstanding reputation (perhaps outdated, as I’ll show) for being difficult to write and understand.

This is especially true in JavaScript-land, where regexes languished for many years, underpowered compared to their more modern counterparts in PCRE, Perl, .NET, Java, Ruby, C++, and Python. Those days are over.

In this article, I’ll recount the history of improvements to JavaScript regexes (spoiler: ES2018 and ES2024 changed the game), show examples of modern regex features in action, introduce you to a lightweight JavaScript library that makes JavaScript stand alongside or surpass other modern regex flavors, and end with a preview of active proposals that will continue to improve regexes in future versions of JavaScript (with some of them already working in your browser today).

The History of Regular Expressions in JavaScript

ECMAScript 3, standardized in 1999, introduced Perl-inspired regular expressions to the JavaScript language. Although it got enough things right to make regexes pretty useful (and mostly compatible with other Perl-inspired flavors), there were some big omissions, even then. And while JavaScript waited 10 years for its next standardized version with ES5, other programming languages and regex implementations added useful new features that made their regexes more powerful and readable.

But that was then.

Did you know that nearly every new version of JavaScript has made at least minor improvements to regular expressions?

Let’s take a look at them.

Don’t worry if it’s hard to understand what some of the following features mean — we’ll look more closely at several of the key features afterward.

- ES5 (2009) fixed unintuitive behavior by creating a new object every time regex literals are evaluated and allowed regex literals to use unescaped forward slashes within character classes (

/[/]/). - ES6/ES2015 added two new regex flags:

y(sticky), which made it easier to use regexes in parsers, andu(unicode), which added several significant Unicode-related improvements along with strict errors. It also added theRegExp.prototype.flagsgetter, support for subclassingRegExp, and the ability to copy a regex while changing its flags. - ES2018 was the edition that finally made JavaScript regexes pretty good. It added the

s(dotAll) flag, lookbehind, named capture, and Unicode properties (viap{...}andP{...}, which require ES6’s flagu). All of these are extremely useful features, as we’ll see. - ES2020 added the string method

matchAll, which we’ll also see more of shortly. - ES2022 added flag

d(hasIndices), which provides start and end indices for matched substrings. - And finally, ES2024 added flag

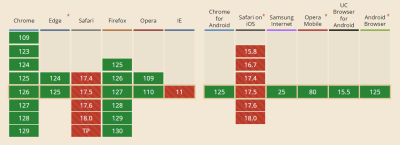

v(unicodeSets) as an upgrade to ES6’s flagu. Thevflag adds a set of multicharacter “properties of strings” top{...}, multicharacter elements within character classes viap{...}andq{...}, nested character classes, set subtraction[A--B]and intersection[A&&B], and different escaping rules within character classes. It also fixed case-insensitive matching for Unicode properties within negated sets[^...].

As for whether you can safely use these features in your code today, the answer is yes! The latest of these features, flag v, is supported in Node.js 20 and 2023-era browsers. The rest are supported in 2021-era browsers or earlier.

Each edition from ES2019 to ES2023 also added additional Unicode properties that can be used via \p{...} and \P{...}. And to be a completionist, ES2021 added string method replaceAll, although, when given a regex, the only difference from ES3’s replace is that it throws if not using flag g.

Aside: What Makes a Regex Flavor Good?

With all of these changes, how do JavaScript regular expressions now stack up against other flavors? There are multiple ways to think about this, but here are a few key aspects:

- Performance. This is an important aspect but probably not the main one since mature regex implementations are generally pretty fast. JavaScript is strong on regex performance (at least considering V8’s Irregexp engine, used by Node.js, Chromium-based browsers, and even Firefox; and JavaScriptCore, used by Safari), but it uses a backtracking engine that is missing any syntax for backtracking control, a major limitation that makes ReDoS vulnerability more common.

- Support for advanced features that handle common or important use cases. Here, JavaScript stepped up its game with ES2018 and ES2024. JavaScript is now best in class for some features like lookbehind (with its infinite-length support) and Unicode properties (with multicharacter “properties of strings,” set subtraction and intersection, and script extensions). These features are either not supported or not as robust in many other flavors.

- Ability to write readable and maintainable patterns. Here, native JavaScript has long been the worst of the major flavors since it lacks the x (“extended”) flag that allows insignificant whitespace and comments. Additionally, it lacks regex subroutines and subroutine definition groups (from PCRE and Perl), a powerful set of features that enable writing grammatical regexes that build up complex patterns via composition.

So, it’s a bit of a mixed bag.

JavaScript regexes have become exceptionally powerful, but they’re still missing key features that could make regexes safer, more readable, and more maintainable (all of which hold some people back from using this power).


The good news is that all of these holes can be filled by a JavaScript library, which we’ll see later in this article.

Using JavaScript’s Modern Regex Features

Let’s look at a few of the more useful modern regex features that you might be less familiar with. You should know in advance that this is a moderately advanced guide. If you’re relatively new to regex, here are some excellent tutorials you might want to start with:

- RegexLearn and RegexOne are interactive tutorials that include practice problems.

- JavaScript.info’s regular expressions chapter is a detailed and JavaScript-specific guide.

- Demystifying Regular Expressions (video) is an excellent presentation for beginners by Lea Verou at HolyJS 2017.

- Learn Regular Expressions In 20 Minutes (video) is a live syntax walkthrough in a regex tester.

Named Capture

Often, you want to do more than just check whether a regex matches — you want to extract substrings from the match and do something with them in your code. Named capturing groups allow you to do this in a way that makes your regexes and code more readable and self-documenting.

The following example matches a record with two date fields and captures the values:

const record = 'Admitted: 2024-01-01\nReleased: 2024-01-03';

const re = /^Admitted: (?<admitted>\d{4}-\d{2}-\d{2})\nReleased: (?<released>\d{4}-\d{2}-\d{2})$/;

const match = record.match(re);

console.log(match.groups);

/* → {

admitted: '2024-01-01',

released: '2024-01-03'

} */

Don’t worry — although this regex might be challenging to understand, later, we’ll look at a way to make it much more readable. The key things here are that named capturing groups use the syntax (?<name>...), and their results are stored on the groups object of matches.

You can also use named backreferences to rematch whatever a named capturing group matched via \k<name>, and you can use the values within search and replace as follows:

// Change 'FirstName LastName' to 'LastName, FirstName'

const name = 'Shaquille Oatmeal';

name.replace(/(?<first>\w+) (?<last>\w+)/, '$<last>, $<first>');

// → 'Oatmeal, Shaquille'
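The replacement above uses the captured values, but not a named backreference inside the pattern itself. As a small sketch (the sample sentence is made up for illustration), \k<word> can be used to find accidentally doubled words:

```javascript
// \k<word> must match exactly the same text that (?<word>...) captured.
const doubledWord = /\b(?<word>\w+) \k<word>\b/g;

const text = 'This is is a test of the the parser';

console.log(text.replace(doubledWord, '$<word>'));
// → 'This is a test of the parser'
```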

For advanced regexers who want to use named backreferences within a replacement callback function, the groups object is provided as the last argument. Here’s a fancy example:

function fahrenheitToCelsius(str) {

const re = /(?<degrees>-?\d+(\.\d+)?)F\b/g;

return str.replace(re, (...args) => {

const groups = args.at(-1);

return Math.round((groups.degrees - 32) * 5/9) + 'C';

});

}

fahrenheitToCelsius('98.6F');

// → '37C'

fahrenheitToCelsius('May 9 high is 40F and low is 21F');

// → 'May 9 high is 4C and low is -6C'

Lookbehind

Lookbehind (introduced in ES2018) is the complement to lookahead, which has always been supported by JavaScript regexes. Lookahead and lookbehind are assertions (similar to ^ for the start of a string or \b for word boundaries) that don’t consume any characters as part of the match. Lookbehinds succeed or fail based on whether their subpattern can be found immediately before the current match position.

For example, the following regex uses a lookbehind (?<=...) to match the word “cat” (only the word “cat”) if it’s preceded by “fat ”:

const re = /(?<=fat )cat/g;

'cat, fat cat, brat cat'.replace(re, 'pigeon');

// → 'cat, fat pigeon, brat cat'

You can also use negative lookbehind — written as (?<!...) — to invert the assertion. That would make the regex match any instance of “cat” that’s not preceded by “fat ”.

const re = /(?<!fat )cat/g;

'cat, fat cat, brat cat'.replace(re, 'pigeon');

// → 'pigeon, fat cat, brat pigeon'

JavaScript’s implementation of lookbehind is one of the very best (matched only by .NET). Whereas other regex flavors have inconsistent and complex rules for when and whether they allow variable-length patterns inside lookbehind, JavaScript allows you to look behind for any subpattern.
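To make the variable-length point concrete, here is a minimal sketch with a lookbehind that contains the quantifier \d+, so its length is not fixed. The sample string and variable names are invented for this example:

```javascript
// Match the two cent digits, but only when preceded by "$", some digits, and a dot.
const centsRe = /(?<=\$\d+\.)\d{2}/g;

const prices = 'Items: $5.25, $120.99, and 3.50 (no currency)';

console.log(prices.match(centsRe));
// → ['25', '99']
```

The lookbehind has to span "$5." in one place and "$120." in another, which is exactly the kind of pattern that many other flavors reject.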

The matchAll Method

JavaScript’s String.prototype.matchAll was added in ES2020 and makes it easier to operate on regex matches in a loop when you need extended match details. Although other solutions were possible before, matchAll is often easier, and it avoids gotchas, such as the need to guard against infinite loops when looping over the results of regexes that might return zero-length matches.

Since matchAll returns an iterator (rather than an array), it’s easy to use it in a for...of loop.

const re = /(?<char1>\w)(?<char2>\w)/g;

for (const match of str.matchAll(re)) {

const {char1, char2} = match.groups;

// Print each complete match and matched subpatterns

console.log(`Matched "${match[0]}" with "${char1}" and "${char2}"`);

}

Note: matchAll requires its regexes to use flag g (global). Also, as with other iterators, you can get all of its results as an array using Array.from or array spreading.

const matches = [...str.matchAll(/./g)];
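The snippets above assume a str variable that is defined elsewhere. For a version you can run as-is, here is a small sketch with a made-up input string that also collects the index of each match, one of the extended match details matchAll exposes:

```javascript
const str = 'ab cd';
const pairRe = /(?<char1>\w)(?<char2>\w)/g;

// Array.from accepts the iterator plus a mapping function.
const pairs = Array.from(str.matchAll(pairRe), (match) => ({
  text: match[0],
  index: match.index,
  first: match.groups.char1,
  second: match.groups.char2,
}));

console.log(pairs);
/* → [
  { text: 'ab', index: 0, first: 'a', second: 'b' },
  { text: 'cd', index: 3, first: 'c', second: 'd' }
] */
```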

Unicode Properties

Unicode properties (added in ES2018) give you powerful control over multilingual text, using the syntax \p{...} and its negated version \P{...}. There are hundreds of different properties you can match, which cover a wide variety of Unicode categories, scripts, script extensions, and binary properties.

Note: For more details, check out the documentation on MDN.

Unicode properties require using the flag u (unicode) or v (unicodeSets).
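As a quick sketch of what this looks like in practice, the following uses a general category (Lu, uppercase letter), a script (Greek), and the negated form \P{L} (anything that is not a letter). The test strings are written with escapes so the example does not depend on how your editor encodes accented characters:

```javascript
const startsWithUppercase = /^\p{Lu}/u;
const greekOnly = /^\p{Script=Greek}+$/u;
const nonLetters = /\P{L}+/gu;

// '\u00C9clair' is 'Éclair'
console.log(startsWithUppercase.test('\u00C9clair')); // → true

// '\u03B1\u03B2\u03B3' is 'αβγ'
console.log(greekOnly.test('\u03B1\u03B2\u03B3')); // → true
console.log(greekOnly.test('abc')); // → false

// Collapse every run of non-letters into a single space
const lettersOnly = 'na\u00EFve caf\u00E9, 2024!'.replace(nonLetters, ' ').trim();
console.log(lettersOnly === 'na\u00EFve caf\u00E9'); // → true
```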

Flag v

Flag v (unicodeSets) was added in ES2024 and is an upgrade to flag u — you can’t use both at the same time. It’s a best practice to always use one of these flags to avoid silently introducing bugs via the default Unicode-unaware mode. The decision on which to use is fairly straightforward. If you’re okay with only supporting environments with flag v (Node.js 20 and 2023-era browsers), then use flag v; otherwise, use flag u.

Flag v adds support for several new regex features, with the coolest probably being set subtraction and intersection. This allows using A--B (within character classes) to match strings in A but not in B or using A&&B to match strings in both A and B. For example:

// Matches all Greek symbols except the letter 'π'

/[\p{Script_Extensions=Greek}--π]/v

// Matches only Greek letters

/[\p{Script_Extensions=Greek}&&\p{Letter}]/v

For more details about flag v, including its other new features, check out this explainer from the Google Chrome team.
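Because a regex literal with flag v is a syntax error in older environments, one cautious way to experiment is to build the regex with the RegExp constructor and fall back gracefully. This is only a sketch; tryVFlag is a hypothetical helper name, and the catch branch also swallows genuinely invalid patterns, which you may not want in real code:

```javascript
// Returns a regex using flag v, or null if the flag (or the pattern) is rejected.
function tryVFlag(source) {
  try {
    return new RegExp(source, 'v');
  } catch {
    return null;
  }
}

// Greek characters except the letter pi (U+03C0), using set subtraction.
const greekExceptPi = tryVFlag('^[\\p{Script_Extensions=Greek}--\\u03C0]$');

if (greekExceptPi) {
  console.log(greekExceptPi.test('\u03B1')); // alpha → true
  console.log(greekExceptPi.test('\u03C0')); // pi → false
} else {
  console.log('Flag v is not supported in this environment');
}
```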

A Word on Matching Emoji

Emoji are 🤩🔥😎👌, but how emoji get encoded in text is complicated. If you’re trying to match them with a regex, it’s important to be aware that a single emoji can be composed of one or many individual Unicode code points. Many people (and libraries!) who roll their own emoji regexes miss this point (or implement it poorly) and end up with bugs.

The following details for the emoji “👩🏻🏫” (Woman Teacher: Light Skin Tone) show just how complicated emoji can be:

// Code unit length

'👩🏻🏫'.length;

// → 7

// Each astral code point (above \uFFFF) is divided into high and low surrogates

// Code point length

[...'👩🏻🏫'].length;

// → 4

// These four code points are: \u{1F469} \u{1F3FB} \u{200D} \u{1F3EB}

// \u{1F469} combined with \u{1F3FB} is '👩🏻'

// \u{200D} is a Zero-Width Joiner

// \u{1F3EB} is '🏫'

// Grapheme cluster length (user-perceived characters)

[...new Intl.Segmenter().segment('👩🏻🏫')].length;

// → 1

Fortunately, JavaScript added an easy way to match any individual, complete emoji via \p{RGI_Emoji}. Since this is a fancy “property of strings” that can match more than one code point at a time, it requires ES2024’s flag v.

If you want to match emojis in environments without v support, check out the excellent libraries emoji-regex and emoji-regex-xs.

Making Your Regexes More Readable, Maintainable, and Resilient

Despite the improvements to regex features over the years, native JavaScript regexes of sufficient complexity can still be outrageously hard to read and maintain.

Regular Expressions are SO EASY!!!! pic.twitter.com/q4GSpbJRbZ

— Garabato Kid (@garabatokid) July 5, 2019

ES2018’s named capture was a great addition that made regexes more self-documenting, and ES6’s String.raw tag allows you to avoid escaping all your backslashes when using the RegExp constructor. But for the most part, that’s it in terms of readability.
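As a brief illustration of the String.raw point, the two constructor calls below produce the same regex; only the second avoids doubling every backslash. The variable names are arbitrary:

```javascript
// In a normal string, each regex backslash has to be escaped.
const escapedTwice = new RegExp('^\\d{4}-\\d{2}-\\d{2}$');

// With String.raw, backslashes are passed through untouched.
const withRaw = new RegExp(String.raw`^\d{4}-\d{2}-\d{2}$`);

console.log(escapedTwice.source === withRaw.source); // → true
console.log(withRaw.test('2024-01-03')); // → true
```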

However, there’s a lightweight and high-performance JavaScript library named regex (by yours truly) that makes regexes dramatically more readable. It does this by adding key missing features from Perl-Compatible Regular Expressions (PCRE) and outputting native JavaScript regexes. You can also use it as a Babel plugin, which means that regex calls are transpiled at build time, so you get a better developer experience without users paying any runtime cost.

PCRE is a popular C library used by PHP for its regex support, and it’s available in countless other programming languages and tools.

Let’s briefly look at some of the ways the regex library, which provides a template tag named regex, can help you write complex regexes that are actually understandable and maintainable by mortals. Note that all of the new syntax described below works identically in PCRE.

Insignificant Whitespace and Comments

By default, regex allows you to freely add whitespace and line comments (starting with #) to your regexes for readability.

import {regex} from 'regex';

const date = regex`

# Match a date in YYYY-MM-DD format

(?<year> \d{4}) - # Year part

(?<month> \d{2}) - # Month part

(?<day> \d{2}) # Day part

`;

This is equivalent to using PCRE’s xx flag.

Subroutines and Subroutine Definition Groups

Subroutines are written as \g<name> (where name refers to a named group), and they treat the referenced group as an independent subpattern that they try to match at the current position. This enables subpattern composition and reuse, which improves readability and maintainability.

For example, the following regex matches an IPv4 address such as “192.168.12.123”:

import {regex} from 'regex';

const ipv4 = regex`\b

(?<byte> 25[0-5] | 2[0-4]\d | 1\d\d | [1-9]?\d)

# Match the remaining 3 dot-separated bytes

(\. \g<byte>){3}

\b`;

You can take this even further by defining subpatterns for use by reference only via subroutine definition groups. Here’s an example that improves the regex for admittance records that we saw earlier in this article:

const record = 'Admitted: 2024-01-01\nReleased: 2024-01-03';

const re = regex`

^ Admitted:\ (?<admitted> \g<date>) \n

Released:\ (?<released> \g<date>) $

(?(DEFINE)

(?<date> \g<year>-\g<month>-\g<day>)

(?<year> \d{4})

(?<month> \d{2})

(?<day> \d{2})

)

`;

const match = record.match(re);

console.log(match.groups);

/* → {

admitted: '2024-01-01',

released: '2024-01-03'

} */

A Modern Regex Baseline

regex includes the v flag by default, so you never forget to turn it on. And in environments without native v, it automatically switches to flag u while applying v’s escaping rules, so your regexes are forward and backward-compatible.

It also implicitly enables the emulated flags x (insignificant whitespace and comments) and n (“named capture only” mode) by default, so you don’t have to continually opt into their superior modes. And since it’s a raw string template tag, you don’t have to escape your backslashes like with the RegExp constructor.

Atomic Groups and Possessive Quantifiers Can Prevent Catastrophic Backtracking

Atomic groups and possessive quantifiers are another powerful set of features added by the regex library. Although they’re primarily about performance and resilience against catastrophic backtracking (also known as ReDoS or “regular expression denial of service,” a serious issue where certain regexes can take forever when searching particular, not-quite-matching strings), they can also help with readability by allowing you to write simpler patterns.

Note: You can learn more in the regex documentation.
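To get a feel for what "atomic" means without any library, one well-known native workaround is to capture inside a lookahead and then consume the capture with a backreference. JavaScript lookaheads do not backtrack once they succeed, so the captured text cannot be given back. This is a sketch of that emulation technique in plain JavaScript, not the regex library's own syntax (in PCRE, the equivalent would be written with an atomic group, (?>a+), or a possessive quantifier, a++):

```javascript
// Ordinary greedy quantifier: `a+` can give back one 'a' so that `ab` still matches.
const backtracking = /^a+ab$/;

// Emulated atomic group: the lookahead captures `a+` once, and \1 consumes
// exactly that text with no option to give any of it back.
const atomicLike = /^(?=(a+))\1ab$/;

console.log(backtracking.test('aaab')); // → true
console.log(atomicLike.test('aaab')); // → false
```

The second regex fails because all three "a" characters are consumed up front, leaving only "b" where "ab" is required. Ruling out those retries is the same property that keeps certain patterns from backtracking catastrophically.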

What’s Next? Upcoming JavaScript Regex Improvements

There are a variety of active proposals for improving regexes in JavaScript. Below, we’ll look at the three that are well on their way to being included in future editions of the language.

Duplicate Named Capturing Groups

This is a Stage 3 (nearly finalized) proposal. Even better is that, as of recently, it works in all major browsers.

When named capturing was first introduced, it required that all (?<name>...) captures use unique names. However, there are cases when you have multiple alternate paths through a regex, and it would simplify your code to reuse the same group names in each alternative.

For example:

/(?<year>\d{4})-\d\d|\d\d-(?<year>\d{4})/

This proposal enables exactly this, preventing a “duplicate capture group name” error with this example. Note that names must still be unique within each alternative path.

Pattern Modifiers (aka Flag Groups)

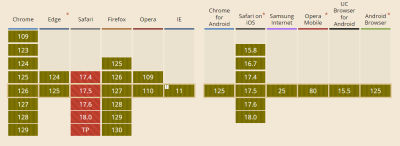

This is another Stage 3 proposal. It’s already supported in Chrome/Edge 125 and Opera 111, and it’s coming soon for Firefox. No word yet on Safari.

Pattern modifiers use (?ims:...), (?-ims:...), or (?im-s:...) to turn the flags i, m, and s on or off for only certain parts of a regex.

For example:

/hello-(?i:world)/

// Matches 'hello-WORLD' but not 'HELLO-WORLD'

Escape Regex Special Characters with RegExp.escape

This proposal recently reached Stage 3 and has been a long time coming. It isn’t yet supported in any major browsers. The proposal does what it says on the tin, providing the function RegExp.escape(str), which returns the string with all regex special characters escaped so you can match them literally.

If you need this functionality today, the most widely-used package (with more than 500 million monthly npm downloads) is escape-string-regexp, an ultra-lightweight, single-purpose utility that does minimal escaping. That’s great for most cases, but if you need assurance that your escaped string can safely be used at any arbitrary position within a regex, escape-string-regexp recommends the regex library that we’ve already looked at in this article. The regex library uses interpolation to escape embedded strings in a context-aware way.
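For a sense of what minimal escaping involves, here is a hand-rolled sketch. The name escapeForRegex is hypothetical, and this is neither the proposal's implementation nor any package's API; it simply backslash-escapes the common special characters and encodes hyphens so they stay literal inside character classes:

```javascript
// Hypothetical helper: escape regex special characters so `str` matches literally.
function escapeForRegex(str) {
  return str
    .replace(/[|\\{}()[\]^$+*?.]/g, '\\$&')
    .replace(/-/g, '\\x2d');
}

const userInput = 'price (USD): $5.00+';
const literal = new RegExp(escapeForRegex(userInput));

console.log(literal.test('Total price (USD): $5.00+ tax')); // → true
console.log(literal.test('price USD: $5000')); // → false
```

As noted above, this kind of escaping is fine for most cases but does not guarantee safety at every possible position within a larger pattern.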

Conclusion

So there you have it: the past, present, and future of JavaScript regular expressions.

If you want to journey even deeper into the lands of regex, check out Awesome Regex for a list of the best regex testers, tutorials, libraries, and other resources. And for a fun regex crossword puzzle, try your hand at regexle.

May your parsing be prosperous and your regexes be readable.

(gg, yk)

How To Build A Multilingual Website With Nuxt.js


Tim Benniks 2024-08-01T15:00:00+00:00

2025-06-25T15:04:30+00:00

This article is sponsored by Hygraph

Internationalization, often abbreviated as i18n, is the process of designing and developing software applications so that they can be easily adapted to various spoken languages like English, German, French, and more without requiring substantial changes to the codebase. It involves moving away from hardcoded strings and adopting techniques for translating text, formatting dates and numbers, and handling different character encodings, among other tasks.

Internationalization gives users the choice to access a website or application in their native language, which can leave a positive impression on them and is crucial for reaching a global audience.

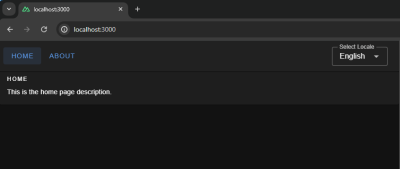

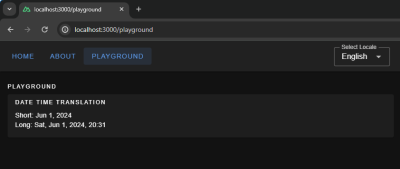

What We’re Making

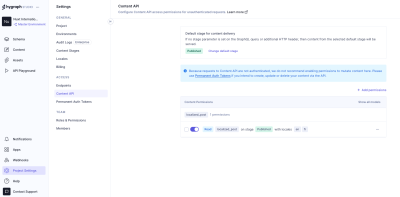

In this tutorial, we’re making a website that puts these i18n pieces together using a combination of libraries and a UI framework. You’ll want to have intermediate proficiency with JavaScript, Vue, and Nuxt to follow along. Throughout this article, we will learn by examples and incrementally build a multilingual Nuxt website. Together, we will learn how to provide i18n support for different languages, lazy-load locale messages, and switch locales at runtime.

After that, we will explore features like interpolation, pluralization, and date/time translations.

And finally, we will fetch dynamic localized content from an API server, using Hygraph as that server. If you do not have a Hygraph account, please create one for free before jumping in.

As a final detail, we will use Vuetify as our UI framework, but please feel free to use another framework if you want. The final code for what we’re building is published in a GitHub repository for reference. And finally, you can also take a look at the final result in a live demo.

The nuxt-i18n Library

nuxt-i18n is a library for implementing internationalization in Nuxt.js applications, and it’s what we will be using in this tutorial. The library is built on top of Vue I18n, which is the de facto standard library for implementing i18n in Vue applications.

What makes nuxt-i18n ideal for our work is that it provides the comprehensive set of features included in Vue I18n while adding more functionalities that are specific to Nuxt, like lazy loading locale messages, route generation and redirection for different locales, SEO metadata per locale, locale-specific domains, and more.

Initial Setup

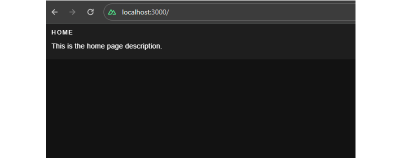

Start a new Nuxt.js project and set it up with a UI framework of your choice. Again, I will be using Vuetify to establish the interface for this tutorial.

Let us add a basic layout for our website and set up some sample Vue templates.

First, a “Blog” page:

<!-- pages/blog.vue -->

<template>

<div>

<v-card color="cardBackground">

<v-card-title class="text-overline">

Home

</v-card-title>

<v-card-text>

This is the home page description

</v-card-text>

</v-card>

</div>

</template>

Next, an “About” page:

<!-- pages/about.vue -->

<template>

<div>

<v-card color="cardBackground">

<v-card-title class="text-overline">

About

</v-card-title>

<v-card-text>

This is the about page description

</v-card-text>

</v-card>

</div>

</template>

This gives us a bit of a boilerplate that we can integrate our i18n work into.

Translating Plain Text

The page templates look good, but notice how the text is hardcoded. As far as i18n goes, hardcoded content is difficult to translate into different locales. That is where the nuxt-i18n library comes in, providing the language-specific strings we need for the Vue components in the templates.

We’ll start by installing the library via the command line:

npx nuxi@latest module add i18n

Inside the nuxt.config.ts file, we need to ensure that we have @nuxtjs/i18n inside the modules array. We can use the i18n property to provide module-specific configurations.

// nuxt.config.ts

export default defineNuxtConfig({

// ...

modules: [

// ...

"@nuxtjs/i18n",

// ...

],

i18n: {

// nuxt-i18n module configurations here

}

// ...

});

Since the nuxt-i18n library is built on top of the Vue I18n library, we can utilize its features in our Nuxt application as well. Let us create a new file, i18n.config.ts, which we will use to provide all vue-i18n configurations.

// i18n.config.ts

export default defineI18nConfig(() => ({

legacy: false,

locale: "en",

messages: {

en: {

homePage: {

title: "Home",

description: "This is the home page description."

},

aboutPage: {

title: "About",

description: "This is the about page description."

},

},

},

}));

Here, we have specified internationalization configurations, like using the en locale, and added messages for the en locale. These messages can be used inside the markup in the templates we made with the help of a $t function from Vue I18n.
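Conceptually, a call like $t("homePage.title") walks the messages object for the active locale using the dotted key. The following is only a simplified sketch of that idea in plain JavaScript, not Vue I18n's actual implementation; the translate function and its fall-back-to-the-key behavior are assumptions made for illustration:

```javascript
const messages = {
  en: { homePage: { title: 'Home' } },
  fr: { homePage: { title: "Bienvenue sur la page d'accueil" } },
};

// Hypothetical lookup: resolve a dotted key against one locale's messages.
function translate(locale, key) {
  const value = key.split('.').reduce(
    (node, part) => (node && typeof node === 'object' ? node[part] : undefined),
    messages[locale]
  );

  // Assumption for this sketch: return the key itself when nothing is found.
  return typeof value === 'string' ? value : key;
}

console.log(translate('en', 'homePage.title')); // → 'Home'
console.log(translate('en', 'missing.key')); // → 'missing.key'
```

Seen this way, switching languages is just a matter of resolving the same keys against a different locale's messages.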

Next, we need to link the i18n.config.ts configurations in our Nuxt config file.

// nuxt.config.ts

export default defineNuxtConfig({

// ...

i18n: {

vueI18n: "./i18n.config.ts"

}

// ...

});

Now, we can use the $t function in our components, as shown below, to look up strings in our internationalization configurations.

Note: There’s no need to import $t since we have Nuxt’s default auto-import functionality.

<!-- pages/blog.vue -->

<template>

<div>

<v-card color="cardBackground">

<v-card-title class="text-overline">

{{ $t("homePage.title") }}

</v-card-title>

<v-card-text>

{{ $t("homePage.description") }}

</v-card-text>

</v-card>

</div>

</template>

Lazy Loading Translations

We have the title and description served from the configurations. Next, we can add more languages to the same config. For example, here’s how we can establish translations for English (en), French (fr) and Spanish (es):

// i18n.config.ts

export default defineI18nConfig(() => ({

legacy: false,

locale: "en",

messages: {

en: {

// English

},

fr: {

// French

},

es: {

// Spanish

}

},

}));

For a production website with a lot of content that needs translating, it would be unwise to ship the messages for every locale in the main bundle. Instead, we should use the nuxt-i18n lazy loading feature to asynchronously load only the required language rather than all of them at once. Also, keeping the messages for all locales in a single configuration file can become difficult to manage over time, and splitting them into one file per locale makes things easier to find.

Let’s set up the lazy loading feature in nuxt.config.ts:

// etc.

i18n: {

vueI18n: "./i18n.config.ts",

lazy: true,

langDir: "locales",

locales: [

{

code: "en",

file: "en.json",

name: "English",

},

{

code: "es",

file: "es.json",

name: "Spanish",

},

{

code: "fr",

file: "fr.json",

name: "French",

},

],

defaultLocale: "en",

strategy: "no_prefix",

},

// etc.

This enables lazy loading and specifies the locales directory that will contain our locale files. The locales array configuration specifies from which files Nuxt.js should pick up messages for a specific language.
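The idea behind lazy loading can be sketched in a few lines of plain TypeScript. This is only an illustration of the pattern, not how the module is implemented internally: the loaders map, the loadLocaleMessages function, and the loadCount counter are all hypothetical, and the inline objects stand in for the JSON files that would normally be fetched on demand.

```typescript
// Illustrative sketch of on-demand locale loading with a simple cache.
// All names here are hypothetical; the i18n module handles this for us.
type Messages = Record<string, unknown>;

// Each loader stands in for an asynchronous fetch of locales/<code>.json.
const loaders: Record<string, () => Promise<Messages>> = {
  en: async () => ({ homePage: { title: "Home" } }),
  fr: async () => ({ homePage: { title: "Bienvenue sur la page d'accueil" } }),
  es: async () => ({ homePage: { title: "Bienvenido a la página de inicio" } }),
};

const loaded = new Map<string, Messages>();
let loadCount = 0; // counts how many times a loader actually ran

async function loadLocaleMessages(code: string): Promise<Messages> {
  const cached = loaded.get(code);
  if (cached) {
    return cached; // already loaded: no second request
  }
  const loader = loaders[code];
  if (!loader) {
    throw new Error(`Unknown locale: ${code}`);
  }
  loadCount += 1;
  const messages = await loader();
  loaded.set(code, messages);
  return messages;
}
```

Calling loadLocaleMessages("fr") twice runs the French loader once, and the Spanish messages are never touched unless a visitor actually switches to Spanish.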

Now, we can create individual files for each language. I’ll drop all three of them right here:

// locales/en.json

{

"homePage": {

"title": "Home",

"description": "This is the home page description."

},

"aboutPage": {

"title": "About",

"description": "This is the about page description."

},

"selectLocale": {

"label": "Select Locale"

},

"navbar": {

"homeButton": "Home",

"aboutButton": "About"

}

}

// locales/fr.json

{

"homePage": {

"title": "Bienvenue sur la page d'accueil",

"description": "Ceci est la description de la page d'accueil."

},

"aboutPage": {

"title": "À propos de nous",

"description": "Ceci est la description de la page à propos de nous."

},

"selectLocale": {

"label": "Sélectionner la langue"

},

"navbar": {

"homeButton": "Accueil",

"aboutButton": "À propos"

}

}

// locales/es.json

{

"homePage": {

"title": "Bienvenido a la página de inicio",

"description": "Esta es la descripción de la página de inicio."

},

"aboutPage": {

"title": "Sobre nosotros",

"description": "Esta es la descripción de la página sobre nosotros."

},

"selectLocale": {

"label": "Seleccione el idioma"

},

"navbar": {

"homeButton": "Inicio",

"aboutButton": "Acerca de"

}

}

We have set up lazy loading, added multiple languages to our application, and moved our locale messages to separate files. The user gets the right messages for the selected locale, and the locale messages are kept in a maintainable manner inside the code base.

Switching Between Languages

We have different locales, but to see them in action, we will build a component that can be used to switch between the available locales.

<!-- components/select-locale.vue -->

<script setup>

const { locale, locales, setLocale } = useI18n();

const language = computed({

get: () => locale.value,

set: (value) => setLocale(value),

});

</script>

<template>

<v-select

:label="$t('selectLocale.label')"

variant="outlined"

color="primary"

density="compact"

:items="locales"

item-title="name"

item-value="code"

v-model="language"

></v-select>

</template>

This component uses the useI18n composable provided by the Vue I18n library and a computed property, language, to get and set the global locale from a select input. To make this even more like a real-world website, we’ll include a small navigation bar that links up all of the website’s pages.

<!-- Navigation bar component (renders <SelectLocale />) -->

<template>

<v-app-bar app :elevation="2" class="px-2">

<div>

<v-btn color="button" to="/">

{{ $t("navbar.homeButton") }}

</v-btn>

<v-btn color="button" to="/about">

{{ $t("navbar.aboutButton") }}

</v-btn>

</div>

<v-spacer />

<div class="mr-4 mt-6">

<SelectLocale />

</div>

</v-app-bar>

</template>

That’s it! Now, we can switch between languages on the fly.

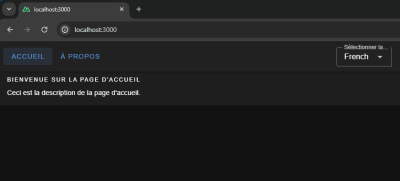

We have a basic layout, but I thought we’d take this a step further and build a playground page we can use to explore more i18n features that are pretty useful when building a multilingual website.

Interpolation and Pluralization

Interpolation and pluralization are internationalization techniques for handling dynamic content and grammatical variations across different languages. Interpolation allows developers to insert dynamic variables or expressions into translated strings. Pluralization addresses the complexities of plural forms in languages by selecting the appropriate grammatical form based on numeric values. With the help of interpolation and pluralization, we can create more natural and accurate translations.

To use pluralization in our Nuxt app, we’ll first add a configuration to the English locale file.

// locales/en.json

{

// etc.

"playgroundPage": {

"pluralization": {

"title": "Pluralization",

"apple": "No Apple | One Apple | {count} Apples",

"addApple": "Add"

}

}

// etc.

}

The pluralization configuration set up for the key apple defines an output — No Apple — if a count of 0 is passed to it, a second output — One Apple — if a count of 1 is passed, and a third — 2 Apples, 3 Apples, and so on — if the count passed in is greater than 1.
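The selection rule described above can be sketched as a small function. This is a simplified illustration that only covers the three-form case used here, and pickPlural is a hypothetical name rather than a Vue I18n API. The library's actual rules also handle other numbers of forms and can be customized per locale.

```typescript
// Illustrative sketch of choosing a plural form from a pipe-separated message.
// `pickPlural` is a hypothetical helper covering only the three-form case.
function pickPlural(message: string, count: number): string {
  const choices = message.split("|").map((choice) => choice.trim());
  // 0 selects the first form, 1 the second, anything larger the last one.
  const index = Math.min(Math.trunc(Math.abs(count)), choices.length - 1);
  // Substitute the {count} placeholder wherever it appears.
  return choices[index].split("{count}").join(String(count));
}

const apple = "No Apple | One Apple | {count} Apples";

console.log(pickPlural(apple, 0)); // No Apple
console.log(pickPlural(apple, 1)); // One Apple
console.log(pickPlural(apple, 5)); // 5 Apples
```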

Here is how we can use it in our component. Whenever you click the Add button, the displayed string changes to match the new count, showing pluralization in action.

<!-- pages/playground.vue -->

<script setup>

const appleCount = ref(0);

const addApple = () => {

appleCount.value += 1;

};

</script>

<template>

<v-container fluid>

<!-- PLURALIZATION EXAMPLE -->

<v-card color="cardBackground">

<v-card-title class="text-overline">

{{ $t("playgroundPage.pluralization.title") }}

</v-card-title>

<v-card-text>

{{ $t("playgroundPage.pluralization.apple", { count: appleCount }) }}

</v-card-text>

<v-card-actions>

<v-btn

@click="addApple"

color="primary"

variant="outlined"

density="comfortable"

>{{ $t("playgroundPage.pluralization.addApple") }}</v-btn

>

</v-card-actions>

</v-card>

</v-container>

</template>

To use interpolation in our Nuxt app, first, add a configuration in the English locale file:

// locales/en.json

{

// etc.

"playgroundPage": {

// etc.

"interpolation": {

"title": "Interpolation",

"sayHello": "Hello, {name}",

"hobby": "My favourite hobby is {0}.",

"email": "You can reach out to me at {account}{'@'}{domain}.com"

},

// etc.

}

// etc.

}

The message for sayHello expects an object passed to it having a key name when invoked — a process known as named interpolation.

The message hobby expects an array to be passed to it and will pick up the 0th element, which is known as list interpolation.

The message email expects an object with the keys account and domain, and joins the two values with the literal string "@". This is known as literal interpolation.
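The three kinds of interpolation can be illustrated with a small, simplified formatter. The interpolate function below is a hypothetical sketch and not Vue I18n's message compiler. It only handles the three placeholder shapes used in this section: {name}, {0}, and {'@'}.

```typescript
// Illustrative sketch of named, list, and literal interpolation.
// `interpolate` is a hypothetical helper, not part of Vue I18n.
type Named = Record<string, string | number>;
type Values = Named | Array<string | number>;

function interpolate(message: string, values: Values): string {
  return message.replace(/\{('[^']*'|[^{}]+)\}/g, (match: string, token: string) => {
    // Literal interpolation: {'@'} becomes @
    if (token.length >= 2 && token.startsWith("'") && token.endsWith("'")) {
      return token.slice(1, -1);
    }
    // List interpolation reads by index, named interpolation reads by key.
    const value = Array.isArray(values) ? values[Number(token)] : values[token];
    // Leave unknown placeholders untouched in this sketch.
    return value === undefined ? match : String(value);
  });
}

console.log(interpolate("Hello, {name}", { name: "Jane" }));
console.log(interpolate("My favourite hobby is {0}.", ["Football", "Cricket"]));
console.log(
  interpolate("You can reach out to me at {account}{'@'}{domain}.com", {
    account: "johndoe",
    domain: "hygraph",
  })
);
```

Only the first element of the hobbies array is used because the message references {0} alone. A message containing {1} would pick up the second element.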

Below is an example of how to use it in the Vue components:

<!-- pages/playground.vue -->

<template>

<v-container fluid>

<!-- INTERPOLATION EXAMPLE -->

<v-card color="cardBackground">

<v-card-title class="text-overline">

{{ $t("playgroundPage.interpolation.title") }}

</v-card-title>

<v-card-text>

<p>

{{

$t("playgroundPage.interpolation.sayHello", {

name: "Jane",

})

}}

</p>

<p>

{{

$t("playgroundPage.interpolation.hobby", ["Football", "Cricket"])

}}

</p>

<p>

{{

$t("playgroundPage.interpolation.email", {

account: "johndoe",

domain: "hygraph",

})

}}

</p>

</v-card-text>

</v-card>

</v-container>

</template>

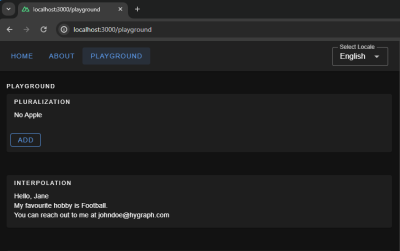

Date & Time Translations

Translating dates and times means adapting date and time formats to the conventions of each locale. Vue I18n can format date strings, handle time zones, and translate day and month names for us. We provide the configuration through the datetimeFormats key inside the vue-i18n config object.

// i18n.config.ts

export default defineI18nConfig(() => ({

fallbackLocale: "en",

datetimeFormats: {

en: {

short: {

year: "numeric",

month: "short",

day: "numeric",

},

long: {

year: "numeric",

month: "short",

day: "numeric",

weekday: "short",

hour: "numeric",

minute: "numeric",

hour12: false,

},

},

fr: {

short: {

year: "numeric",

month: "short",

day: "numeric",

},

long: {

year: "numeric",

month: "short",

day: "numeric",

weekday: "long",

hour: "numeric",

minute: "numeric",

hour12: true,

},

},

es: {

short: {

year: "numeric",

month: "short",

day: "numeric",

},

long: {

year: "2-digit",

month: "short",

day: "numeric",

weekday: "long",

hour: "numeric",

minute: "numeric",

hour12: true,

},

},

},

}));

Here, we have set up short and long formats for all three languages. If you are coding along, you will be able to see the available options for fields like month and year, thanks to TypeScript and the IntelliSense features provided by your code editor. To display the translated dates and times in components, we should use the $d function and pass the format name to it.

<!-- pages/playground.vue -->

<template>

<v-container fluid>

<!-- DATE TIME TRANSLATIONS EXAMPLE -->

<v-card color="cardBackground">

<v-card-title class="text-overline">

{{ $t("playgroundPage.dateTime.title") }}

</v-card-title>

<v-card-text>

<p>Short: {{ $d(new Date(), "short") }}</p>

<p>Long: {{ $d(new Date(), "long") }}</p>

</v-card-text>

</v-card>

</v-container>

</template>

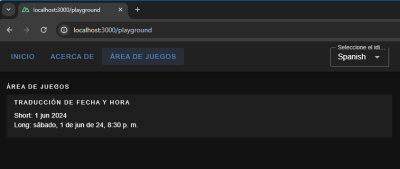
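The options used in datetimeFormats are the same ones accepted by the standard Intl.DateTimeFormat API, so it can help to try them outside of the app. The sketch below is a standalone illustration: the formatDate helper and the sample date are made up for this example, and the time zone is pinned to UTC only so that the output does not depend on the machine running it.

```typescript
// Illustrative sketch: trying the "short" and "long" options directly with Intl.
// `formatDate` and `sample` are hypothetical names used only for this example.
const shortFormat: Intl.DateTimeFormatOptions = {
  year: "numeric",
  month: "short",
  day: "numeric",
};

const longFormat: Intl.DateTimeFormatOptions = {
  ...shortFormat,
  weekday: "short",
  hour: "numeric",
  minute: "numeric",
  hour12: false,
};

function formatDate(
  date: Date,
  locale: string,
  options: Intl.DateTimeFormatOptions
): string {
  // Assumption: UTC is used here purely for reproducible output.
  return new Intl.DateTimeFormat(locale, { ...options, timeZone: "UTC" }).format(date);
}

const sample = new Date(Date.UTC(2024, 9, 7, 13, 0)); // 7 October 2024, 13:00 UTC

console.log(formatDate(sample, "en", shortFormat));
console.log(formatDate(sample, "fr", shortFormat));
console.log(formatDate(sample, "es", shortFormat));
console.log(formatDate(sample, "en", longFormat));
```

The exact strings depend on the locale data available in the runtime, which is why the same options can produce differently ordered and punctuated dates for English, French, and Spanish.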

Localization On the Hygraph Side

We saw how to implement localization with static content. Now, we’ll attempt to understand how to fetch dynamic localized content in Nuxt.

We can build a blog page in our Nuxt App that fetches data from a server. The server API should accept a locale and return data in that specific locale.

Hygraph has a flexible localization API that allows you to publish and query localized content. If you haven’t created a free Hygraph account yet, you can do that on the Hygraph website to continue following along.

Go to Project Settings → Locales and add locales for the API.

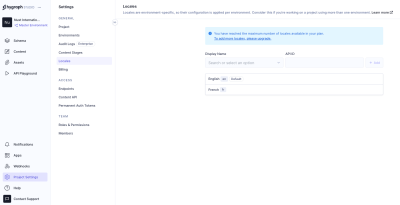

We have added two locales: English and French. Now we need a localized_post model in our schema that has only two fields: title and body. Make sure to mark these as “Localized” fields while creating them.

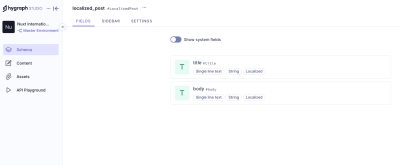

To add permissions for consuming the localized content, go to Project settings → Access → API Access → Public Content API, and assign Read permissions to the localized_post model.

Now, we can go to the Hygraph API playground and add some localized data to the database with the help of GraphQL mutations. To limit the scope of this example, I am simply adding data from the Hygraph API playground. In an ideal world, a create/update mutation would be triggered from the front end after receiving user input.

Run this mutation in the Hygraph API playground:

mutation createLocalizedPost {

createLocalizedPost(

data: {

title: "A Journey Through the Alps",

body: "Exploring the majestic mountains of the Alps offers a thrilling experience. The stunning landscapes, diverse wildlife, and pristine environment make it a perfect destination for nature lovers.",

localizations: {

create: [