
Beyond The Hype: What AI Can Really Do For Product Design


Nikita Samutin 2025-08-18T13:00:00+00:00

2025-08-21T11:03:55+00:00

These days, it’s easy to find curated lists of AI tools for designers, galleries of generated illustrations, and countless prompt libraries. What’s much harder to find is a clear view of how AI is actually integrated into the everyday workflow of a product designer — not for experimentation, but for real, meaningful outcomes.

I’ve gone through that journey myself: testing AI across every major stage of the design process, from ideation and prototyping to visual design and user research. Along the way, I’ve built a simple, repeatable workflow that significantly boosts my productivity.

In this article, I’ll share what’s already working and break down some of the most common objections I’ve encountered — many of which I’ve faced personally.

Stage 1: Idea Generation Without The Clichés

Pushback: “Whenever I ask AI to suggest ideas, I just get a list of clichés. It can’t produce the kind of creative thinking expected from a product designer.”

That’s a fair point. AI doesn’t know the specifics of your product, the full context of your task, or many other critical nuances. The most obvious fix is to “feed it” all the documentation you have. But that’s a common mistake as it often leads to even worse results: the context gets flooded with irrelevant information, and the AI’s answers become vague and unfocused.

Current-gen models can technically process thousands of words, but the longer the input, the higher the risk of missing something important, especially content buried in the middle. This is known as the “lost in the middle” problem.

To get meaningful results, AI doesn’t just need more information — it needs the right information, delivered in the right way. That’s where the RAG approach comes in.

How RAG Works

Think of RAG as a smart assistant working with your personal library of documents. You upload your files, and the assistant reads each one, creating a short summary — a set of bookmarks (semantic tags) that capture the key topics, terms, scenarios, and concepts. These summaries are stored in a kind of “card catalog,” called a vector database.

When you ask a question, the assistant doesn’t reread every document from cover to cover. Instead, it compares your query to the bookmarks, retrieves only the most relevant excerpts (chunks), and sends those to the language model to generate a final answer.
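The retrieval step described above can be sketched in a few lines. This is only a toy illustration: the `embed` function below counts words instead of calling a real embedding model, an in-memory array stands in for the vector database, and the sample chunks and helper names are invented for the example.

```javascript
// Toy "embedding": a bag of word counts. A real RAG setup would call an embedding model.
function embed(text) {
  const vector = new Map();
  for (const word of text.toLowerCase().match(/[a-z]+/g) ?? []) {
    vector.set(word, (vector.get(word) ?? 0) + 1);
  }
  return vector;
}

// Cosine similarity between two sparse word-count vectors.
function cosineSimilarity(a, b) {
  let dot = 0;
  for (const [word, count] of a) dot += count * (b.get(word) ?? 0);
  const norm = (v) => Math.sqrt([...v.values()].reduce((sum, c) => sum + c * c, 0));
  const denominator = norm(a) * norm(b);
  return denominator === 0 ? 0 : dot / denominator;
}

// The "card catalog": every chunk is stored next to its vector.
function buildIndex(chunks) {
  return chunks.map((text) => ({ text, vector: embed(text) }));
}

// Compare the query to every chunk and keep only the best matches.
function retrieve(index, query, topK = 2) {
  const queryVector = embed(query);
  return index
    .map((entry) => ({ text: entry.text, score: cosineSimilarity(queryVector, entry.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK);
}

// Hypothetical chunks, made up for this example.
const index = buildIndex([
  'Users create a savings goal and add money every month.',
  'Group gifts let several users contribute money toward one present.',
  'Push notifications remind users about upcoming payments.',
]);

const results = retrieve(index, 'How do users contribute to group gifts?', 1);
console.log(results[0].text);
// "Group gifts let several users contribute money toward one present."
```

Only the retrieved chunks would then be passed to the language model along with the question. Because this toy version matches on exact words, it would miss paraphrases entirely, which is why real systems rely on learned embeddings.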

How Is This Different from Just Dumping a Doc into the Chat?

Let’s break it down:

Typical chat interaction

It’s like asking your assistant to read a 100-page book from start to finish every time you have a question. Technically, all the information is “in front of them,” but it’s easy to miss something, especially if it’s in the middle. This is exactly what the “lost in the middle” issue refers to.

RAG approach

You ask your smart assistant a question, and it retrieves only the relevant pages (chunks) from different documents. It’s faster and more accurate, but it introduces a few new risks:

- Ambiguous question: You ask, “How can we make the project safer?” and the assistant brings you documents about cybersecurity, not finance.

- Mixed chunks: A single chunk might contain a mix of marketing, design, and engineering notes. That blurs the meaning so the assistant can’t tell what the core topic is.

- Semantic gap: You ask, “How can we speed up the app?” but the document says, “Optimize API response time.” For a human, that’s obviously related. For a machine, not always.

These aren’t reasons to avoid RAG or AI altogether. Most of them can be avoided with better preparation of your knowledge base and more precise prompts. So, where do you start?

Start With Three Short, Focused Documents

These three short documents will give your AI assistant just enough context to be genuinely helpful:

- Product Overview & Scenarios: A brief summary of what your product does and the core user scenarios.

- Target Audience: Your main user segments and their key needs or goals.

- Research & Experiments: Key insights from interviews, surveys, user testing, or product analytics.

Each document should focus on a single topic and ideally stay within 300–500 words. This makes it easier to search and helps ensure that each retrieved chunk is semantically clean and highly relevant.

Language Matters

In practice, RAG works best when both the query and the knowledge base are in English. I ran a small experiment to test this assumption, trying a few different combinations:

- English prompt + English documents: Consistently accurate and relevant results.

- Non-English prompt + English documents: Quality dropped sharply. The AI struggled to match the query with the right content.

- Non-English prompt + non-English documents: The weakest performance. Even though large language models technically support multiple languages, their internal semantic maps are mostly trained in English. Vector search in other languages tends to be far less reliable.

Takeaway: If you want your AI assistant to deliver precise, meaningful responses, do your RAG work entirely in English, both the data and the queries. This advice applies specifically to RAG setups. For regular chat interactions, you’re free to use other languages. The same challenge is also highlighted in this 2024 study on multilingual retrieval.

From Outsider to Teammate: Giving AI the Context It Needs

Once your AI assistant has proper context, it stops acting like an outsider and starts behaving more like someone who truly understands your product. With well-structured input, it can help you spot blind spots in your thinking, challenge assumptions, and strengthen your ideas — the way a mid-level or senior designer would.

Here’s an example of a prompt that works well for me:

Your task is to perform a comparative analysis of two features: “Group gift contributions” (described in group_goals.txt) and “Personal savings goals” (described in personal_goals.txt).

The goal is to identify potential conflicts in logic, architecture, and user scenarios and suggest visual and conceptual ways to clearly separate these two features in the UI so users can easily understand the difference during actual use.

Please include:

- Possible overlaps in user goals, actions, or scenarios;

- Potential confusion if both features are launched at the same time;

- Any architectural or business-level conflicts (e.g. roles, notifications, access rights, financial logic);

- Suggestions for visual and conceptual separation: naming, color coding, separate sections, or other UI/UX techniques;

- Onboarding screens or explanatory elements that might help users understand both features.

If helpful, include a comparison table with key parameters like purpose, initiator, audience, contribution method, timing, access rights, and so on.

AI Needs Context, Not Just Prompts

If you want AI to go beyond surface-level suggestions and become a real design partner, it needs the right context. Not just more information, but better, more structured information.

Building a usable knowledge base isn’t difficult. And you don’t need a full-blown RAG system to get started. Many of these principles work even in a regular chat: well-organized content and a clear question can dramatically improve how helpful and relevant the AI’s responses are. That’s your first step in turning AI from a novelty into a practical tool in your product design workflow.

Stage 2: Prototyping and Visual Experiments

Pushback: “AI only generates obvious solutions and can’t even build a proper user flow. It’s faster to do it manually.”

That’s a fair concern. AI still performs poorly when it comes to building complete, usable screen flows. But for individual elements, especially when exploring new interaction patterns or visual ideas, it can be surprisingly effective.

For example, I needed to prototype a gamified element for a limited-time promotion. The idea was to give users a lottery ticket they could “flip” to reveal a prize. I couldn’t recreate the 3D animation I had in mind in Figma, either manually or using any available plugins. So I described the idea to Claude 4 in Figma Make, and within a few minutes, without writing a single line of code, I had exactly what I needed.

At the prototyping stage, AI can be a strong creative partner in two areas:

- UI element ideation: It can generate dozens of interactive patterns, including ones you might not think of yourself.

- Micro-animation generation: It can quickly produce polished animations that make a concept feel real, which is great for stakeholder presentations or as a handoff reference for engineers.

AI can also be applied to multi-screen prototypes, but it’s not as simple as dropping in a set of mockups and getting a fully usable flow. The bigger and more complex the project, the more fine-tuning and manual fixes are required. Where AI already works brilliantly is in focused tasks — individual screens, elements, or animations — where it can kick off the thinking process and save hours of trial and error.

A quick UI prototype of a gamified promo banner created with Claude 4 in Figma Make. No code or plugins needed.

Here’s another valuable way to use AI in design — as a stress-testing tool. Back in 2023, Google Research introduced PromptInfuser, an internal Figma plugin that allowed designers to attach prompts directly to UI elements and simulate semi-functional interactions within real mockups. Their goal wasn’t to generate new UI, but to check how well AI could operate inside existing layouts — placing content into specific containers, handling edge-case inputs, and exposing logic gaps early.

The results were striking: designers using PromptInfuser were up to 40% more effective at catching UI issues and aligning the interface with real-world input — a clear gain in design accuracy, not just speed.

That closely reflects my experience with Claude 4 and Figma Make: when AI operates within a real interface structure, rather than starting from a blank canvas, it becomes a much more reliable partner. It helps test your ideas, not just generate them.

Stage 3: Finalizing The Interface And Visual Style

Pushback: “AI can’t match our visual style. It’s easier to just do it by hand.”

This is one of the most common frustrations when using AI in design. Even if you upload your color palette, fonts, and components, the results often don’t feel like they belong in your product. They tend to be either overly decorative or overly simplified.

And this is a real limitation. In my experience, today’s models still struggle to reliably apply a design system, even if you provide a component structure or JSON files with your styles. I tried several approaches:

- Direct integration with a component library. I used Figma Make (powered by Claude) and connected our library. This was the least effective method: although the AI attempted to use components, the layouts were often broken, and the visuals were overly conservative. Other designers have run into similar issues, noting that library support in Figma Make is still limited and often unstable.

- Uploading styles as JSON. Instead of a full component library, I tried uploading only the exported styles (colors, fonts) in a JSON format. The results improved: layouts looked more modern, but the AI still made mistakes in how styles were applied.

- Two-step approach: structure first, style second. What worked best was separating the process. First, I asked the AI to generate a layout and composition without any styling. Once I had a solid structure, I followed up with a request to apply the correct styles from the same JSON file. This produced the most usable result, though still far from pixel-perfect.

So yes, AI still can’t help you finalize your UI. It doesn’t replace hand-crafted design work. But it’s very useful in other ways:

- Quickly creating a visual concept for discussion.

- Generating “what if” alternatives to existing mockups.

- Exploring how your interface might look in a different style or direction.

- Acting as a second pair of eyes by giving feedback, pointing out inconsistencies or overlooked issues you might miss when tired or too deep in the work.

AI won’t save you five hours of high-fidelity design time, since you’ll probably spend that long fixing its output. But as a visual sparring partner, it’s already strong. If you treat it like a source of alternatives and fresh perspectives, it becomes a valuable creative collaborator.


Stage 4: Product Feedback And Analytics: AI As A Thinking Exosuit

Product designers have come a long way. We used to create interfaces in Photoshop based on predefined specs. Then we delved deeper into UX with mapping user flows, conducting interviews, and understanding user behavior. Now, with AI, we gain access to yet another level: data analysis, which used to be the exclusive domain of product managers and analysts.

As Vitaly Friedman rightly pointed out in one of his columns, trying to replace real UX interviews with AI can lead to false conclusions as models tend to generate an average experience, not a real one. The strength of AI isn’t in inventing data but in processing it at scale.

Let me give a real example. We launched an exit survey for users who were leaving our service. Within a week, we collected over 30,000 responses across seven languages.

Simply counting the percentages for each of the five predefined reasons wasn’t enough. I wanted to know:

- Are there specific times of day when users churn more?

- Do the reasons differ by region?

- Is there a correlation between user exits and system load?

The real challenge was… figuring out what cuts and angles were even worth exploring. The entire technical process, from analysis to visualizations, was done “for me” by Gemini, working inside Google Sheets. This task took me about two hours in total. Without AI, not only would it have taken much longer, but I probably wouldn’t have been able to reach that level of insight on my own at all.
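To make the idea of “cuts” concrete, here is a rough sketch of the kind of grouping involved. It is not what Gemini ran inside Google Sheets; the field names (`reason`, `region`, `submittedAt`) and the sample rows are hypothetical and exist only to illustrate slicing the same responses along different dimensions.

```javascript
// Hypothetical sample rows; field names are assumptions, not the real survey schema.
const responses = [
  { reason: 'too_expensive', region: 'EU', submittedAt: '2025-03-01T09:15:00Z' },
  { reason: 'missing_feature', region: 'EU', submittedAt: '2025-03-01T09:40:00Z' },
  { reason: 'too_expensive', region: 'US', submittedAt: '2025-03-01T22:05:00Z' },
  { reason: 'too_expensive', region: 'EU', submittedAt: '2025-03-02T22:30:00Z' },
];

// Count rows per key, where keyFn decides which "cut" of the data is used.
function countBy(rows, keyFn) {
  const counts = new Map();
  for (const row of rows) {
    const key = keyFn(row);
    counts.set(key, (counts.get(key) ?? 0) + 1);
  }
  return counts;
}

// Cut 1: exits per hour of day (UTC).
const byHour = countBy(responses, (row) => new Date(row.submittedAt).getUTCHours());

// Cut 2: exit reasons per region.
const byRegionAndReason = countBy(responses, (row) => `${row.region} / ${row.reason}`);

console.log(byHour.get(9)); // 2
console.log(byRegionAndReason.get('EU / too_expensive')); // 2
```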

AI enables near real-time work with large data sets. But most importantly, it frees up your time and energy for what’s truly valuable: asking the right questions.


A few practical notes: Working with large data sets is still challenging for models without strong reasoning capabilities. In my experiments, I used Gemini embedded in Google Sheets and cross-checked the results using ChatGPT o3. Other models, including the standalone Gemini 2.5 Pro, often produced incorrect outputs or simply refused to complete the task.

AI Is Not An Autopilot But A Co-Pilot

AI in design is only as good as the questions you ask it. It doesn’t do the work for you. It doesn’t replace your thinking. But it helps you move faster, explore more options, validate ideas, and focus on the hard parts instead of burning time on repetitive ones. Sometimes it’s still faster to design things by hand. Sometimes it makes more sense to delegate to a junior designer.

But increasingly, AI is becoming the one who suggests, sharpens, and accelerates. Don’t wait to build the perfect AI workflow. Start small. And that might be the first real step in turning AI from a curiosity into a trusted tool in your product design process.

Let’s Summarize

- If you just paste a full doc into chat, the model often misses important points, especially things buried in the middle. That’s the “lost in the middle” problem.

- The RAG approach helps by pulling only the most relevant pieces from your documents. So responses are faster, more accurate, and grounded in real context.

- Clear, focused prompts work better. Narrow the scope, define the output, and use familiar terms to help the model stay on track.

- A well-structured knowledge base makes a big difference. Organizing your content into short, topic-specific docs helps reduce noise and keep answers sharp.

- Use English for both your prompts and your documents. Even multilingual models are most reliable when working in English, especially for retrieval.

- Most importantly: treat AI as a creative partner. It won’t replace your skills, but it can spark ideas, catch issues, and speed up the tedious parts.

Further Reading

- “AI-assisted Design Workflows: How UX Teams Move Faster Without Sacrificing Quality”, Cindy Brummer: This piece is a perfect prequel to my article. It explains how to start integrating AI into your design process, how to structure your workflow, and which tasks AI can reasonably take on, before you dive into RAG or idea generation.

- “8 essential tips for using Figma Make”, Alexia Danton: While this article focuses on Figma Make, the recommendations are broadly applicable. It offers practical advice that will make your work with AI smoother, especially if you’re experimenting with visual tools and structured prompting.

- “What Is Retrieval-Augmented Generation aka RAG”, Rick Merritt: If you want to go deeper into how RAG actually works, this is a great starting point. It breaks down key concepts like vector search and retrieval in plain terms and explains why these methods often outperform long prompts alone.

(yk)

The Power Of The Intl API: A Definitive Guide To Browser-Native Internationalization


Fuqiao Xue 2025-08-08T10:00:00+00:00

2025-08-13T15:04:28+00:00

It’s a common misconception that internationalization (i18n) is simply about translating text. While crucial, translation is merely one facet. One of the complexities lies in adapting information for diverse cultural expectations: How do you display a date in Japan versus Germany? What’s the correct way to pluralize an item in Arabic versus English? How do you sort a list of names in various languages?

Many developers have relied on weighty third-party libraries or, worse, custom-built formatting functions to tackle these challenges. These solutions, while functional, often come with significant overhead: increased bundle size, potential performance bottlenecks, and the constant struggle to keep up with evolving linguistic rules and locale data.

Enter the ECMAScript Internationalization API, more commonly known as the Intl object. This silent powerhouse, built directly into modern JavaScript environments, is an often-underestimated, yet incredibly potent, native, performant, and standards-compliant solution for handling data internationalization. It’s a testament to the web’s commitment to being worldwide, providing a unified and efficient way to format numbers, dates, lists, and more, according to specific locales.

Intl And Locales: More Than Just Language Codes

At the heart of Intl lies the concept of a locale. A locale is far more than just a two-letter language code (like en for English or es for Spanish). It encapsulates the complete context needed to present information appropriately for a specific cultural group. This includes:

- Language: The primary linguistic medium (e.g., en, es, fr).

- Script: The writing system (e.g., Latn for Latin, Cyrl for Cyrillic). For example, zh-Hans for Simplified Chinese vs. zh-Hant for Traditional Chinese.

- Region: The geographic area (e.g., US for United States, GB for Great Britain, DE for Germany). This is crucial for variations within the same language, such as en-US vs. en-GB, which differ in date, time, and number formatting.

- Preferences/Variants: Further specific cultural or linguistic preferences. See “Choosing a Language Tag” from W3C for more information.
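To see these components in practice, a locale tag can be taken apart with the built-in Intl.Locale constructor. The snippet below is a minimal sketch; the `describeLocale` helper and the `zh-Hans-CN` tag are chosen purely for illustration.

```javascript
// Hypothetical helper: split a locale tag into the parts described above.
function describeLocale(tag) {
  const locale = new Intl.Locale(tag);
  return {
    language: locale.language, // e.g., "zh"
    script: locale.script,     // e.g., "Hans" (undefined when the tag omits it)
    region: locale.region,     // e.g., "CN" (undefined when the tag omits it)
  };
}

console.log(describeLocale('zh-Hans-CN'));
// { language: 'zh', script: 'Hans', region: 'CN' }

// Tags are case-insensitive on input and canonicalized on output.
console.log(Intl.getCanonicalLocales('EN-us')); // [ 'en-US' ]
```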

Typically, you’ll want to choose the locale according to the language of the web page. This can be determined from the lang attribute:

// Get the page's language from the HTML lang attribute

const pageLocale = document.documentElement.lang || 'en-US'; // Fallback to 'en-US'

Occasionally, you may want to override the page locale with a specific locale, such as when displaying content in multiple languages:

// Force a specific locale regardless of page language

const tutorialFormatter = new Intl.NumberFormat('zh-CN', { style: 'currency', currency: 'CNY' });

console.log(`Chinese example: ${tutorialFormatter.format(199.99)}`); // Output: ¥199.99

In some cases, you might want to use the user’s preferred language:

// Use the user's preferred language

const browserLocale = navigator.language || 'ja-JP';

const formatter = new Intl.NumberFormat(browserLocale, { style: 'currency', currency: 'JPY' });

When you instantiate an Intl formatter, you can optionally pass one or more locale strings. The API will then select the most appropriate locale based on availability and preference.
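One way to observe that selection is to ask a formatter which locale it ended up with. This is a small sketch; `resolveLocale` is a hypothetical helper name, and the exact result for less common locales depends on the locale data shipped with the runtime.

```javascript
// Hypothetical helper: report which locale a formatter actually resolved to.
function resolveLocale(preferredLocales) {
  return new Intl.NumberFormat(preferredLocales).resolvedOptions().locale;
}

// The list is read in order of preference; the first supported entry wins.
console.log(resolveLocale(['en-US', 'de-DE']));

// supportedLocalesOf() filters a list down to the locales the runtime supports.
console.log(Intl.NumberFormat.supportedLocalesOf(['en-US', 'de-DE']));
```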

Core Formatting Powerhouses

The Intl object exposes several constructors, each for a specific formatting task. Let’s delve into the most frequently used ones, along with some powerful, often-overlooked gems.

1. Intl.DateTimeFormat: Dates and Times, Globally

Formatting dates and times is a classic i18n problem. Should it be MM/DD/YYYY or DD.MM.YYYY? Should the month be a number or a full word? Intl.DateTimeFormat handles all this with ease.

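// Months are zero-indexed, so 5 is June.
// The sample outputs below assume the runtime's local time zone is UTC+8.
const date = new Date(2025, 5, 27, 14, 30, 0); // June 27, 2025, 2:30 PM local time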

// Specific locale and options (e.g., long date, short time)

const options = {

weekday: 'long',

year: 'numeric',

month: 'long',

day: 'numeric',

hour: 'numeric',

minute: 'numeric',

timeZoneName: 'shortOffset' // e.g., "GMT+8"

};

console.log(new Intl.DateTimeFormat('en-US', options).format(date));

// "Friday, June 27, 2025 at 2:30 PM GMT+8"

console.log(new Intl.DateTimeFormat('de-DE', options).format(date));

// "Freitag, 27. Juni 2025 um 14:30 GMT+8"

// Using dateStyle and timeStyle for common patterns

console.log(new Intl.DateTimeFormat('en-GB', { dateStyle: 'full', timeStyle: 'short' }).format(date));

// "Friday 27 June 2025 at 14:30"

console.log(new Intl.DateTimeFormat('ja-JP', { dateStyle: 'long', timeStyle: 'short' }).format(date));

// "2025年6月27日 14:30"

The flexibility of options for DateTimeFormat is vast, allowing control over year, month, day, weekday, hour, minute, second, time zone, and more.
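Because the output depends on the runtime's time zone unless one is specified, it can help to pin both the instant and the `timeZone` option when reproducible output matters. The sketch below does that and also shows `formatToParts()`, which returns the formatted pieces individually; the `meeting` variable is just an illustrative name.

```javascript
// Fix both the instant and the time zone so the output is reproducible.
const meeting = new Date(Date.UTC(2025, 5, 27, 14, 30)); // June 27, 2025, 14:30 UTC

const longDate = { dateStyle: 'long', timeZone: 'UTC' };
console.log(new Intl.DateTimeFormat('en-US', longDate).format(meeting)); // "June 27, 2025"
console.log(new Intl.DateTimeFormat('de-DE', longDate).format(meeting)); // "27. Juni 2025"

// formatToParts() exposes each piece separately, e.g., for custom markup.
const parts = new Intl.DateTimeFormat('en-US', {
  weekday: 'long',
  month: 'long',
  day: 'numeric',
  timeZone: 'UTC',
}).formatToParts(meeting);

const weekday = parts.find((part) => part.type === 'weekday').value;
console.log(weekday); // "Friday"
```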

2. Intl.NumberFormat: Numbers With Cultural Nuance

Beyond simple decimal places, numbers require careful handling: thousands separators, decimal markers, currency symbols, and percentage signs vary wildly across locales.

const price = 123456.789;

// Currency formatting

console.log(new Intl.NumberFormat('en-US', { style: 'currency', currency: 'USD' }).format(price));

// "$123,456.79" (auto-rounds)

console.log(new Intl.NumberFormat('de-DE', { style: 'currency', currency: 'EUR' }).format(price));

// "123.456,79 €"

// Units

console.log(new Intl.NumberFormat('en-US', { style: 'unit', unit: 'meter', unitDisplay: 'long' }).format(100));

// "100 meters"

console.log(new Intl.NumberFormat('fr-FR', { style: 'unit', unit: 'kilogram', unitDisplay: 'short' }).format(5.5));

// "5,5 kg"

Options like minimumFractionDigits, maximumFractionDigits, and notation (e.g., scientific, compact) provide even finer control.
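As a brief illustration of those options, here is a sketch using en-US; the variable names are arbitrary.

```javascript
// Compact notation shortens large numbers.
const compact = new Intl.NumberFormat('en-US', { notation: 'compact' });
console.log(compact.format(1234567)); // "1.2M"

// Pin the number of fraction digits to exactly two.
const fixedTwo = new Intl.NumberFormat('en-US', {
  minimumFractionDigits: 2,
  maximumFractionDigits: 2,
});
console.log(fixedTwo.format(5));       // "5.00"
console.log(fixedTwo.format(3.14159)); // "3.14"

// Percent style multiplies by 100 and appends the locale's percent sign.
const percent = new Intl.NumberFormat('en-US', { style: 'percent' });
console.log(percent.format(0.256)); // "26%"
```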

3. Intl.ListFormat: Natural Language Lists

Presenting lists of items is surprisingly tricky. English uses “and” for conjunction and “or” for disjunction. Many languages have different conjunctions, and some require specific punctuation.

This API simplifies a task that would otherwise require complex conditional logic:

const items = ['apples', 'oranges', 'bananas'];

// Conjunction ("and") list

console.log(new Intl.ListFormat('en-US', { type: 'conjunction' }).format(items));

// "apples, oranges, and bananas"

console.log(new Intl.ListFormat('de-DE', { type: 'conjunction' }).format(items));

// "apples, oranges und bananas" (only the conjunction is localized; the items are not translated)

// Disjunction ("or") list

console.log(new Intl.ListFormat('en-US', { type: 'disjunction' }).format(items));

// "apples, oranges, or bananas"

console.log(new Intl.ListFormat('fr-FR', { type: 'disjunction' }).format(items));

// "apples, oranges ou bananas"
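The formatter also adapts to the number of items and to the `style` option. A short sketch, with the `fruits` array used only for illustration:

```javascript
const fruits = ['apples', 'oranges', 'bananas'];

// With only two items, English drops the commas entirely.
const twoItems = new Intl.ListFormat('en-US', { type: 'conjunction' }).format(fruits.slice(0, 2));
console.log(twoItems); // "apples and oranges"

// style: 'short' abbreviates the conjunction.
const shortList = new Intl.ListFormat('en-US', { style: 'short', type: 'conjunction' }).format(fruits);
console.log(shortList); // "apples, oranges, & bananas"
```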

4. Intl.RelativeTimeFormat: Human-Friendly Timestamps

Displaying “2 days ago” or “in 3 months” is common in UI, but localizing these phrases accurately requires extensive data. Intl.RelativeTimeFormat automates this.

const rtf = new Intl.RelativeTimeFormat('en-US', { numeric: 'auto' });

console.log(rtf.format(-1, 'day')); // "yesterday"

console.log(rtf.format(1, 'day')); // "tomorrow"

console.log(rtf.format(-7, 'day')); // "7 days ago"

console.log(rtf.format(3, 'month')); // "in 3 months"

console.log(rtf.format(-2, 'year')); // "2 years ago"

// French example:

const frRtf = new Intl.RelativeTimeFormat('fr-FR', { numeric: 'auto', style: 'long' });

console.log(frRtf.format(-1, 'day')); // "hier"

console.log(frRtf.format(1, 'day')); // "demain"

console.log(frRtf.format(-7, 'day')); // "il y a 7 jours"

console.log(frRtf.format(3, 'month')); // "dans 3 mois"

The numeric: 'always' option would force “1 day ago” instead of “yesterday”.
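Intl.RelativeTimeFormat expects you to supply both the value and the unit, so a small wrapper is usually needed to turn two timestamps into a phrase. The following is a minimal sketch under simplifying assumptions: `relativeTime` is a hypothetical helper, and months and years are approximated as 30 and 365 days.

```javascript
// Units from largest to smallest, with approximate lengths in milliseconds.
const UNITS = [
  ['year', 365 * 24 * 60 * 60 * 1000],
  ['month', 30 * 24 * 60 * 60 * 1000],
  ['day', 24 * 60 * 60 * 1000],
  ['hour', 60 * 60 * 1000],
  ['minute', 60 * 1000],
  ['second', 1000],
];

// Hypothetical helper: pick the largest unit that fits, then format the difference.
function relativeTime(target, now, locale = 'en-US', numeric = 'auto') {
  const rtf = new Intl.RelativeTimeFormat(locale, { numeric });
  const diffMs = target - now;
  for (const [unit, ms] of UNITS) {
    // "second" is the last entry, so the loop always returns.
    if (Math.abs(diffMs) >= ms || unit === 'second') {
      return rtf.format(Math.round(diffMs / ms), unit);
    }
  }
}

const now = Date.UTC(2025, 5, 27);
const oneDay = 24 * 60 * 60 * 1000;

console.log(relativeTime(now - oneDay, now));                    // "yesterday"
console.log(relativeTime(now - oneDay, now, 'en-US', 'always')); // "1 day ago"
console.log(relativeTime(now + 3 * 60 * 60 * 1000, now));        // "in 3 hours"
```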

5. Intl.PluralRules: Mastering Pluralization

This is arguably one of the most critical aspects of i18n. Different languages have vastly different pluralization rules (e.g., English has singular/plural, Arabic has zero, one, two, many…). Intl.PluralRules allows you to determine the “plural category” for a given number in a specific locale.

const prEn = new Intl.PluralRules('en-US');

console.log(prEn.select(0)); // "other" (for "0 items")

console.log(prEn.select(1)); // "one" (for "1 item")

console.log(prEn.select(2)); // "other" (for "2 items")

const prAr = new Intl.PluralRules('ar-EG');

console.log(prAr.select(0)); // "zero"

console.log(prAr.select(1)); // "one"

console.log(prAr.select(2)); // "two"

console.log(prAr.select(10)); // "few"

console.log(prAr.select(100)); // "other"

This API doesn’t pluralize text directly, but it provides the essential classification needed to select the correct translation string from your message bundles. For example, if you have message keys like item.one, item.other, you’d use pr.select(count) to pick the right one.
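That lookup might be sketched as follows. The `messages` object and the `formatItemCount` helper are hypothetical stand-ins for whatever message bundle format your application uses.

```javascript
// Hypothetical message bundle keyed by plural category.
const messages = {
  one: '{count} item',
  other: '{count} items',
};

// Pick the template that matches the plural category for this count and locale.
function formatItemCount(count, locale = 'en-US') {
  const category = new Intl.PluralRules(locale).select(count);
  const template = messages[category] ?? messages.other; // fall back to "other"
  return template.replace('{count}', String(count));
}

console.log(formatItemCount(0)); // "0 items"
console.log(formatItemCount(1)); // "1 item"
console.log(formatItemCount(5)); // "5 items"
```

A bundle for a language like Arabic would simply provide more keys (zero, one, two, few, many, other), and the same lookup would keep working.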

6. Intl.DisplayNames: Localized Names For Everything

Need to display the name of a language, a region, or a script in the user’s preferred language? Intl.DisplayNames is your comprehensive solution.

// Display language names in English

const langNamesEn = new Intl.DisplayNames(['en'], { type: 'language' });

console.log(langNamesEn.of('fr')); // "French"

console.log(langNamesEn.of('es-MX')); // "Mexican Spanish"

// Display language names in French

const langNamesFr = new Intl.DisplayNames(['fr'], { type: 'language' });

console.log(langNamesFr.of('en')); // "anglais"

console.log(langNamesFr.of('zh-Hans')); // "chinois (simplifié)"

// Display region names

const regionNamesEn = new Intl.DisplayNames(['en'], { type: 'region' });

console.log(regionNamesEn.of('US')); // "United States"

console.log(regionNamesEn.of('DE')); // "Germany"

// Display script names

const scriptNamesEn = new Intl.DisplayNames(['en'], { type: 'script' });

console.log(scriptNamesEn.of('Latn')); // "Latin"

console.log(scriptNamesEn.of('Arab')); // "Arabic"

With Intl.DisplayNames, you avoid hardcoding countless mappings for language names, regions, or scripts, keeping your application robust and lean.
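A common use is a language picker in which each language is labeled in its own language. The sketch below assumes a hypothetical `languageOptions` helper and an arbitrary list of codes.

```javascript
// Hypothetical helper: label each language in its own language.
function languageOptions(codes) {
  return codes.map((code) => ({
    value: code,
    label: new Intl.DisplayNames([code], { type: 'language' }).of(code),
  }));
}

const options = languageOptions(['en', 'fr', 'de', 'ja']);
console.log(options.map((option) => option.label));
// [ 'English', 'français', 'Deutsch', '日本語' ]

// The same constructor also covers currency names.
const currencyNamesEn = new Intl.DisplayNames(['en'], { type: 'currency' });
console.log(currencyNamesEn.of('JPY')); // "Japanese Yen"
```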

Browser Support

You might be wondering about browser compatibility. The good news is that Intl has excellent support across modern browsers. All major browsers (Chrome, Firefox, Safari, Edge) fully support the core functionality discussed (DateTimeFormat, NumberFormat, ListFormat, RelativeTimeFormat, PluralRules, DisplayNames). You can confidently use these APIs without polyfills for the majority of your user base.
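If you still need to cover older environments, a simple feature check with a plain fallback is one option. This is a sketch only; `supportsIntl` and `formatListSafely` are hypothetical helper names.

```javascript
// Hypothetical helper: check whether a given Intl constructor exists.
function supportsIntl(constructorName) {
  return typeof Intl === 'object' && typeof Intl[constructorName] === 'function';
}

// Use Intl.ListFormat when available; otherwise fall back to a plain join.
function formatListSafely(items, locale = 'en-US') {
  if (supportsIntl('ListFormat')) {
    return new Intl.ListFormat(locale, { type: 'conjunction' }).format(items);
  }
  return items.join(', ');
}

console.log(supportsIntl('NumberFormat')); // true in any modern runtime
console.log(formatListSafely(['apples', 'oranges']));
```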

Conclusion: Embrace The Global Web With Intl

The Intl API is a cornerstone of modern web development for a global audience. It empowers front-end developers to deliver highly localized user experiences with minimal effort, leveraging the browser’s built-in, optimized capabilities.

By adopting Intl, you reduce dependencies, shrink bundle sizes, and improve performance, all while ensuring your application respects and adapts to the diverse linguistic and cultural expectations of users worldwide. Stop wrestling with custom formatting logic and embrace this standards-compliant tool!

It’s important to remember that Intl handles the formatting of data. While incredibly powerful, it doesn’t solve every aspect of internationalization. Content translation, bidirectional text (RTL/LTR), locale-specific typography, and deep cultural nuances beyond data formatting still require careful consideration. (I may write about these in the future!) However, for presenting dynamic data accurately and intuitively, Intl is the browser-native answer.

Further Reading & Resources

- MDN Web Docs:

- ECMAScript Internationalization API Specification: The official ECMA-402 Standard

- Choosing a Language Tag

(gg, yk)

Automating Design Systems: Tips And Resources For Getting Started


Joas Pambou 2025-08-06T10:00:00+00:00

2025-08-07T14:02:50+00:00

A design system is more than just a set of colors and buttons. It’s a shared language that helps designers and developers build good products together. At its core, a design system includes tokens (like colors, spacing, fonts), components (such as buttons, forms, navigation), plus the rules and documentation that tie it all together across projects.

If you’ve ever used systems like Google Material Design or Shopify Polaris, for example, then you’ve seen how design systems set clear expectations for structure and behavior, making teamwork smoother and faster. But while design systems promote consistency, keeping everything in sync is the hard part. Update a token in Figma, like a color or spacing value, and that change has to show up in the code, the documentation, and everywhere else it’s used.

The same thing goes for components: when a button’s behavior changes, it needs to update across the whole system. That’s where the right tools and a bit of automation can make the difference. They help reduce repetitive work and keep the system easier to manage as it grows.

In this article, we’ll cover a variety of tools and techniques for syncing tokens, updating components, and keeping docs up to date, showing how automation can make all of it easier.

The Building Blocks Of Automation

Let’s start with the basics. Color, typography, spacing, radii, shadows, and all the tiny values that make up your visual language are known as design tokens, and they’re meant to be the single source of truth for the UI. You’ll see them in design software like Figma, in code, in style guides, and in documentation. Smashing Magazine has covered them before in great detail.

The problem is that they often go out of sync, such as when a color or component changes in design but doesn’t get updated in the code. The more your team grows or changes, the more these mismatches show up; not because people aren’t paying attention, but because manual syncing just doesn’t scale. That’s why automating tokens is usually the first thing teams should consider doing when they start building a design system. That way, instead of writing the same color value in Figma and then again in a configuration file, you pull from a shared token source and let that drive both design and development.
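To make the idea of a shared token source concrete, here is a minimal sketch that turns one nested token object into CSS custom properties. The token names, the values, and the `toCssVariables` helper are all made up for illustration; a real setup would read the tokens from whatever file or tool your team treats as the source of truth.

```javascript
// Hypothetical shared token source; names and values are illustrative only.
const tokens = {
  color: { primary: "#0055ff", surface: "#ffffff" },
  spacing: { sm: "4px", md: "8px" },
};

// Walk the nested token object and emit one CSS custom property per leaf value.
function toCssVariables(node, path = []) {
  return Object.entries(node).flatMap(([key, value]) =>
    typeof value === "object" && value !== null
      ? toCssVariables(value, [...path, key])
      : [`--${[...path, key].join("-")}: ${value};`]
  );
}

// Wrap the declarations in a :root rule so any stylesheet can consume them.
const css = `:root {\n  ${toCssVariables(tokens).join("\n  ")}\n}`;
console.log(css);
```

Because both the design side and the code side would read from the same object, changing `color.primary` in one place is enough to update every consumer.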

There are a few tools that are designed to help make this easier.

Token Studio

Token Studio is a Figma plugin that lets you manage design tokens directly in your file, export them to different formats, and sync them to code.

Specify

Specify lets you collect tokens from Figma and push them to different targets, including GitHub repositories, continuous integration pipelines, documentation, and more.

Design-tokens.dev

Design-tokens.dev is a helpful reference if you want tips for things like how to structure tokens, format them (e.g., JSON, YAML, and so on), and think about token types.

NameDesignTokens.guide

NameDesignTokens.guide helps with naming conventions, which is honestly a common pain point, especially when you’re working with a large number of tokens.

Once your tokens are set and connected, you’ll spend way less time fixing inconsistencies. It also gives you a solid base to scale, whether that’s adding themes, switching brands, or even building systems for multiple products.

That’s also when naming really starts to count. If your tokens or components aren’t clearly named, things can get confusing quickly.

Note: Vitaly Friedman’s “How to Name Things” is worth checking out if you’re working with larger systems.

From there, it’s all about components. Tokens define the values, but components are what people actually use, e.g., buttons, inputs, cards, dropdowns, you name it. In a perfect setup, you build a component once and reuse it everywhere. But without structure, it’s easy for things to “drift”: you end up with five versions of the same button, and what’s in code no longer matches what’s in Figma.

Automation doesn’t replace design, but rather, it connects everything to one source.

The Figma component matches the one in production, the documentation updates when the component changes, and the whole team is pulling from the same library instead of rebuilding their own version. This is where real collaboration happens.

Here are a few tools that help make that happen:

| Tool | What It Does |
|---|---|
| UXPin Merge | Lets you design using real code components. What you prototype is what gets built. |
| Supernova | Helps you publish a design system, sync design and code sources, and keep documentation up-to-date. |
| Zeroheight | Turns your Figma components into a central, browsable, and documented system for your whole team. |

How Does Everything Connect?

A lot of the work starts right inside your design application. Once your tokens and components are in place, tools like Supernova help you take it further by extracting design data, syncing it across platforms, and generating production-ready code. You don’t need to write custom scripts or use the Figma API to get value from automation; these tools handle most of it for you.

But for teams that want full control, Figma does offer an API. It lets you do things like the following:

- Pull token values (like colors, spacing, typography) directly from Figma files,

- Track changes to components and variants,

- Read metadata (like style names, structure, or usage patterns), and

- Map which components are used where in the design.

The Figma API is REST-based, so it works well with custom scripts and automations. You don’t need a huge setup, just the right pieces. On the development side, teams usually use Node.js or Python to handle automation. For example:

- Fetch styles from Figma.

- Convert them into JSON.

- Push the values to a design token repo or directly into the codebase.
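The first two steps above can be sketched roughly as follows. This assumes Figma's REST endpoint for published styles (`GET /v1/files/:key/styles`) with a personal access token in the `X-Figma-Token` header, and it assumes the response lists styles under `meta.styles` with `name`, `style_type`, `node_id`, and `description` fields; check the current API documentation before relying on any of that. The function names are hypothetical, and the endpoint returns style metadata rather than resolved color or type values.

```javascript
// Step 1: fetch published styles for a file. `fetchImpl` is injectable so the
// function can be exercised without network access (an illustrative choice).
async function fetchFigmaStyles(fileKey, token, fetchImpl = fetch) {
  const response = await fetchImpl(
    `https://api.figma.com/v1/files/${fileKey}/styles`,
    { headers: { "X-Figma-Token": token } }
  );
  if (!response.ok) {
    throw new Error(`Figma API request failed with status ${response.status}`);
  }
  const body = await response.json();
  return body.meta.styles; // assumed response shape
}

// Step 2: group the style metadata by type so it can be serialized as JSON.
function stylesToTokenIndex(styles) {
  const index = {};
  for (const style of styles) {
    const group = style.style_type.toLowerCase();
    index[group] ??= {};
    index[group][style.name] = {
      nodeId: style.node_id,
      description: style.description,
    };
  }
  return index;
}

// Made-up sample data standing in for a real API response.
const sampleStyles = [
  { name: "Brand/Primary", style_type: "FILL", node_id: "1:2", description: "Main brand color" },
  { name: "Heading/Large", style_type: "TEXT", node_id: "1:3", description: "" },
];
console.log(JSON.stringify(stylesToTokenIndex(sampleStyles), null, 2));
```

The third step, pushing the result to a repository, would typically be a file write followed by a commit in whatever CI setup the team already uses.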

You won’t need that level of setup for most use cases, but it’s helpful to know it’s there if your team outgrows no-code tools. Either way, it helps to be clear on three questions:

- Where do your tokens and components come from?

- How do updates happen?

- What tools keep everything connected?

The workflow becomes easier to manage once that’s clear. When tokens, components, and documentation stay in sync, your team moves faster and spends less time fixing the same mismatches.

Extracting Design Data

Figma is a collaborative design tool used to create UIs: buttons, layouts, styles, components, everything that makes up the visual language of the product. It’s also where all your design data lives, which includes the tokens we talked about earlier. This data is what we’ll extract and eventually connect to your codebase. But first, you’ll need a setup.

To follow along:

- Go to figma.com and create a free account.

- Download the Figma desktop app if you prefer working locally, but keep an eye on system requirements if you’re on an older device.

Once you’re in, you’ll land on the Figma home screen.

From here, it’s time to set up your design tokens. You can either create everything from scratch or use a template from the Figma community to save time. Templates are a great option if you don’t want to build everything yourself. But if you prefer full control, creating your setup totally works too.

There are other ways to get tokens as well. For example, a site like namedesigntokens.guide lets you generate and download tokens in formats like JSON. The only catch is that Figma doesn’t let you import JSON directly, so if you go that route, you’ll need to bring in a middle tool like Specify to bridge that gap. It helps sync tokens between Figma, GitHub, and other places.

For this article, though, we’ll keep it simple and stick with Figma. Pick any design system template from the Figma community to get started; there are plenty to choose from.

Depending on the template you choose, you’ll get a pre-defined set of tokens that includes colors, typography, spacing, components, and more. These templates come in all types: website, e-commerce, portfolio, app UI kits, you name it. For this article, we’ll be using the /Design-System-Template–Community because it includes most of the tokens you’ll need right out of the box. But feel free to pick a different one if you want to try something else.

Once you’ve picked your template, it’s time to download the tokens. We’ll use Supernova, a tool that connects directly to your Figma file and pulls out design tokens, styles, and components. It makes the design-to-code process a lot smoother.

Step 1: Sign Up on Supernova

Go to supernova.io and create an account. Once you’re in, you’ll land on your dashboard.

Step 2: Connect Your Figma File

To pull in the tokens, head over to the Data Sources section in Supernova and choose Figma from the list of available sources. (You’ll also see other options like Storybook or Figma variables, but we’re focusing on Figma.) Next, click on Connect a new file, paste the link to your Figma template, and click Import.

Supernova will load the full design system from your template. From your dashboard, you’ll now be able to see all the tokens.

Turning Tokens Into Code

Design tokens are great inside Figma, but the real value shows when you turn them into code. That’s how the developers on your team actually get to use them.

Here’s the problem: Many teams default to copying values manually for things like color, spacing, and typography. But when you make a change to them in Figma, the code is instantly out of sync. That’s why automating this process is such a big win.

Instead of rewriting the same theme setup for every project, you generate it. Designs are translated into dev-ready assets automatically, and everything stays in sync from one source of truth.

Now that we’ve got all our tokens in Supernova, let’s turn them into code. First, go to the Code Automation tab, then click New Pipeline. You’ll see different options depending on what you want to generate: React Native, CSS-in-JS, Flutter, Godot, and a few others.

Let’s go with the CSS-in-JS option for the sake of demonstration.

After that, you’ll land on a setup screen with three sections: Data, Configuration, and Delivery.

Data

Here, you can pick a theme. At first, it might only give you “Black” as the option; you can select that or leave it empty. It really doesn’t matter for the time being.

Configuration

This is where you control how the code is structured. I picked PascalCase for how token names are formatted. You can also update how things like spacing, colors, or font styles are grouped and saved.
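To give a sense of what a PascalCase naming setting means in practice, here is a small sketch that converts token paths into PascalCase keys. The `toPascalCase` helper, the token paths, and the values are hypothetical; the actual output of a Supernova pipeline depends on the exporter and its configuration.

```javascript
// Hypothetical helper: turns a token path such as "color/primary/500"
// into a PascalCase identifier such as "ColorPrimary500".
function toPascalCase(tokenPath) {
  return tokenPath
    .split(/[^A-Za-z0-9]+/) // split on slashes, dashes, spaces, and so on
    .filter(Boolean) // drop empty segments
    .map((part) => part[0].toUpperCase() + part.slice(1))
    .join("");
}

// Made-up exported tokens keyed by their path in the design file.
const exportedTokens = {
  "color/primary/500": "#0055ff",
  "spacing/md": "8px",
};

// Build a theme object whose keys follow the PascalCase convention.
const theme = Object.fromEntries(
  Object.entries(exportedTokens).map(([path, value]) => [toPascalCase(path), value])
);
console.log(theme); // { ColorPrimary500: '#0055ff', SpacingMd: '8px' }
```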

Delivery

This is where you choose how you want the output delivered. I chose “Build Only”, which builds the code for you to download.

Once you’re done, click Save. The pipeline is created, and you’ll see it listed in your dashboard. From here, you can download your token code, which is already generated.

Automating Documentation

So, what’s the point of documentation in a design system?

You can think of it as the instruction manual for your team. It explains what each token or component is, why it exists, and how to use it. Designers, developers, and anyone else on your team can stay on the same page — no guessing, no back-and-forth. Just clear context.

Let’s continue from where we stopped. Supernova is capable of handling your documentation. Head over to the Documentation tab. This is where you can start editing everything about your design system docs, all from the same place.

You can:

- Add descriptions to your tokens,

- Define what each base token is for (as well as what it’s not for),

- Organize sections by colors, typography, spacing, or components, and

- Drop in images, code snippets, or examples.

You’re building the documentation inside the same tool where your tokens live. In other words, there’s no jumping between tools and no additional setup. That’s where the automation kicks in. You edit once, and your docs stay synced with your design source. It all stays in one environment.

Once you’re done, click Publish and you will be presented with a new window asking you to sign in. After that, you’re able to access your live documentation site.

Practical Tips For Automations

Automation is great. It saves hours of manual work and keeps your design system tight across design and code. The trick is knowing when to automate and how to make sure it keeps working over time. You don’t need to automate everything right away. But if you’re doing the same thing over and over again, that’s a kind of red flag.

A few signs that it’s time to consider using automation:

- You’re using the same styles across multiple platforms (like web and mobile).

- You have a shared design system used by more than one team.

- Design tokens change often, and you want updates to flow into code automatically.

- You’re tired of manual updates every time the brand team tweaks a color.

There are three steps you need to consider. Let’s look at each one.

Step 1: Keep An Eye On Tools And API Updates

If your pipeline depends on design tools, like Figma, or platforms, like Supernova, you’ll want to know when changes are made and evaluate how they impact your work, because even small updates can quietly affect your exports.

It’s a good idea to check Figma’s API changelog now and then, especially if something feels off with your token syncing. They often update how variables and components are structured, and that can impact your pipeline. There’s also an RSS feed for product updates.

The same goes for Supernova’s product updates. They regularly roll out improvements that might tweak how your tokens are handled or exported. If you’re using open-source tools like Style Dictionary, keeping an eye on the GitHub repo (particularly the Issues tab) can save you from debugging weird token name changes later.

All of this isn’t about staying glued to release notes, but having a system to check if something suddenly stops working. That way, you’ll catch things before they reach production.

Step 2: Break Your Pipeline Into Smaller Steps

A common trap teams fall into is trying to automate everything in one big run: colors, spacing, themes, components, and docs, all processed in a single click. It sounds convenient, but it’s hard to maintain, and even harder to debug.

It’s much more manageable to split your automation into pieces. For example, you might have one workflow that handles your core design tokens (e.g., colors, spacing, and font sizes), another for theme variations (e.g., light and dark themes), and one more for component mapping (e.g., buttons, inputs, and cards). This way, if your team changes how spacing tokens are named in Figma, you only need to update one part of the workflow, not the entire system. It’s also easier to test and reuse smaller steps.
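One way to picture a split pipeline is as a set of small, independent step functions with a runner that reports on each step separately. Everything here is a hypothetical sketch: the step names, the shape of the `source` object, and the `runPipeline` helper are assumptions made for illustration.

```javascript
// Each step is a small function that only knows about its own slice of the data.
const steps = {
  coreTokens: (source) => ({ color: source.color, spacing: source.spacing }),
  themes: (source) => Object.keys(source.themes ?? {}),
  components: (source) => (source.components ?? []).map((c) => c.name),
};

// Run only the requested steps and record success or failure per step,
// so one broken step does not hide the results of the others.
function runPipeline(source, stepNames = Object.keys(steps)) {
  const report = {};
  for (const name of stepNames) {
    try {
      report[name] = { ok: true, output: steps[name](source) };
    } catch (error) {
      report[name] = { ok: false, error: error.message };
    }
  }
  return report;
}

// Made-up design data; the null entry simulates a malformed component.
const designData = {
  color: { primary: "#0055ff" },
  spacing: { md: "8px" },
  themes: { light: {}, dark: {} },
  components: [{ name: "Button" }, null],
};
console.log(runPipeline(designData));
```

With this structure, a naming change that breaks the component step still leaves the core tokens and themes exported, and the report points at the step that needs attention.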

Step 3: Test The Output Every Time

Even if everything runs fine, always take a moment to check the exported output. It doesn’t need to be complicated. A few key things:

- Are the token names clean and readable? If you see something like PrimaryColorColorText, that’s a red flag.

- Did anything disappear or get renamed unexpectedly? It happens more often than you think, especially with typography or spacing tokens after design changes.

- Does the UI still work? If you’re using something like Tailwind, CSS variables, or custom themes, double-check that the new token values aren’t breaking anything in the design or build process.

To catch issues early, it helps to run tools like ESLint or Stylelint right after the pipeline completes. They’ll flag odd syntax or naming problems before things get shipped.
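Alongside a linter, some of the checks listed above can be scripted directly. The sketch below compares a previous export with the current one and flags missing tokens, new tokens, and names that repeat a word. The helper names and the sample exports are hypothetical, and the repeated-word rule is just one possible heuristic.

```javascript
// Flags names where the same word appears twice in a row,
// e.g. "PrimaryColorColorText".
function hasRepeatedWord(name) {
  const words = name.match(/[A-Z][a-z0-9]*|[a-z0-9]+/g) ?? [];
  return words.some(
    (word, i) => i > 0 && word.toLowerCase() === words[i - 1].toLowerCase()
  );
}

// Compare two exports (plain objects keyed by token name).
function compareExports(previous, current) {
  return {
    missing: Object.keys(previous).filter((name) => !(name in current)),
    added: Object.keys(current).filter((name) => !(name in previous)),
    suspicious: Object.keys(current).filter(hasRepeatedWord),
  };
}

// Made-up exports standing in for two pipeline runs.
const previousExport = { ColorPrimary: "#0055ff", SpacingMd: "8px" };
const currentExport = { ColorPrimary: "#0044dd", PrimaryColorColorText: "#111111" };

console.log(compareExports(previousExport, currentExport));
```

A script like this could run right after the pipeline completes and fail the build when `missing` or `suspicious` is not empty.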

How AI Can Help

Once your automation is stable, there’s a next layer that can boost your workflow: AI. It’s not just for writing code or generating mockups, but for helping with the small, repetitive things that eat up time in design systems. When used right, AI can assist without replacing your control over the system.

Here’s where it might fit into your workflow:

Naming Suggestions

When you’re dealing with hundreds of tokens, naming them clearly and consistently is a real challenge. Some AI tools can help by suggesting clean, readable names for your tokens or components based on patterns in your design. It’s not perfect, but it’s a good way to kickstart naming, especially for large teams.

Pattern Recognition

AI can also spot repeated styles or usage patterns across your design files. If multiple buttons or cards share similar spacing, shadows, or typography, tools powered by AI can group or suggest components for systemization even before a human notices.

Automated Documentation

Instead of writing everything from scratch, AI can generate first drafts of documentation based on your tokens, styles, and usage. You still need to review and refine, but it takes away the blank-page problem and saves hours.

Here are a few tools that already bring AI into the design and development space in practical ways:

- Uizard: Uizard uses AI to turn wireframes into designs automatically. You can sketch something by hand, and it transforms that into a usable mockup.

- Anima: Anima can convert Figma designs into responsive React code. It also helps fill in real content or layout structures, making it a powerful bridge between design and development, with some AI assistance under the hood.

- Builder.io: Builder uses AI to help generate and edit components visually. It’s especially useful for marketers or non-developers who need to build pages fast. AI helps streamline layout, content blocks, and design rules.

Conclusion

This article is not about achieving complete automation in the technical sense, but more about using smart tools to streamline the menial and manual aspects of working with design systems. Exporting tokens, generating docs, and syncing design with code can be automated, making your process quicker and more reliable with the right setup.

Instead of rebuilding everything from scratch every time, you now have a way to keep things consistent, stay organized, and save time.

Further Reading

- “Design System Guide” by Romina Kavcic

- “Design System In 90 Days” by Vitaly Friedman

(gg, yk)

UX Job Interview Helpers

Vitaly Friedman 2025-08-05T13:00:00+00:00

2025-08-07T14:02:50+00:00

When talking about job interviews for a UX position, we often discuss how to leave an incredible impression and how to negotiate the right salary. But it’s only one part of the story. The other part is to be prepared, to ask questions, and to listen carefully.

Below, I’ve put together a few useful resources on UX job interviews — from job boards to Notion templates and practical guides. I hope you or your colleagues will find it helpful.

The Design Interview Kit

As you are preparing for that interview, get ready with the Design Interview Kit (Figma), a helpful practical guide that covers how to craft case studies, solve design challenges, write cover letters, present your portfolio, and negotiate your offer. Kindly shared by Oliver Engel.

The Product Designer’s (Job) Interview Playbook (PDF)

The Product Designer’s (Job) Interview Playbook (PDF) is a practical little guide for designers through each interview phase, with helpful tips and strategies on things to keep in mind, talking points, questions to ask, red flags to watch out for and how to tell a compelling story about yourself and your work. Kindly put together by Meghan Logan.

From my side, I can only wholeheartedly recommend that you don’t speak only about your design process. Tell stories about the impact that your design work has produced. Frame your design work as an enabler of business goals and user needs. And include insights about the impact you’ve produced on business goals, processes, team culture, planning, estimates, and testing.

Also, be very clear about the position that you are applying for. In many companies, titles do matter. There are vast differences in responsibilities and salaries between various levels for designers, so if you see yourself as a senior, check whether the position actually reflects that.

A Guide To Successful UX Job Interviews (+ Notion template)

Catt Small’s Guide To Successful UX Job Interviews is a wonderful practical series on how to build a referral pipeline, apply for an opening, prepare for screening and interviews, present your work, and manage salary expectations. You can also download a Notion template.

30 Useful Questions To Ask In UX Job Interviews

In her wonderful article, Nati Asher has suggested many useful questions to ask in a job interview when you are applying as a UX candidate. I’ve taken the liberty of revising some of them and added a few more questions that might be worth considering for your next job interview.

- What are the biggest challenges the team faces at the moment?

- What are the team’s main strengths and weaknesses?

- What are the traits and skills that will make me successful in this position?

- Where is the company going in the next 5 years?

- What are the achievements I should aim for over the first 90 days?

- What would make you think “I’m so happy we hired X!”?

- Do you have any doubts or concerns regarding my fit for this position?

- Does the team have any budget for education, research, etc.?

- What is the process of onboarding in the team?

- Who is in the team, and how long have they been in that team?

- Who are the main stakeholders I will work with on a day-to-day basis?

- Which options do you have for user research and accessing users or data?

- Are there analytics, recordings, or other data sources to review?

- How do you measure the impact of design work in your company?

- To what extent does management understand the ROI of good UX?

- How does UX contribute strategically to the company’s success?

- Who has the final say on design, and who decides what gets shipped?

- What part of the design process does the team spend most time on?

- How many projects do designers work on simultaneously?

- How has the organization overcome challenges with remote work?

- Do we have a design system, and in what state is it currently?

- Why does the company want to hire a UX designer?

- How would you describe the ideal candidate for this position?

- What does a career path look like for this role?

- How will my performance be evaluated in this role?

- How long do projects take to launch? Can you give me some examples?

- What are the most immediate projects that need to be addressed?

- How do you see the design team growing in the future?

- What traits make someone successful in this team?

- What’s the most challenging part of leading the design team?

- How does the company ensure it’s upholding its values?

Before a job interview, have your questions ready. Not only will they convey a message that you care about the process and the culture, but also that you understand what is required to be successful. And this fine detail might go a long way.

Don’t Forget About The STAR Method

Interviewers closer to business will expect you to present examples of your work using the STAR method (Situation — Task — Action — Result), and might be utterly confused if you delve into all the fine details of your ideation process or the choice of UX methods you’ve used.

- Situation: Set the scene and give necessary details.

- Task: Explain your responsibilities in that situation.

- Action: Explain what steps you took to address it.

- Result: Share the outcomes your actions achieved.

As Meghan suggests, the interview is all about how your skills add value to the problem the company is currently solving. So ask about the current problems and tasks. Interview the person who interviews you, too — but also explain who you are, your focus areas, your passion points, and how you and your expertise would fit in a product and in the organization.

Wrapping Up

A final note on my end: never take a rejection personally. Very often, the reasons you are given for rejection are only a small part of a much larger picture — and have almost nothing to do with you. It might be that a job description wasn’t quite accurate, or the company is undergoing restructuring, or the finances are too tight after all.

Don’t despair and keep going. Write down your expectations. Job titles matter: be deliberate about them and your level of seniority. Prepare good references. Have your questions ready for that job interview. As Catt Small says, “once you have a foot in the door, you’ve got to kick it wide open”.

You are a bright shining star — don’t you ever forget that.

Job Boards

- Remote + In-person

- IXDA

- Who Is Still Hiring?

- UXPA Job Bank

- Otta

- Boooom

- Black Creatives Job Board

- UX Research Jobs

- UX Content Jobs

- UX Content Collective Jobs

- UX Writing Jobs

Useful Resources

- “How To Be Prepared For UX Job Interviews,” by yours truly

- “UX Job Search Strategies and Templates,” by yours truly

- “How To Ace Your Next Job Interview,” by Startup.jobs

- “Cracking The UX Job Interview,” by Artiom Dashinsky

- “The Product Design Interview Process,” by Tanner Christensen

- “10 Questions To Ask in a UX Interview,” by Ryan Scott

- “Six questions to ask after a UX designer job interview,” by Nick Babich

Meet “Smart Interface Design Patterns”

You can find more details on design patterns and UX in Smart Interface Design Patterns, our 15h-video course with 100s of practical examples from real-life projects — with a live UX training later this year. Everything from mega-dropdowns to complex enterprise tables — with 5 new segments added every year. Jump to a free preview. Use code BIRDIE to save 15% off.

(yk)

Designing Better UX For Left-Handed People

Vitaly Friedman 2025-07-25T15:00:00+00:00

2025-07-30T15:33:12+00:00

Many products — digital and physical — are focused on “average” users — a statistical representation of the user base, which often overlooks or dismisses anything that deviates from that average, or happens to be an edge case. But people are never edge cases, and “average” users don’t really exist. We must be deliberate and intentional to ensure that our products reflect that.

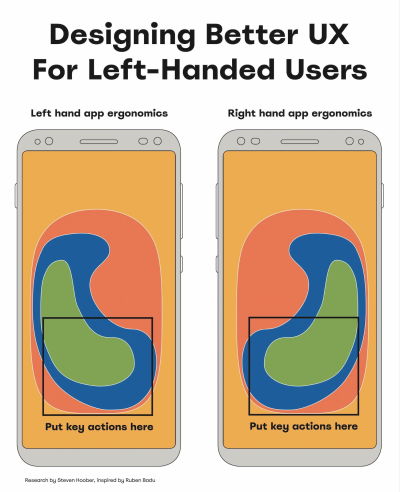

Today, roughly 10% of people are left-handed. Yet most products — digital and physical — aren’t designed with them in mind. And there is rarely a conversation about how a particular digital experience would work better for their needs. So how would it adapt, and what are the issues we should keep in mind? Well, let’s explore what it means for us.

This article is part of our ongoing series on UX. You can find more details on design patterns and UX strategy in Smart Interface Design Patterns 🍣, with live UX training coming up soon.

Left-Handedness ≠ “Left-Only”

It’s easy to assume that left-handed people are usually left-handed users. However, that’s not necessarily the case. Because most products are designed with right-handed use in mind, many left-handed people have to use their right hand to navigate the physical world.

From very early childhood, left-handed people have to rely on their right hand to use tools and appliances like scissors, openers, fridges, and so on. That’s why left-handed people tend to be comfortable with both hands, sometimes using different hands for different tasks, and sometimes using different hands for the same tasks interchangeably. However, only 1% of people are truly ambidextrous, meaning they use both hands equally well.

In the same way, right-handed people aren’t necessarily right-handed users. It’s common to hold a mobile device in the left hand, the right hand, or both, perhaps with a preference for one. But when it comes to writing, the preference is stronger.

Challenges For Left-Handed Users

Because left-handed users are in the minority, there is less demand for left-handed products, and so typically they are more expensive, and also more difficult to find. Troubles often emerge with seemingly simple tools — scissors, can openers, musical instruments, rulers, microwaves and bank pens.

For example, most scissors are designed with the top blade positioned for right-handed use, which makes cutting difficult and less precise. And in microwaves, buttons and interfaces are nearly always on the right, making left-handed use more difficult.

Now, with digital products, most left-handed people tend to adapt to right-handed tools, which they use daily. Unsurprisingly, many use their right hand to operate the mouse. However, it’s often quite different on mobile, where the left hand is often preferred.

- Don’t make design decisions based on left/right-handedness.

- Allow customizations based on the user’s personal preferences.

- Allow users to re-order columns (incl. the Actions column).

- In forms, place action buttons next to the user’s last interaction.

- Keyboard accessibility helps everyone move faster (Esc).

Usability Guidelines To Support Both Hands

As Ruben Babu writes, we shouldn’t design a fire extinguisher that can’t be used by both hands. Think pull up and pull down, rather than swipe left or right. Minimize the distance to travel with the mouse. And when in doubt, align to the center.

- Bottom left → better for lefties, bottom right → for righties.

- With magnifiers, users can’t spot right-aligned buttons.

- On desktop, align buttons to the left/middle, not right.

- On mobile, most people switch between hands when tapping.

- Key actions → put in middle half to two-thirds of the screen.

A simple way to test the mobile UI is the opposite-handed UX test. For key flows, try to complete them with your non-dominant hand to discover UX shortcomings.

For physical products, you might try the oil test. It might be more effective than you might think.

Good UX Works For Both

Our aim isn’t to degrade the UX of right-handed users by meeting the needs of left-handed users. The aim is to create an accessible experience for everyone. Providing a better experience for left-handed people also benefits right-handed people who have a temporary arm disability.

And that’s an often-repeated but also often-overlooked universal principle of usability: better accessibility is better for everyone, even if it might feel that it doesn’t benefit you directly at the moment.

Useful Resources

- “Discover Hidden UX Flaws With the Opposite-Handed UX Test,” by Jeff Huang

- “Right-Aligned Buttons Aren’t More Efficient For Right-Handed People,” by Julia Y.

- “Mobile Accessibility Target Sizes Cheatsheet,” by Vitaly Friedman

- “Why The World Is Not Designed For Left-Handed People,” by Elvis Hsiao

- “Usability For Left Handedness 101,” by Ruben Babu

- Touch Design For Mobile Interfaces, by Steven Hoober

(yk)

Handling JavaScript Event Listeners With Parameters

Amejimaobari Ollornwi 2025-07-21T10:00:00+00:00

2025-07-23T15:03:27+00:00

JavaScript event listeners are very important, as they exist in almost every web application that requires interactivity. As common as they are, it is also essential for them to be managed properly. Improperly managed event listeners can lead to memory leaks and can sometimes cause performance issues in extreme cases.

Here’s the real problem: JavaScript event listeners are often not removed after they are added. Most of the time, they don’t need parameters either, but in the rare cases where they do, they become a little trickier to handle.

A common scenario where you may need to use parameters with event handlers is when you have a dynamic list of tasks, where each task in the list has a “Delete” button attached to an event handler that uses the task’s ID as a parameter to remove the task. In a situation like this, it is a good idea to remove the event listener once the task has been removed, so that the deleted element can be cleaned up by the browser, a process known as garbage collection.

A Common Mistake When Adding Event Listeners

A very common mistake when adding parameters to event handlers is calling the function with its parameters inside the addEventListener() method. This is what I mean:

button.addEventListener('click', myFunction(param1, param2));

The browser responds to this line by calling the function immediately, whether or not a click has happened. The function’s return value, not the function itself, is then passed to addEventListener() as the listener, so the function does not run when the click event actually occurs.
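The mistake described above can be reproduced in a few lines. This is a minimal sketch rather than production code: it assumes a plain EventTarget as a stand-in for the button so that it also runs outside a browser, and the `calls` array is a hypothetical helper used only to count invocations.

```javascript
// EventTarget stands in for a DOM button (an assumption for this sketch).
const button = new EventTarget();
const calls = [];

function myFunction(param1, param2) {
  calls.push([param1, param2]);
}

// Mistake: myFunction runs right here, and its return value (undefined)
// is what addEventListener() receives as the listener.
button.addEventListener("click", myFunction("a", "b"));
const callsAfterRegistration = calls.length;

// Dispatching a click does not call myFunction again,
// because no listener was actually registered.
button.dispatchEvent(new Event("click"));
const callsAfterClick = calls.length;

console.log(callsAfterRegistration, callsAfterClick); // logs: 1 1
```

The function ran once, at registration time, and never in response to the click.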

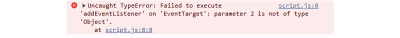

You may also receive the following console error in some cases:

addEventListener on EventTarget: parameter is not of type Object.

This error makes sense because the second parameter of the addEventListener method can only accept a JavaScript function, an object with a handleEvent() method, or simply null. A quick and easy way to avoid this error is by changing the second parameter of the addEventListener method to an arrow or anonymous function.

button.addEventListener('click', (event) => {

myFunction(event, param1, param2); // Runs on click

});

The only hiccup with using inline arrow and anonymous functions is that they cannot be removed with the traditional removeEventListener() method, because that method needs a reference to the exact function that was added. You will have to make use of AbortController instead, which may be overkill for simple cases. AbortController shines when you have multiple event listeners to remove at once.

For simple cases where you have just one or two event listeners to remove, the removeEventListener() method still proves useful. However, in order to make use of it, you’ll need to store your function as a reference to the listener.
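To see why the stored reference matters, here is a small sketch comparing the two situations. It assumes an EventTarget standing in for the button, and the counters `inlineCalls` and `storedCalls` are hypothetical names used only for illustration.

```javascript
// EventTarget stands in for a DOM button (an assumption for this sketch).
const button = new EventTarget();
let inlineCalls = 0;
let storedCalls = 0;

// Inline arrow function: no reference to it is kept anywhere.
button.addEventListener("click", () => { inlineCalls += 1; });
// This arrow function looks identical but is a different function object,
// so removeEventListener() finds nothing to remove.
button.removeEventListener("click", () => { inlineCalls += 1; });

// Stored reference: the same function object is passed to both calls.
const onClick = () => { storedCalls += 1; };
button.addEventListener("click", onClick);
button.removeEventListener("click", onClick);

button.dispatchEvent(new Event("click"));
console.log(inlineCalls, storedCalls); // logs: 1 0
```

The inline listener still fires after the attempted removal, while the stored one is gone.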

Using Parameters With Event Handlers

There are several ways to include parameters with event handlers. However, for the purpose of this demonstration, we are going to constrain our focus to the following two:

Option 1: Arrow And Anonymous Functions

Using arrow and anonymous functions is the fastest and easiest way to get the job done.

To add an event handler with parameters using arrow and anonymous functions, we’ll first need to call the function we’re going to create inside the arrow function attached to the event listener:

const button = document.querySelector("#myButton");

button.addEventListener("click", (event) => {

handleClick(event, "hello", "world");

});

After that, we can create the function with parameters:

function handleClick(event, param1, param2) {

console.log(param1, param2, event.type, event.target);

}

Note that with this method, removing the event listener requires the AbortController. To remove the event listener, we create a new AbortController object and then retrieve the AbortSignal object from it:

const controller = new AbortController();

const { signal } = controller;

Next, we can pass the signal from the controller as an option in the addEventListener() method:

button.addEventListener("click", (event) => {

handleClick(event, "hello", "world");

}, { signal });

Now we can remove the event listener by calling abort() on the controller:

controller.abort();
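Because one signal can be shared, the same pattern extends to several listeners at once. The sketch below is illustrative only: it assumes two EventTarget objects standing in for DOM elements, and the `input` target and `seen` array are hypothetical additions that do not appear in the steps above.

```javascript
// EventTarget objects stand in for DOM elements (an assumption for this sketch).
const button = new EventTarget();
const input = new EventTarget();
const seen = [];

function handleClick(event, param1, param2) {
  seen.push(`${event.type}:${param1} ${param2}`);
}

const controller = new AbortController();
const { signal } = controller;

// Both listeners are tied to the same signal.
button.addEventListener("click", (event) => {
  handleClick(event, "hello", "world");
}, { signal });
input.addEventListener("change", (event) => {
  handleClick(event, "foo", "bar");
}, { signal });

button.dispatchEvent(new Event("click"));
input.dispatchEvent(new Event("change"));

// One call removes every listener registered with this signal.
controller.abort();

button.dispatchEvent(new Event("click"));
input.dispatchEvent(new Event("change"));

console.log(seen); // only the two events dispatched before abort() are recorded
```

Each handler runs once before abort() and not at all afterwards.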

Option 2: Closures

Closures in JavaScript are another feature that can help us with event handlers. Remember the mistake that produced a type error? That mistake can also be corrected with closures. Specifically, with closures, a function can access variables from its outer scope.

In other words, we can access the parameters we need in the event handler from the outer function:

function createHandler(message, number) {
  // Event handler
  return function (event) {
    console.log(`${message} ${number} - Clicked element:`, event.target);
  };
}

const button = document.querySelector("#myButton");

button.addEventListener("click", createHandler("Hello, world!", 1));

This establishes a function, createHandler(), that returns another function. createHandler() is called in the second argument of the addEventListener() method, so the inner function it returns is what gets registered as the event handler. And with the power of closures, the parameters from the outer function will be made available for use in the inner function.

Notice how the event object is made available to the inner function. This is because the inner function is what is being attached as the event handler. The event object is passed to the function automatically because it’s the event handler.

To remove the event listener, we can use the AbortController like we did before. However, this time, let’s see how we can do that using the removeEventListener() method instead.

In order for the removeEventListener() method to work, a reference to the function returned by createHandler() needs to be stored and then passed to the addEventListener() method:

function createHandler(message, number) {

return function (event) {

console.log(`${message} ${number} - Clicked element:`, event.target);

};

}

const handler = createHandler("Hello, world!", 1);

button.addEventListener("click", handler);

Now, the event listener can be removed like this:

button.removeEventListener("click", handler);
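Returning to the task-list scenario from the introduction, the closure approach might be sketched as follows. Everything here is hypothetical and for illustration only: the `tasks` map, the `createDeleteHandler()` factory, and the `deleteCalls` counter are invented names, and an EventTarget stands in for each “Delete” button so the sketch runs without a page.

```javascript
// Hypothetical in-memory task list; each EventTarget stands in for a "Delete" button.
const tasks = new Map([
  [1, { title: "Write draft", deleteButton: new EventTarget() }],
  [2, { title: "Review draft", deleteButton: new EventTarget() }],
]);
let deleteCalls = 0;

function createDeleteHandler(taskId) {
  // A named function expression lets the handler refer to itself for removal.
  return function onDelete(event) {
    deleteCalls += 1;
    // Detach the listener first so the button holds no reference to it.
    event.target.removeEventListener("click", onDelete);
    tasks.delete(taskId);
  };
}

// Each button gets its own handler that remembers its task ID via the closure.
for (const [id, task] of tasks) {
  task.deleteButton.addEventListener("click", createDeleteHandler(id));
}

const firstButton = tasks.get(1).deleteButton;
firstButton.dispatchEvent(new Event("click"));
firstButton.dispatchEvent(new Event("click")); // no effect: listener already removed

console.log([...tasks.keys()], deleteCalls); // logs: [ 2 ] 1
```

Having the handler remove itself is one design choice among several; storing each handler next to its task and calling removeEventListener() from outside would work just as well.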

Conclusion

It is good practice to always remove event listeners whenever they are no longer needed to prevent memory leaks. Most times, event handlers do not require parameters; however, in rare cases, they do. Using JavaScript features like closures, AbortController, and removeEventListener, handling parameters with event handlers is both possible and well-supported.

(gg, yk)

Why Non-Native Content Designers Improve Global UX

Oleksii Tkachenko 2025-07-18T13:00:00+00:00

2025-07-23T15:03:27+00:00

A few years ago, I was in a design review at a fintech company, polishing the expense management flows. It was a routine session where we reviewed the logic behind content and design decisions.

While looking over the statuses for submitted expenses, I noticed a label saying ‘In approval’. I paused, re-read it, and asked myself:

“Where is it? Are the results in? Where can I find them? Are they sending me to the app section called ‘Approval’?”

This tiny label made me question what was happening with my money, and this feeling of uncertainty was quite anxiety-inducing.

My team, all native English speakers, did not flinch, even for a second, and moved forward to discuss other parts of the flow. I was the only non-native speaker in the room, and while the label made perfect sense to them, it still felt off to me.

After a quick discussion, we landed on ‘Pending approval’, the simplest and most widely recognised option internationally. More importantly, this wording makes it clear that there’s an approval process, and it hasn’t taken place yet. There’s no need to go anywhere to do it.

Some might call it nitpicking, but that was exactly the moment I realised how invisible — yet powerful — the non-native speaker’s perspective can be.

In a reality where user testing budgets aren’t unlimited, designing with familiar language patterns from the start helps you prevent costly confusions in the user journey.

Those same confusions often lead to:

- Higher rate of customer service queries,

- Lower adoption rates,

- Higher churn,

- Distrust and confusion.

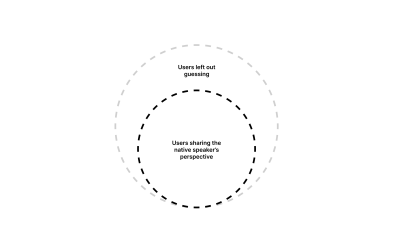

As A Native Speaker, You Don’t See The Whole Picture

Global products are often designed with English as their primary language. This seems logical, but here’s the catch:

Roughly 75% of English-speaking users are not native speakers, which means 3 out of every 4 users.

Native speakers often write on instinct, which works much like autopilot. This can often lead to overconfidence in content that, in reality, is too culturally specific, vague, or complex. And that content may not be understood by 3 in 4 people who read it.

If your team shares the same native language, content clarity remains assumed by default rather than proven through pressure testing.

The price for that is the accessibility of your product. A study by the National Library of Medicine found that US adults who had proficiency in English but did not use it as their primary language were significantly less likely to be insured, even when provided with the same level of service as everyone else.

In other words, they did not finish the process of securing a healthcare provider, a process that’s vital to their well-being, due in part to unclear or inaccessible communication.

If people abandon the process of getting something as vital as healthcare insurance, it’s easy to imagine them dropping out during checkout, account setup, or app onboarding.

Non-native content designers, by contrast, do not write on autopilot. Because of their experience learning English, they’re much more likely to tune into nuances, complexity, and cultural exclusions that natives often overlook. That’s the key to designing for everyone rather than 1 in 4.

Non-native Content Designers Make Your UX Global

Spotting The Clutter And Cognitive Load Issues

When a non-native speaker has to pause, re-read something, or question the meaning of what’s written, they quickly identify it as a friction point in the user experience.

Why it’s important: Every extra second users have to spend understanding your content makes them more likely to abandon the task. This is a high price that companies pay for not prioritising clarity.

Cognitive load is not just about complex sentences but also about reading speed. There’s plenty of research confirming that non-native speakers read more slowly than native speakers. This is especially important when you work on the visibility of system status, which is time-sensitive content that the user needs to scan and understand quickly.

One example you can experience firsthand is an ATM displaying a series of updates and instructions. Even when the messages are quite similar, it is still overwhelming to realise that you missed one because you could not finish reading it in time.

This kind of rapid-fire update can increase frustration and the chance of errors.

Always Advocating For Plain English

Non-native content designers tend to review and rewrite things more often to find the easiest way to communicate the message. What a native speaker may consider clear enough might be dense or difficult for a non-native speaker to understand.

Why it’s important: Simple content better scales across countries, languages, and cultures.

Catching Culture-specific Assumptions And References

When things do not make sense, non-native speakers challenge them. Besides idioms and other obvious traps, native speakers tend to assume that their life experience is shared by most English-speaking users.

Cultural differences might even exist within one globally shared language. Have you tried saying ‘soccer’ instead of ‘football’ in a conversation with someone from the UK? These details may not only cause confusion but also upset people.

Why it’s important: Making sure your product is free from culture-specific references makes your product more inclusive and safeguards you from alienating your users.

They Have Another Level Of Empathy For The Global Audience