Persuasive Design: Ten Years Later

Anders Toxboe

Ten years ago, persuasive design was a relatively new frontier in the field of UX. In a 2015 Smashing article, I was among those who showed a way for practitioners to move from being primarily focused on improving usability and removing friction to also guide users toward a desired outcome. The premise was simple: by leveraging psychology, we could influence user behavior and drive outcomes like higher sign-ups, faster and richer onboarding, and stronger retention and engagement.

A decade later, that promise has proven true — but not in the same way many of us expected. Most product teams still face familiar problems: high bounce rates, weak activation, and users dropping off before experiencing core value. Usability improvements help, but they don’t always address the behavioral gap that sits underneath these patterns.

Persuasive design didn’t disappear — it matured.

Today, the more useful version of this work is often called behavioral design: a way to align product experiences with the real drivers of human behavior, with an ethical mindset. Done well, it can improve conversion, onboarding completion, engagement, and long-term use without slipping into manipulation.

Here’s what I’ll cover:

- What has held up from the last decade of persuasive design;

- What didn’t hold up, especially the limits of pattern-first gamification;

- What changed in how we model behavior, from triggers to context and systems;

- How to use modern behavioral frameworks to improve both discovery and ideation;

- A practical way to run this work as a team, using a five-exercise workshop sequence you can adapt to your product.

The goal is not to add more tactics to your toolkit. It’s to help you build a repeatable, shared approach to diagnosing behavioral barriers and designing solutions that support both users’ goals and business outcomes.

Is Persuasion The Same As Deception?

Behavioral Design is not about slapping deceptive patterns or superficial “growth hacks” onto your UI. It’s about understanding what truly enables or hinders your users on their way to achieving their goal and then designing experiences that guide them to success.

Behavioral design is more about bridging the gap between what users want (achieving their goals, feeling value) and what businesses need (activation, retention, revenue), creating win-win outcomes where good UX and good business results align.

But like all powerful tools, it can be used for both good and bad. The difference lies in the intention of the designer. Some designers argue against promoting behavioral or persuasive design, while others argue that we need to understand the tools, both to learn how to use them well and to see how easily, and often mindlessly, we can fall into the trap of adopting an unethical lens.

If we are not enlightened, then how can we judge what represents good and bad practice? If we do not understand how psychology works, then we lack the awareness needed to spot our biases. If we don’t understand these tools, we can’t spot when they’re misused.

The difference between persuasion and deception is intention, plus accountability.

A Decade Later, What Have We Learned?

In the early 2010s, many teams treated persuasive design as almost synonymous with gamification. If you added points, badges, and leaderboards, you were doing psychology. And to be fair, those surface mechanics did work in some cases, at least in the short term. They could nudge people through onboarding flows or encourage a few extra logins. But over the decade, their limits became clear. Once the novelty wore off, many of these systems felt shallow. Users learned to ignore streaks that did not connect to anything meaningful or dropped out when they realized the game layer was not helping them reach a real goal.

This is where self-determination theory has quietly reshaped how serious teams think about motivation. It distinguishes between extrinsic motivators, such as rewards, points, and status, and intrinsic drivers like autonomy, competence, and relatedness. Put simply, if your “gamification” fights against what people actually care about, it will eventually fail. The interventions that have survived are the ones that support intrinsic needs. A language learning streak that makes you feel more capable and shows progress can work because it makes the core activity feel more meaningful and manageable. A badge that only exists to move a dashboard number, on the other hand, quickly becomes noise.

Lesson 1: From Quick Fixes To Behavioral Strategy

One key lesson from the past decade is that behavioral design creates the most value when it moves beyond isolated fixes and becomes a deliberate strategy. Many product teams start with a narrow goal: improve a sign-up rate, reduce drop-off, or boost early retention. When standard UX optimizations plateau, they turn to psychology for a quick lift, often with success.

The biggest opportunity is not one more uplift on a stubborn metric, but having a systematic way to understand and shape behavior across the product.

Behavioral design isn’t about hacks.

It’s about helping people succeed.

Common signals are easy to recognize: people sign up but never finish onboarding; they click around once and never return; key features sit unused. A behavioral strategy doesn’t just ask “What can we change on this screen?” It asks what is happening in the user’s mind and context at those moments.

That might lead you to design an onboarding experience that uses curiosity and the goal-gradient effect to guide people to a clear first win, instead of hoping they read a help doc. Or it might lead you to design for exploration and commitment over time: social proof where it actually matters, appropriate challenges that stretch but don’t overwhelm, progressive disclosure so advanced features show up when people are ready, and the right triggers at the most opportune moment instead of random nags.

Great products aren’t just easy to use.

They’re easier to commit to.

Product psychology has shifted from scattered hypotheses to a growing library of repeatable patterns. Those patterns only shine when they sit inside a coherent behavioral model: what users are trying to achieve, what blocks them, and which levers the team will pull at each stage.

Simple nudges, inspired by Thaler and Sunstein, have helped popularize behavioral thinking in design. But we’ve also learned that nudges alone rarely solve deeper behavioral challenges. A behavioral strategy goes further: it blends tactics, grounds them in real motivations, and ties experiments to a clear theory of change. The goal is not a one-off win on today’s dashboard, but a way of working that compounds over time.

Lesson 2: Game Mechanics Alone Are Not Enough

Game mechanics alone are no longer a credible behavioral strategy. Ten years ago, adding points, badges, and leaderboards was almost shorthand for “we’re doing psychology.” Today, most teams have learned the hard way that this is decoration unless it serves a real need.

A behavioral approach starts with a blunt question: What is the game layer in service of, and for whom? Does it help people make progress that matters to them, or does it just keep a dashboard happy? If it ignores intrinsic motivation, it will look clever in a slide deck and brittle in production.

In practice, that means points and streaks are not treated as automatic upgrades anymore. Teams ask whether a mechanic helps users feel more competent, more in control, or more connected to others. A streak only makes sense if it reflects real progress in a skill the user cares about. A leaderboard only adds value if people actually want to compare themselves and if the ranking helps them decide what to do next. If it does not pass those tests, it is clutter, not a motivational engine.

Streaks and badges only work when they support something users truly value.

The most effective products now start with the intrinsic side. They are clear about what the product helps users become or achieve, and only then ask whether a game mechanic can amplify that journey. When game elements are added, they live in the core loop rather than on top of it. They show mastery, mark meaningful milestones, and reinforce self-driven goals. That is the difference between treating gamification as a paint job and using it to support users on a path they already care about.

Lesson 3: From Cause And Effect To Holistic Systems Thinking

Early persuasive design often assumed a simple logic: find the broken step, add the right lever, and users move forward. Nice on a slide, rarely true in reality.

People don’t act for a single reason. They have context, history, competing goals, mood, time pressure, trust issues, and different definitions of success. Two users can take the same step for completely different reasons. The same user can behave differently on a different day.

That’s why systems thinking matters. Behavior is shaped by feedback loops and delays, not just one trigger. The outcomes we care about (trust, competence, and habit) are built over time. A change that boosts this week’s conversion can still weaken next month’s retention.

If you have ever shipped a “conversion win” and then watched support tickets, refunds, or churn go up, you have felt this. The local metric improved. The system got worse.

Your design structures either enable people or box them in. Defaults, navigation, feedback, pacing, rewards — each of these decisions reshapes the system and therefore the journeys people take through it.

So the job is not to perfect a single funnel. It is to build an environment where multiple valid paths can succeed, and where the system supports long-term goals, not just short-term clicks.

The job isn’t to perfect one funnel, but to support multiple valid paths.

A mature behavioral strategy is explicit about that. It is designed for several paths instead of one “happy flow,” supports autonomy instead of forcing compliance, and looks at downstream effects instead of only first-step conversion.

Lesson 4: From Triggers To Context

The same shift has happened in the frameworks we use. A decade ago, the Fogg Behavior Model (FBM) was everywhere. It gave teams a simple trio (motivation, ability, and trigger) and a clear message: shouting louder with prompts does not fix low motivation or poor ability. That alone was a useful upgrade.

Fogg’s own work has moved on, too. With Tiny Habits, the focus leans more on identity, emotion, and making behaviors feel easy and personally meaningful. That mirrors a broader shift in the field: away from “fire more prompts” and toward designing environments where the right behavior feels natural.

Teams eventually ran into the same wall: prompts do not fix low capability or missing opportunity. You cannot nag people into skills they do not have or into contexts that do not exist. That is why many teams that work deeply with behavior change have gravitated toward COM-B as a more complete foundation.

COM-B breaks behavior into capability, opportunity, and motivation. It starts with a blunt check: can people actually do this, and does their environment let them? That maps well to modern products, where behavior happens across devices, channels, and moments, not on a single screen. It also plugs into broader behavior change work in health and public policy, so we do not have to reinvent everything inside UX.

Thinking this way nudges teams away from simple cause-and-effect stories. A drop in completion rate is no longer “the button is bad” or “we need more reminders,” but a question about how skills, context, and motivation interact. A capability issue might need a better interface and better education. An opportunity issue might be about device access, timing, or social surroundings, not layout. Motivation might be shaped as much by pricing and brand trust as by any in-product message.

Modern behavioral design is less about activating clicks and more about shaping conditions where action feels easy and meaningful.

This broader lens also makes cross-functional work simpler. Product, design, marketing, and data can share one behavior model and still see their own responsibilities in it. Designers shape perceived capability and opportunity in the interface, marketing shapes motivational framing and triggers, and operations shape the structural opportunity in the service. Instead of everyone pushing their own levers in isolation, COM-B helps teams see that they are working on different parts of the same system.

Lesson 5: Psychology Can Also Be Used To Design And Decode Discovery

COM-B is often used as a bridge between discovery and ideation. On the discovery side, it gives structure to research. You can use it to design interview guides, read analytics, and make sense of observational studies. It was built to diagnose what needs to change for a behavior to shift, which maps neatly onto early product discovery.

Good discovery doesn’t just ask what users say, but examines what their behavior reveals.

Instead of asking “Why did you stop using the product?” and writing down the first answer, you deliberately walk through capability, opportunity, and motivation. You ask things like:

- Can users actually do this, given their skills and knowledge?

- Does their context help or hinder them in practice?

- How strong is their motivation compared with other demands on their time and money?

You walk through recent experiences in detail: which device they used, what time of day it was, who else was around, and what else they were juggling. You talk about how important this behavior is compared with everything else in their life and what trade-offs they make. To participants, these questions feel natural. Under the hood, you are systematically covering all three parts of COM-B, in line with how behavior change practitioners use the model in qualitative work.

You can look at behavioral data in the same way. Funnel drop-offs, time on task, and click patterns are clues: are people stuck because they cannot progress, because the environment gets in the way, or because they do not care enough to continue? Modern analytics tools make it easier to watch what people actually do rather than only what they report, and combining quantitative and qualitative data gives you a fuller picture than either alone.
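As a rough illustration of reading funnel data this way, the sketch below computes the drop-off rate between consecutive funnel steps and picks out the largest one. The step names and counts are made up for the example, and `step_drop_offs` is a hypothetical helper, not part of any analytics tool. The output only tells you where to start asking the capability, opportunity, and motivation questions; it does not tell you which of the three is at fault.

```python
def step_drop_offs(funnel):
    """Return (from_step, to_step, drop_off_rate) for each consecutive pair of steps.

    `funnel` is an ordered list of (step_name, user_count) tuples.
    """
    results = []
    for (prev_name, prev_count), (next_name, next_count) in zip(funnel, funnel[1:]):
        # Share of users who reached the previous step but not the next one.
        rate = 1 - next_count / prev_count if prev_count else 0.0
        results.append((prev_name, next_name, rate))
    return results


# Made-up counts, purely for illustration.
funnel = [
    ("Signed up", 1000),
    ("Started onboarding", 800),
    ("Finished onboarding", 400),
    ("Returned in week 2", 300),
]

drops = step_drop_offs(funnel)
worst = max(drops, key=lambda d: d[2])

for from_step, to_step, rate in drops:
    print(f"{from_step} -> {to_step}: {rate:.0%} drop-off")
print(f"Start the COM-B questions at: {worst[0]} -> {worst[1]}")
```

Pairing the largest drop-off with session recordings or interviews about that specific step is what turns the number into a behavioral diagnosis.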

When there is a gap between what people say and what they do, you treat it as a signal rather than an irritation. Someone might say that saving for retirement is very important, but never set up a recurring transfer. A user might claim that onboarding was simple, while their session shows repeated back and forth between steps. Those mismatches are often where biases, habits, and emotional barriers live. By labelling them in terms of capability, opportunity, and motivation, and linking them to specific barriers like risk aversion, analysis paralysis, status quo bias or present bias, you move from vague “insights” to a structured map of what is actually in the way.

The gap between what people say and what they do is not noise — it’s the map.

The output of this kind of discovery is not just personas and journeys. You also get a clear statement of the current behavior, the target behavior, and the behavioral barriers and enablers that sit between them.

Lesson 6: Use Behavioral Discovery In Your Ideation

The bridge from discovery to ideation can be a single sentence template:

From current behavior to target behavior, by doing X, because of barrier Y.

This “from–to–by–why” framing forces teams to say what they actually believe. You are not just saying “add a checklist.” You are saying: “We believe a checklist will help new users feel more capable, which will increase the chance they complete setup in their first session.” Now it is a behavioral hypothesis you can test with experiments, not just a design idea you hope for.
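If your team tracks hypotheses in a backlog or spreadsheet, the template can be captured as a small record so that every idea carries its barrier with it. The sketch below is one possible shape, not a prescribed format: the class and field names are hypothetical, the wording of the checklist example is illustrative, and tagging it as a capability issue is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class ComB(Enum):
    """The three COM-B components a barrier can be tagged with."""
    CAPABILITY = "capability"
    OPPORTUNITY = "opportunity"
    MOTIVATION = "motivation"


@dataclass(frozen=True)
class BehavioralHypothesis:
    current_behavior: str   # "from"
    target_behavior: str    # "to"
    intervention: str       # "by"
    barrier: str            # "why"
    component: ComB         # which part of COM-B the barrier belongs to

    def as_sentence(self) -> str:
        """Render the hypothesis in the from-to-by-why form."""
        return (
            f"From {self.current_behavior} to {self.target_behavior}, "
            f"by {self.intervention}, because of {self.barrier}."
        )


# Illustrative wording of the checklist example; the COM-B tag is an assumption.
hypothesis = BehavioralHypothesis(
    current_behavior="new users leaving setup unfinished",
    target_behavior="new users completing setup in their first session",
    intervention="adding a setup checklist",
    barrier="low perceived capability",
    component=ComB.CAPABILITY,
)

print(hypothesis.as_sentence())
print(f"Targets: {hypothesis.component.value}")
```

Making the barrier and its COM-B component required fields means an idea cannot be recorded without stating what it is meant to change.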

From there, you can generate several variants that express the same principle in different ways and design experiments around them. You might try a few messages that all lean on loss aversion, or several ways of simplifying a high-friction step, or different forms of social proof that vary in tone and proximity.

The important shift is that you are no longer throwing ideas at the wall. You are deliberately targeting the capability, opportunity, or motivation issues that discovery surfaced, and testing which levers actually work in your context.

Every idea should answer one question: which barrier are we trying to change?

Over time, this loop between behavioral discovery and ideation turns into a local playbook. You learn that in your product, some principles reliably help your users and others fall flat. You also learn that patterns from glowing case studies do not automatically transfer. Even gamification and behavior change research often emphasize context-specific, user-centred implementations rather than generic recipes.

This dual use of psychology in discovery and ideation is one of the bigger shifts of the past decade. A product trio can look at a stubborn drop-off point and ask, together, “Is this a capability, opportunity, or motivation issue?” Then they generate ideas that target that part of the system instead of guessing. That shared language makes behavioral design less of a specialist add-on and more of a normal way for cross-functional teams to reason about their work.

A Decade Later: What Has Proven To Work In Practice

If the first decade of persuasive design taught us anything, it is that behavioral insight is cheap until a team can act on it together.

Methods matter.

Over time, a small set of workshop formats has consistently helped product teams uncover behavioral barriers, align on opportunities, and generate solutions grounded in real psychology instead of surface patterns. As behavioral design has grown from tactical nudges into a strategic discipline, an obvious question keeps coming up: How do teams actually do this work together in practice?

How do product managers, designers, researchers, and engineers move from scattered observations (“people seem confused here”) to a shared behavioral diagnosis, and then to targeted ideas that reflect the real drivers of capability, opportunity, and motivation?

One effective way to make this concrete is through a workshop format. The aim is to help teams:

- Interpret research through a behavioral lens,

- Surface capability, opportunity, and motivation gaps,

- Prioritize high-potential opportunities, and

- Generate ideas that are both psychologically sound and ethically considered.

Real product work is messy and full of feedback loops; nobody follows a perfect step-by-step checklist. But for learning, and especially for introducing behavioral design into a team for the first time, a structured sequence of exercises gives people a mental model. It shows the journey from early discovery to behavioral clarity, from opportunities to ideas, and finally to interventions that have been stress-tested through an ethical lens.

The exercises below are one such recipe. The order is intentional: each step builds on the previous one to move from empathy and insight to prioritized opportunities, concrete concepts, and responsible solutions. No team will follow it letter-perfect every time, but it reflects how behavioral design work tends to unfold when it goes well.

Before diving into the details, here is the full recipe and how each exercise contributes to the bigger behavioral design process:

- Behavioral Empathy Mapping: Builds a shared understanding of the user’s psychological landscape: emotions, habits, misconceptions, and sources of friction.

- Behavioral Journey Mapping: Maps the user’s flow over time, and overlays behavioral enablers and obstacles.

- Behavior Scoring: Prioritizes which behavioral opportunities to tackle first based on impact, feasibility, and evidence.

- Ideas First, Patterns Later: Encourages context-first ideation, then uses persuasive patterns to refine and strengthen promising concepts.

- Dark Reality: Evaluates ethical risks, unintended consequences, and potential misuse.

A note on timing: In practice, this sequence can be run in different formats depending on constraints. For a compact format, teams often run Exercises 1–3 in a half-day workshop, and Exercises 4–5 in a second half-day session. With more time, the work can be spread across a full week: discovery synthesis early in the week, prioritization mid-week, and ideation plus ethical review toward the end. The structure matters more than the schedule; the goal is to preserve the progression from understanding → prioritization → ideation → reflection.

Below is a brief walkthrough of each exercise as I typically facilitate them in workshops in tandem with a library of persuasive patterns.

Exercise 1: Behavioral Empathy Mapping

The first step is building a shared, psychologically informed understanding of users. Behavioral Empathy Mapping extends traditional empathy mapping by paying attention to what users attempt, avoid, postpone, misunderstand, or feel uncertain about. These subtle behavioral signals often reveal more than stated needs or pain points.

Goal: Understand what drives or blocks the target behavior by capturing what users think, feel, say, and do — and spotting behavioral barriers and enablers.

Steps:

- On a whiteboard or large paper, draw an empathy map: Thinking & Feeling, Seeing, Saying & Doing, and Hearing.

- Add research insights by letting everyone silently add sticky notes from interviews, data, support logs, or observations into the quadrants. One insight per note.

- Identify barriers and enablers. Cluster notes that make the behavior harder (barriers) or easier (enablers).

Output: A focused map of the psychological and contextual forces shaping the target behavior, ready to feed into Behavioral Journey Mapping.

Exercise 2: Behavioral Journey Mapping

Once you understand the user’s mindset and context, the next step is to map how those forces play out across time. Behavioral Journey Mapping overlays the user’s goals, actions, emotions, and environment onto the product journey, highlighting the specific moments where behavior tends to stall or shift.

Unlike traditional journey maps, the behavioral version focuses on where capability breaks down, where the environment works against the user, and where motivation fades or conflicts arise. These become early signals of where change is both needed and possible.

The output shows the team precisely where the product is asking too much, where users lack support, or where additional motivation or clarity might be required.

Goal: Map the steps from the user’s starting point to the target behavior, and capture the key enablers and barriers along the way.

Steps:

- Draw a horizontal line from A (starting point) to B (target behavior).

- Have everyone write the steps a user takes from A to B on sticky notes (one per note). Include actions inside and outside the product.

- Place the notes in order along the line. Merge duplicates and align on a shared sequence.

- Extend the vertical axis with two rows:

- Enablers (what could help users move forward),

- Barriers (what could slow or stop users).

- Look for steps with many barriers or few enablers. These are behavioral hot spots.

- Highlight the steps where a good nudge could meaningfully help users complete the journey.

Output: A clear, behavior-focused journey showing where users struggle, why, and which moments offer the most leverage for change.
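If the journey map is later transcribed from sticky notes into a document or spreadsheet, the hot-spot check can be sketched in a few lines. This is a minimal sketch under stated assumptions: the exercise itself does not define how many barriers count as "many", so the `min_barriers` and `max_enablers` thresholds are hypothetical defaults, and the steps and notes are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class JourneyStep:
    name: str
    enablers: list = field(default_factory=list)  # notes on what helps users forward
    barriers: list = field(default_factory=list)  # notes on what slows or stops users


def find_hot_spots(steps, min_barriers=2, max_enablers=0):
    """Flag steps with many barriers or few enablers.

    Both thresholds are assumptions; tune them to the size of your map.
    """
    return [
        step
        for step in steps
        if len(step.barriers) >= min_barriers or len(step.enablers) <= max_enablers
    ]


# Invented journey, purely for illustration.
journey = [
    JourneyStep("Create account", enablers=["Single sign-on"]),
    JourneyStep(
        "Connect data source",
        barriers=["Needs admin credentials", "Unclear why it is required"],
    ),
    JourneyStep(
        "Invite a teammate",
        enablers=["Pre-filled invite message"],
        barriers=["Unsure who to invite"],
    ),
]

hot_spots = find_hot_spots(journey)
for step in hot_spots:
    print(f"Hot spot: {step.name} ({len(step.barriers)} barriers, {len(step.enablers)} enablers)")
```

A count like this is only a pointer for discussion; the team still decides which flagged steps are worth a closer look.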

Exercise 3: Behavior Scoring

With a clearer picture of the user journey and the moments that could benefit from a behaviorally helpful hand, you are ready to decide which behavior makes the most sense to try to influence.

Goal: Decide which potential target behaviors are worth focusing on first, based on impact, ease of change, and ease of measurement.

Steps:

- List potential target behaviors. Based on the output of the Behavioral Journey Mapping, list behaviors that could potentially be targeted. One behavior per sticky note. Be as concrete as possible (what users do, where, and when).

- Create a table with the following columns:

- Impact of behavior change (how much it could move the goal),

- Ease of change (how realistic it is to influence),

- Ease of measurement (how straightforward it is to track).

- Enter each listed behavior into the table and score them from 0 to 10 in each column.

- Sort behaviors by total score and discuss the highest-scoring ones:

- Do they make sense given what you know about users and constraints?

- Select the primary target behaviors you want to carry into the next exercises.

Optionally, note “bonus behaviors” that might follow as a side effect.

| Potential target behaviors | Impact of behavior change | Ease of change | Ease of measurement | Total |
|---|---|---|---|---|
| … | | | | |
| … | | | | |
| … | | | | |

Output: A small set of prioritized target behaviors with a clear rationale for why they matter now, and a list of lower-priority behaviors you may revisit later.

A filled-out Behavior Scoring table could look like this:

| Potential target behaviors | Impact of behavior change | Ease of change | Ease of measurement | Total |
|---|---|---|---|---|
| User completes onboarding checklist in first session. | 8 | 6 | 9 | 23 |
| User invites at least one teammate within 7 days. | 9 | 4 | 8 | 21 |
| User watches the full product tour video. | 4 | 7 | 6 | 17 |
| User reads help documentation during onboarding. | 3 | 5 | 4 | 12 |

In this case, the checklist completion emerges as the strongest initial focus: it has high impact, is realistically influenceable through design changes, and can be measured reliably. Inviting a teammate may be strategically important, but it may require broader changes beyond interface design, making it a secondary focus.
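For teams that keep their scores in a script or notebook rather than on a whiteboard, the scoring and sorting steps can be sketched as follows. The numbers are the ones from the filled-out table above. The class and function names are hypothetical, and the unweighted sum of the three columns is simply the total used in the table, not the only way to combine the scores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BehaviorScore:
    behavior: str
    impact: int               # how much the change could move the goal (0-10)
    ease_of_change: int       # how realistic it is to influence (0-10)
    ease_of_measurement: int  # how straightforward it is to track (0-10)

    def __post_init__(self):
        # Reject scores outside the 0-10 range used in the exercise.
        for name in ("impact", "ease_of_change", "ease_of_measurement"):
            value = getattr(self, name)
            if not 0 <= value <= 10:
                raise ValueError(f"{name} must be between 0 and 10, got {value}")

    @property
    def total(self) -> int:
        # Unweighted sum, matching the Total column in the table.
        return self.impact + self.ease_of_change + self.ease_of_measurement


def rank_behaviors(scores):
    """Return behaviors sorted by total score, highest first."""
    return sorted(scores, key=lambda s: s.total, reverse=True)


scores = [
    BehaviorScore("User watches the full product tour video.", 4, 7, 6),
    BehaviorScore("User completes onboarding checklist in first session.", 8, 6, 9),
    BehaviorScore("User reads help documentation during onboarding.", 3, 5, 4),
    BehaviorScore("User invites at least one teammate within 7 days.", 9, 4, 8),
]

ranked = rank_behaviors(scores)
for score in ranked:
    print(f"{score.total:>2}  {score.behavior}")
```

The ranking is a starting point for the discussion step, not a replacement for it: a behavior with a lower total, such as inviting a teammate, may still be strategically important.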

Exercise 4: Ideas First, Patterns Later

Once the team has agreed on which behavior matters most, the next risk is jumping too quickly to familiar psychological tricks. One of the clearest lessons has been that starting with “the pattern” often leads to generic solutions that feel clever but fail in context.

This exercise deliberately separates idea generation from psychological framing.

Goal: Generate solutions grounded in user context first, then use psychological principles to sharpen and strengthen them.

Steps:

- Start by restating the prioritized target behavior and the key barrier identified during journey mapping. Keep this visible throughout the exercise.

- Then give the team a short, focused ideation window (10–15 minutes). The rule here is simple: no references to behavioral models, cognitive biases, or persuasive patterns yet. Ideas should come directly from the user context, constraints, and moments uncovered earlier.

- Collect ideas on a shared surface and group similar concepts. Look for multiple ways of solving the same underlying problem (cluster them together).

- Only now do you introduce a library of psychological principles and techniques. I developed the persuasive patterns for this exact purpose. The goal of this step is not to replace ideas, but to refine them:

- Which ideas could be strengthened by reducing friction?

- Which might benefit from clearer feedback, social signals, or better timing?

- Are there alternative ways to achieve the same effect more respectfully or more clearly?

Patterns are used as lenses, not prescriptions. If a pattern does not improve clarity, agency, or usefulness in this context, it is simply ignored.

Output: A refined set of solution concepts that are grounded in real user context and supported, where appropriate, by behavioral principles rather than driven by them.

This sequencing helps teams avoid “pattern-first design,” where ideas are reverse-engineered to fit a theory instead of addressing real human situations.

Exercise 5: Dark Reality

Before ideas turn into experiments or shipped features, they need one final test. Not for feasibility or metrics, but for ethics.

Over the years, this step has proven critical. Many persuasive solutions only reveal their downside when you imagine them working too well, or being applied in the wrong hands, or used on the wrong day by the wrong person.

Goal: Surface ethical risks, unintended consequences, and potential misuse before implementation.

Steps:

- Take one or two of the strongest ideas from the previous exercise.

- Imagine worst-case scenarios by asking the team to deliberately shift perspective:

- What if a competitor used this against us?

- What if this nudges users when they’re stressed, tired, or vulnerable?

- What happens if this works repeatedly over months, not once?

- Could this create pressure, guilt, or dependence?

- Capture concerns around autonomy, trust, fairness, inclusivity, or long-term well-being.

- For each risk, explore ways to soften or counterbalance the effect:

- Clearer intent or transparency,

- Lower frequency or gentler timing,

- Explicit opt-outs,

- Alternative paths forward.

- Some ideas are reshaped. Some are paused. Some survive intact, but now with greater confidence.

Output: Solutions that have been stress-tested ethically, with known risks acknowledged and mitigated rather than ignored.

Building A Shared Vocabulary For Product Psychology

The teams that get the most out of behavioral design rarely have a single “psychology expert.” Instead, the whole team shares a vocabulary around product psychology and knows how to discuss customer problems in behavioral terms.

A shared vocabulary turns psychology into cross-functional work.

When patterns and principles are shared:

- Product, design, engineering, and marketing can talk about behavior without talking past each other.

- Discovery insights are easier to interpret because common barriers and drivers have names.

- Ideas can be framed as behavioral hypotheses (“we believe this will increase early competence…”) instead of vague guesses.

The Persuasive Patterns collection grew from this need: giving teams a common language and a concrete set of examples to point at. Whether used as a printed deck in a workshop or as long-form references during everyday work, the goal is the same: make product psychology something the whole team can see and discuss.

Persuasive design was often framed as a bag of tricks. Today, the work looks different:

- Game mechanics are used to support intrinsic motivation, not drive vanity engagement.

- Frameworks like COM-B and systems thinking help teams see behavior in context, not as a single trigger.

- Behavioral insight is used to shape discovery and ideation, not just last-minute copy changes.

- Ethics is part of the design brief, not an afterthought.

The next step is not more sophisticated nudges. It is a more systematic practice: simple methods, shared language, and a habit of asking “What is really going on in our users’ lives here?”

If you start by focusing on one behavioral problem, use a couple of the exercises in this article, and give your team a shared set of patterns to reference, you are already practicing persuasive design in the way it has evolved over the last ten years: grounded in evidence, respectful of users, and aimed at outcomes that matter on both sides of the screen.

Human Strategy In An AI-Accelerated Workflow


Carrie Webster

I’ve been working in User Experience design for more than twenty years. Long enough to have seen the many job titles, from when stakeholders asked us to “just make it pretty” to when wireframes were delivered as annotated PDFs. I’ve seen many tools come and go over the years, methodologies rise and fall, and entire platforms disappear.

Yet, nothing has unsettled designers quite like AI.

When generative AI tools first entered my workflow, my reaction wasn’t excitement — it was unease, with a little bit of curiosity. Watching an interface appear in seconds, complete with sensible spacing, readable typography, and halfway-decent copy, triggered a very real fear: If a machine can do this, where does that leave me?

That fear is now widespread. Designers at every level ask the same question, often quietly: “Will an AI agent replace me by next week/month/year?” While the difference between next week and next year seems like a lot, the answer depends on where you are in your career and the speed at which your employer chooses to engage with AI tools. I have been lucky in several roles to be working with organisations that haven’t allowed the use of AI tools due to data security concerns. If you’re interested in any of these conversations, you can view the discussions happening on platforms like Reddit.

Fearing the takeover of AI in our roles is not irrational. We’re seeing AI generate wireframes, prototypes, personas, usability summaries, accessibility suggestions, and entire design systems. Tasks that once took days can now literally take minutes.

Here’s the uncomfortable truth: If your role is largely about producing artefacts, drawing buttons, aligning components, or translating instructions into screens, then parts of that work are already being automated.

Still, UX design has never truly been about just creating a user interface.

UX is about navigating ambiguity. It’s about advocating for humans in systems optimised for efficiency. It’s about translating messy human needs and equally messy business goals into experiences that feel coherent, fair, sensible, and usable. It’s about solving human problems by creating a useful and effective user experience.

AI isn’t replacing that work. Rather, it’s amplifying everything around it. The real shift happening is that designers are moving from being makers of outputs to directors of intent. From creators to curators. From hands-on executors to strategic decision-makers. That feels exciting to me, as do the creativity and ingenuity this shift brings to the world of UX.

And that shift doesn’t reduce our value as UX designers, but it does redefine it.

What AI Does Better Than Us (The “Boring” Stuff)

Let’s be clear, AI is better than humans at certain aspects of design work. Fighting that reality only keeps us stuck in fear.

Speed And Volume

AI is exceptionally good at generating large volumes of ideas quickly. For example, layout variations, copy options, component structures, and onboarding flows can all be produced in seconds. In early-stage design, this changes everything. Instead of spending hours sketching three concepts, you can review thirty. That doesn’t eliminate creativity but does expand the playground.

McKinsey estimates that generative AI can reduce the time spent on creative and design-related tasks by up to 70%, particularly during ideation and exploration phases.

AI can also help with the research side of UX, for example, exploring the habits of a certain demographic, and creating personas. While this can reduce research time required, the designer is still required to guardrail this by providing accurate prompts and reviewing generated responses. I have personally found that using AI to assist with the initial research for design projects is incredibly useful, specifically when there is limited time and access to users.

Consistency And Rule Adherence

Design systems live or die by consistency. AI excels at following rules relentlessly: colour tokens, spacing systems, typography scales, and accessibility standards. It doesn’t forget. It doesn’t get tired. It doesn’t “eyeball it.”

AI’s precision makes it incredibly valuable for maintaining large-scale design systems, especially in enterprise or government environments where consistency and compliance matter more than novelty. This is one component of my UX role that I am happy to hand over to AI to manage!

Data Processing At Scale

AI can analyse behavioural data at volumes that are challenging, if not impossible, for a human team to reasonably process. User journey paths, scroll depth, heatmaps of mouse interactions, conversion funnels: AI can identify patterns and anomalies in all of them almost instantly.

Behavioural analytics platforms increasingly rely on AI to surface insights that designers might otherwise miss. Contentsquare, an AI-powered analytics platform, talks about the impacts and benefits of utilising behavioural analytics data. I’ve always said that quantitative data tells us the “what”, and qualitative data tells us the “why”. This is the human component of research where we get to connect with the users to understand the reason driving the behaviour.

The key insight here is simple: Analysing large volumes of behavioural data was never where our highest value lay.

If AI can take on repetitive production, system enforcement, and raw data analysis, designers would be free to focus on interpretation, judgment, and human meaning, the hardest parts of the job.

What Humans Do Better Than AI (The “Heart” Stuff)

For all its power, AI has a fundamental limitation: it has never been and will never be human.

Empathy Is Lived Experience

AI can describe frustration. It can summarise user feedback. It can mimic empathetic language. But it has never felt the quiet rage of a broken form, the anxiety of submitting sensitive data, or the shame of not understanding an interface that assumes too much.

Empathy in UX isn’t a dataset. It’s a lived, embodied understanding of human vulnerability. This is why user interviews still matter. Why contextual inquiry still matters. Why designers who deeply understand their users consistently make better decisions.

In a previous role, I designed an incredibly complex fraud alert platform. The key to that design’s success was my understanding of the variety of issues customers faced. I gathered this information directly from members of the customer-facing team. It lived in their heads and was based on direct experience with customers. No AI could know or access these goldmines of human experience.

As the Nielsen Norman Group reminds us, good UX design is not about interfaces. It’s about communication and understanding.

Ethics Require Judgment

AI optimises for the objectives we give it. If the goal is engagement, it will try to maximise engagement — regardless of long-term harm.

It doesn’t inherently recognise dark patterns, manipulation, or emotional exploitation. Infinite scroll, variable rewards, and addictive loops are all patterns AI can enthusiastically optimise unless a human intervenes.

The Center for Humane Technology has documented how algorithmic optimisation can unintentionally undermine wellbeing.

Ethical UX design requires designers who can say, “We could do this, but we shouldn’t.”

Strategy Lives In Context

AI doesn’t sit in stakeholder meetings. It doesn’t hear what’s implied but not stated. It doesn’t understand organisational politics, regulatory nuance, or long-term positioning.

This is why senior designers increasingly operate at the intersection of product, strategy, and culture.

The lesson is clear: As AI takes over execution, human designers become the guardians of intent.

How The Daily Work Of A Designer Is Changing

This shift isn’t theoretical. It’s already reshaping daily design practice.

From Designing To Prompting

Designers are moving from manipulating pixels to articulating intent. Clear goals, constraints, and priorities become the input.

Instead of asking AI to “draw a dashboard,” the task becomes:

- “Create a dashboard that reduces cognitive load for first-time users.”

- “Explore layouts optimised for accessibility and low vision.”

Prompting isn’t about clever wording; it’s about clarity of thinking and understanding the intent of the outcomes. You may need to tweak your prompts as you go, but this is all part of the learning process of directing AI to deliver the outcomes needed.

From Making To Choosing

AI produces options. Designers make decisions.

A significant portion of future design work will involve reviewing, critiquing, and refining AI-generated outputs, and then selecting what best serves the user and aligns with ethical, business, and accessibility goals.

This mirrors how experienced designers already work: mentoring juniors, reviewing their concepts, and guiding direction, but at a much greater scale, given the sheer number of design options AI tools can generate.

The Movie Director Metaphor

I often describe the modern designer as a movie director. A director doesn’t operate the camera, build the set, or act every role, but they are responsible for the story, the emotional intent, and the audience experience.

AI tools are the crew. Designers are responsible for the meaning of the story.

A Real-World Shift: What This Looks Like In Practice

To make this less abstract, let’s ground it in a familiar scenario.

Ten years ago, a designer might spend days producing wireframes for a new feature, carefully crafting each screen, annotating every interaction, and defending each decision in reviews. Much of the designer’s perceived value lived in the artefacts themselves.

Today, that same feature can be scaffolded in an afternoon with AI support. But here’s what hasn’t changed — the hard conversations.

The UX designer still has to ask:

- Who is this actually for?

- What problem are we solving, and for whom?

- What happens when this fails?

- Who might this unintentionally exclude or disadvantage?

In practice, I’ve seen senior designers spend less time inside design tools and more time facilitating workshops, synthesising messy inputs, mediating between stakeholders, and protecting user needs when trade-offs arise.

Conclusion: How To Prepare Right Now

Don’t panic — practice.

Avoiding AI won’t preserve your relevance. Learning to use it thoughtfully will.

Start small:

- Explore Figma’s AI features.

- Use AI for ideation, not final decisions.

- Treat outputs as conversation starters, not answers.

Confidence comes from familiarity, not avoidance.

Invest In Human Skills

The most resilient designers will double down on:

- Psychology and behavioural science;

- Communication and facilitation;

- Ethics, accessibility, and inclusion;

- Strategic thinking and storytelling.

These skills compound over time, and they can’t be automated.

The Designer’s Responsibility In An AI-Accelerated World

There’s an uncomfortable implication in all of this that we don’t talk about enough: when AI makes it easier to design anything, designers become more accountable for what gets released into the world. Bad design used to be excused by constraints. Limited time, limited tools, limited data. Those excuses are disappearing. When AI removes friction from execution, the ethical and strategic responsibility lands squarely on human shoulders.

This is where UX designers can, and must, step up as stewards of quality, accessibility, and humanity in digital systems.

Final Thought

AI won’t take your job. But a designer who knows how to think critically, direct intelligently, and collaborate effectively with AI might take the job of a designer who doesn’t.

The future of UX is no less human. It’s more intentional than ever.

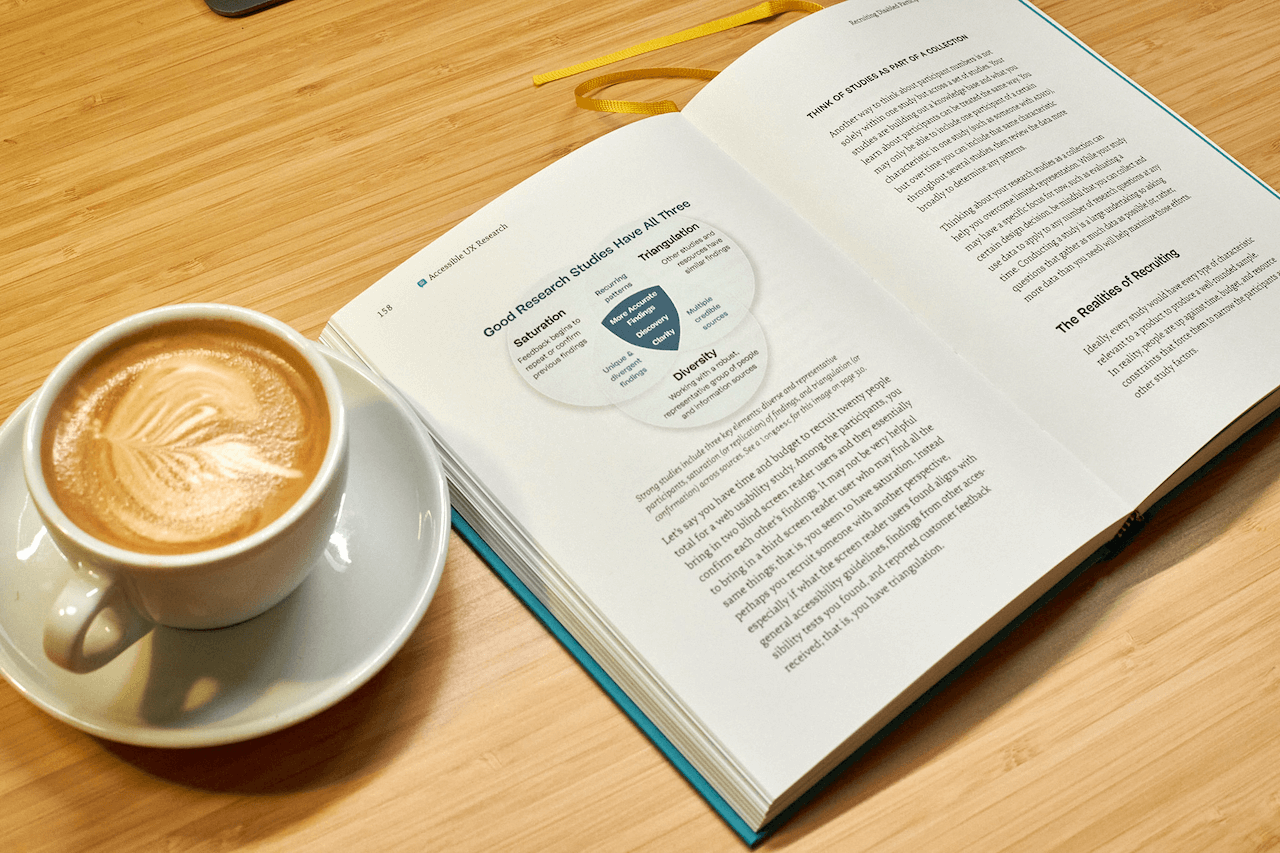

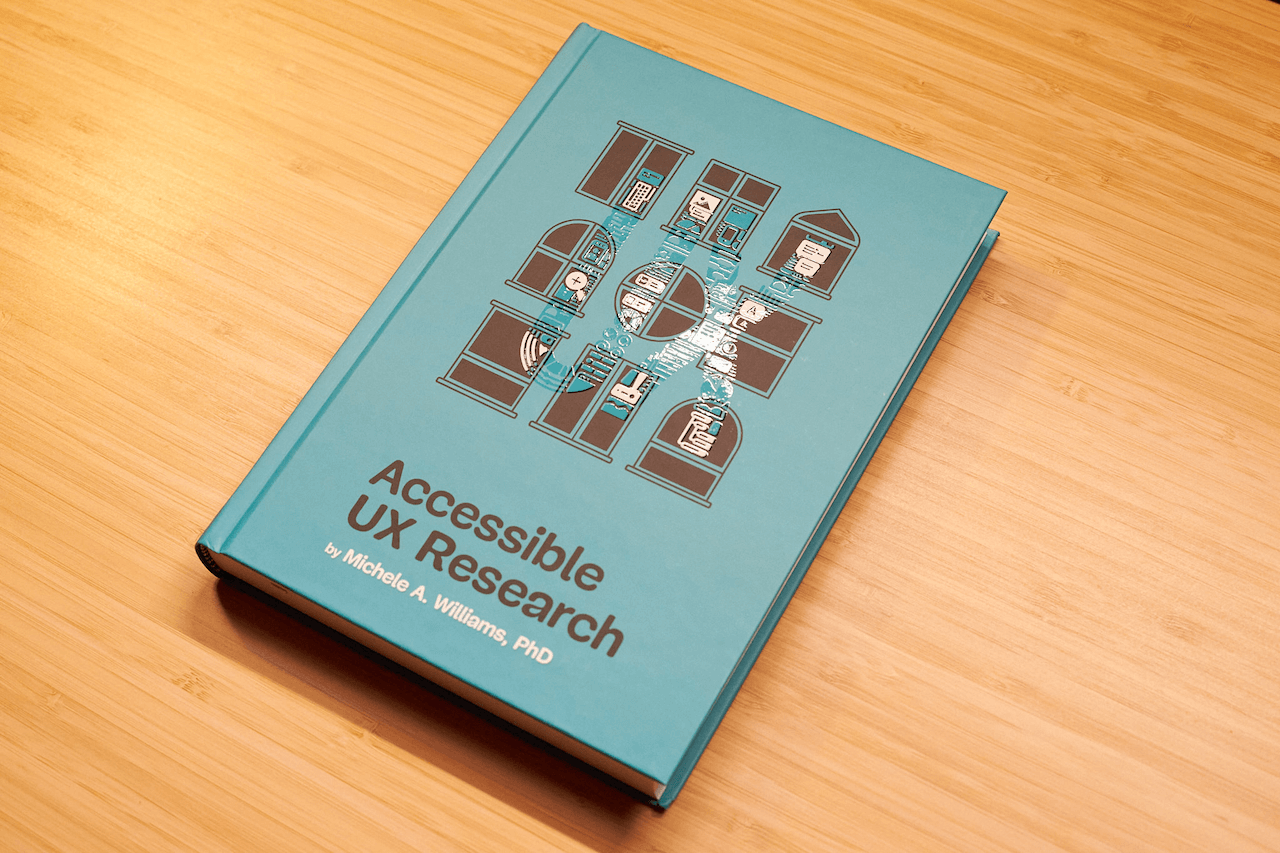

Now Shipping: Accessible UX Research, A New Smashing Book By Michele Williams


Ari Stiles

Good UX research is at the root of great products. It takes the guesswork out of our designs and helps us solve problems before they grow. One of the best ways to make our research effective is to keep it inclusive — testing with users with different needs and abilities, and using their feedback to build products that work for more people.

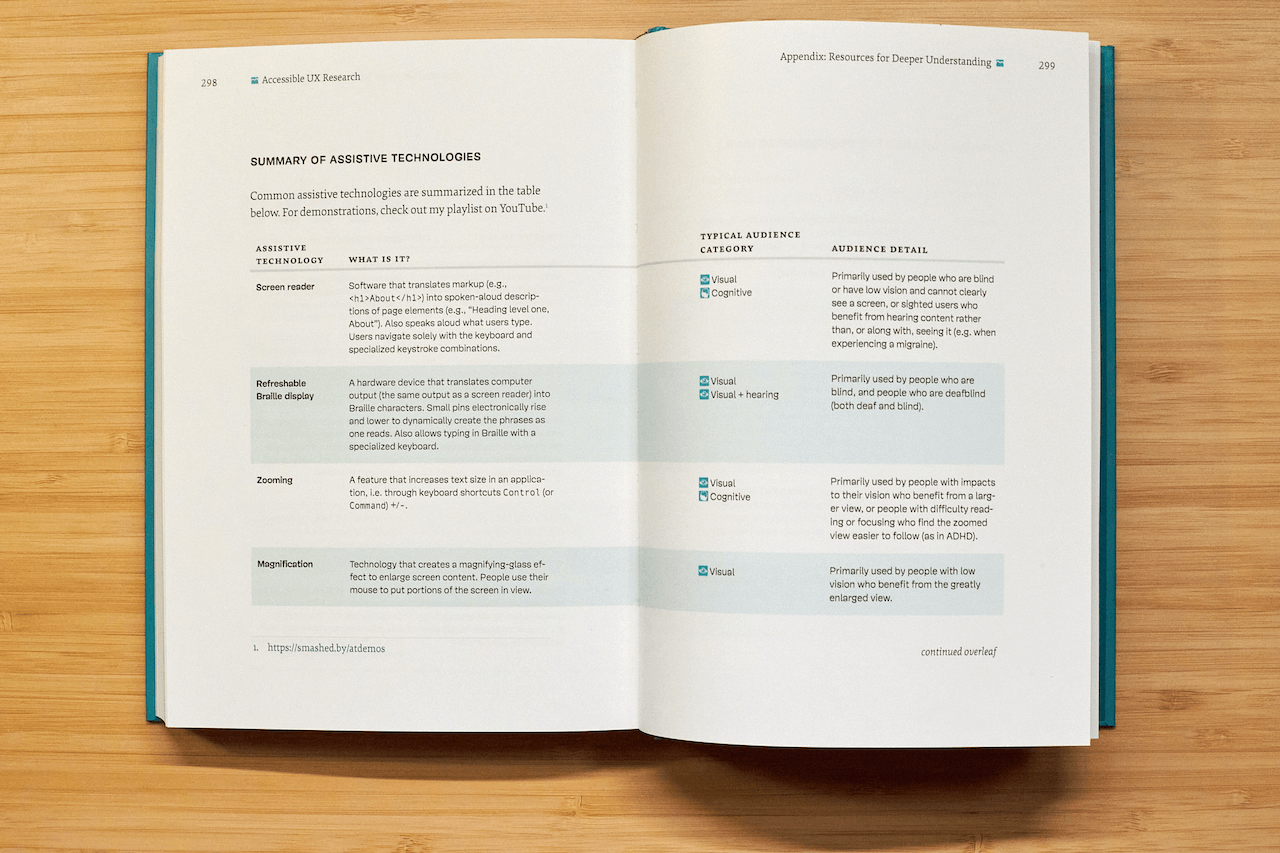

Our newest book, Accessible UX Research, can help you plan and execute great user research. Dr. Michele Williams draws from years of experience to build a clear, easy-to-follow roadmap. This book has something for everyone who wants to build digital products well:

- If you are just getting started with research, you will find helpful tips for making your research more inclusive. You will also get a primer on how to ask better questions, understand your own biases, and how to use your limited time and budget effectively.

- If you are an accessibility-minded professional, you will find a deep well of details on different assistive technologies, how to include them in your testing environment, and ways to record and share your results to create a real impact on the products you make.

- If you are a developer, a manager, or just someone who wants to understand how different abilities impact each user’s experience, you will find the history, clear descriptions, and cultural touchpoints you need in order to make sense of all the accessibility and inclusion recommendations you encounter.

eBook

Free!

DRM-free, of course. ePUB, Kindle, PDF.

Included with your Smashing Membership.

Download PDF, ePUB, Kindle.

Thanks for being smashing! ❤️

About The Book

The book isn’t a checklist for you to complete as a part of your accessibility work. It’s a practical guide to inclusive UX research, from start to finish. If you’ve ever felt unsure how to include disabled participants, or worried about “getting it wrong,” this book is for you. You’ll get clear, practical strategies to make your research more inclusive, effective, and reliable.

Inside, you’ll learn how to:

- Plan research that includes disabled participants from the start,

- Recruit participants with disabilities,

- Facilitate sessions that work for a range of access needs,

- Ask better questions and avoid unintentionally biased research methods,

- Build trust and confidence in your team around accessibility and inclusion.

The book also challenges common assumptions about disability and urges readers to rethink what inclusion really means in UX research and beyond. Let’s move beyond compliance and start doing research that reflects the full diversity of your users. Whether you’re in industry or academia, this book gives you the tools — and the mindset — to make it happen.

324 pages. Written by Dr. Michele A. Williams. Cover art by Espen Brunborg. Download a free sample (PDF, 2.3MB) or get the book right away.

Please note: We’ve found a way to get printed books to our US customers! After several months of dealing with customs and tariff issues, we are happy to announce that all of our books — including this brand-new one — are once again shipping worldwide.

Contents

In Accessible UX Research, Michele Williams takes you on a deep dive into the real world of UX research, with a roadmap for including users with different abilities and needs.

1. Disability Mindset

For inclusive research to succeed, we must first confront our mindset about disability, typically influenced by ableism.

2. Diversity of Disability

Accessibility is not solely about blind screen reader users; disability categories help us unpack and process the diversity of disabled users.

3. Disability in the Stages of UX Research

Disabled participants can and should be part of every research phase: formative, prototype, and summative.

4. Recruiting Disabled Participants

Recruiting disabled participants is not always easy, but that simply means we need to learn strategies on where to look.

5. Designing Your Research

While our goal is to influence accessible products, our research execution must also be accessible.

6. Facilitating An Accessible Study

Preparation and communication with your participants can ensure your study logistics run smoothly.

7. Analyzing and Reporting with Accuracy and Impact

How you communicate your findings is just as important as gathering them in the first place, so prepare to be a storyteller, educator, and advocate.

8. Disability in the UX Research Field

Inclusion isn’t just for research participants, it’s important for our colleagues as well, as explained by blind UX Researcher Dr. Cynthia Bennett.

Appendix

In the appendix, you’ll find helpful summaries and tips to optimize your testing environment and your interactions with participants.

About the Author

Dr. Michele A. Williams is owner of M.A.W. Consulting, LLC – Making Accessibility Work. Her 20+ years of experience include influencing top tech companies as a Senior User Experience (UX) Researcher and Accessibility Specialist and obtaining a PhD in Human-Centered Computing focused on accessibility. An international speaker, published academic author, and patented inventor, she is passionate about educating and advising on technology that does not exclude disabled users.

Testimonials

“Accessible UX Research stands as a vital and necessary resource. In addressing disability at the User Experience Research layer, it helps to set an equal and equitable tone for products and features that resonates through the rest of the creation process. The book provides a solid framework for all aspects of conducting research efforts, including not only process considerations, but also importantly the mindset required to approach the work.

This is the book I wish I had when I was first getting started with my accessibility journey. It is a gift, and I feel so fortunate that Michele has chosen to share it with us all.”

Eric Bailey, Accessibility Advocate

“User research in accessibility is non-negotiable for actually meeting users’ needs, and this book is a critical piece in the puzzle of actually doing and integrating that research into accessibility work day to day.”

Devon Pershing, Author of The Accessibility Operations Guidebook

“Our decisions as developers and designers are often based on recommendations, assumptions, and biases. Usually, this doesn’t work, because checking off lists or working solely from our own perspective can never truly represent the depth of human experience. Michele’s book provides you with the strategies you need to conduct UX research with diverse groups of people, challenge your assumptions, and create truly great products.”

Manuel Matuzović, Author of the Web Accessibility Cookbook

“This book is a vital resource on inclusive research. Michele Williams expertly breaks down key concepts, guiding readers through disability models, language, and etiquette. A strong focus on real-world application equips readers to conduct impactful, inclusive research sessions. By emphasizing diverse perspectives and proactive inclusion, the book makes a compelling case for accessibility as a core principle rather than an afterthought. It is a must-read for researchers, product-makers, and advocates!”

Anna E. Cook, Accessibility and Inclusive Design Specialist

Technical Details

- ISBN: 978-3-910835-03-0 (print)

- Quality hardcover, stitched binding, ribbon page marker.

- Free worldwide airmail shipping from Germany.

- eBook available for download as PDF, ePUB, and Amazon Kindle.

Community Matters ❤️

Producing a book takes quite a bit of time, and we couldn’t pull it off without the support of our wonderful community. A huge shout-out to Smashing Members for the kind, ongoing support. The eBook is and always will be free for Smashing Members. Plus, Members get a friendly discount when purchasing their printed copy. Just sayin’! 😉

More Smashing Books & Goodies

Promoting best practices and providing you with practical tips to master your daily coding and design challenges has always been (and will be) at the core of everything we do at Smashing.

In the past few years, we were very lucky to have worked together with some talented, caring people from the web community to publish their wealth of experience as printed books that stand the test of time. Trine, Heather, and Steven are three of these people. Have you checked out their books already?

The Ethical Design Handbook

A practical guide on ethical design for digital products.

Understanding Privacy

Everything you need to know to put your users first and make a better web.

Touch Design for Mobile Interfaces

Learn how touchscreen devices really work — and how people really use them.

Setting And Persisting Color Scheme Preferences With CSS And A “Touch” Of JavaScript


Henry Bley-Vroman

Many modern websites give users the power to set a site-specific color scheme preference. A basic implementation is straightforward with JavaScript: listen for when a user changes a checkbox or clicks a button, toggle a class (or attribute) on the <body> element in response, and write the styles for that class to override the design with a different color scheme.

CSS’s new :has() pseudo-class, supported by major browsers since December 2023, opens many doors for front-end developers. I’m especially excited about leveraging it to modify UI in response to user interaction without JavaScript. Where previously we have used JavaScript to toggle classes or attributes (or to set styles directly), we can now pair :has() selectors with HTML’s native interactive elements.

Supporting a color scheme preference, like “Dark Mode,” is a great use case. We can use a <select> element anywhere that toggles color schemes based on the selected <option> — no JavaScript needed, save for a sprinkle to save the user’s choice, which we’ll get to further in.

Respecting System Preferences

First, we’ll support a user’s system-wide color scheme preferences by adopting a “Light Mode”-first approach. In other words, we start with a light color scheme by default and swap it out for a dark color scheme for users who prefer it.

The prefers-color-scheme media feature detects the user’s system preference. Wrap “dark” styles in a prefers-color-scheme: dark media query.

```css
selector {
  /* light styles */

  @media (prefers-color-scheme: dark) {
    /* dark styles */
  }
}
```

Next, set the color-scheme property to match the preferred color scheme. Setting color-scheme: dark switches the browser into its built-in dark mode, which includes a black default background, white default text, “dark” styles for scrollbars, and other elements that are difficult to target with CSS, and more. I’m using CSS variables to hint that the value is dynamic (and because I like the browser developer tools experience), but plain color-scheme: light and color-scheme: dark would work fine.

```css
:root {
  /* light styles here */
  color-scheme: var(--color-scheme, light);

  /* system preference is "dark" */
  @media (prefers-color-scheme: dark) {
    --color-scheme: dark;
    /* any additional dark styles here */
  }
}
```

Giving Users Control

Now, to support overriding the system preference, let users choose between light (default) and dark color schemes at the page level.

HTML has native elements for handling user interactions. Using one of those controls, rather than, say, a <div> nest, improves the chances that assistive tech users will have a good experience. I’ll use a <select> menu with options for “system,” “light,” and “dark.” A group of <input type="radio"> would work, too, if you wanted the options right on the surface instead of a dropdown menu.

```html
<select id="color-scheme">
  <option value="system" selected>System</option>
  <option value="light">Light</option>
  <option value="dark">Dark</option>
</select>
```

Before CSS gained :has(), responding to the user’s selected <option> required JavaScript, for example, setting an event listener on the <select> to toggle a class or attribute on <html> or <body>.

But now that we have :has(), we can do this with CSS alone! You’ll avoid spending any of your performance budget on a dark mode script, plus the control will work even for users who have disabled JavaScript. And any “no-JS” folks on the project will be satisfied.

What we need is a selector that applies to the page when it :has() a select menu with a particular [value]:checked. Let’s translate that into CSS:

```css
:root:has(select option[value="dark"]:checked)
```

We’re defaulting to a light color scheme, so it’s enough to account for two possible dark color scheme scenarios:

- The page-level color preference is “system,” and the system-level preference is “dark.”

- The page-level color preference is “dark”.

The first one is a page-preference-aware iteration of our prefers-color-scheme: dark case. A “dark” system-level preference is no longer enough to warrant dark styles; we need both a “dark” system-level preference and a page-level preference set to follow the system. We’ll wrap the dark scheme styles inside the prefers-color-scheme media query with the :has() selector we just wrote:

```css
:root {
  /* light styles here */
  color-scheme: var(--color-scheme, light);

  /* page preference is "system", and system preference is "dark" */
  @media (prefers-color-scheme: dark) {
    &:has(#color-scheme option[value="system"]:checked) {
      --color-scheme: dark;
      /* any additional dark styles, again */
    }
  }
}
```

Notice that I’m using CSS Nesting in that last snippet. Baseline 2023 has it pegged as “Newly available across major browsers,” which means support is good, but at the time of writing, support on Android browsers not included in Baseline’s core browser set is limited. You can get the same result without nesting by writing out the full :root:has(…) selector inside the media query.

For the second dark mode scenario, we’ll use nearly the exact same :has() selector as we did for the first scenario, this time checking whether the “dark” option, rather than the “system” option, is selected:

```css
:root {
  /* light styles */
  color-scheme: var(--color-scheme, light);

  /* page preference is "dark" */
  &:has(#color-scheme option[value="dark"]:checked) {
    --color-scheme: dark;
    /* any additional dark styles */
  }
}
```

Together, the two scenarios look like this:

```css
:root {
  /* light styles */
  color-scheme: var(--color-scheme, light);

  /* page preference is "dark" */
  &:has(#color-scheme option[value="dark"]:checked) {
    --color-scheme: dark;
    /* any additional dark styles */
  }

  /* page preference is "system", and system preference is "dark" */
  @media (prefers-color-scheme: dark) {
    &:has(#color-scheme option[value="system"]:checked) {
      --color-scheme: dark;
      /* any additional dark styles, again */
    }
  }
}
```

Now the page’s styles respond to both changes in users’ system settings and user interaction with the page’s color preference UI, all with CSS!

But the colors change instantly. Let’s smooth the transition.

Respecting Motion Preferences

Instantaneous style changes can feel inelegant in some cases, and this is one of them. So, let’s apply a CSS transition on the :root to “ease” the switch between color schemes. (Transition styles at the :root will cascade down to the rest of the page, which may necessitate adding transition: none or other transition overrides.)

Note that the CSS color-scheme property does not support transitions.

```css
:root {
  transition-duration: 200ms;
  transition-property: /* properties changed by your light/dark styles */;
}
```

Not all users will consider the addition of a transition a welcome improvement. Querying the prefers-reduced-motion media feature allows us to account for a user’s motion preferences. If the value is set to reduce, then we remove the transition-duration to eliminate unwanted motion.

```css
:root {
  transition-duration: 200ms;
  transition-property: /* properties changed by your light/dark styles */;

  @media screen and (prefers-reduced-motion: reduce) {
    /* "none" is not a valid duration; a zero duration disables the transition */
    transition-duration: 0s;
  }
}
```

Transitions can also produce poor user experiences on devices that render changes slowly, for example, ones with e-ink screens. We can extend our “no motion condition” media query to account for that with the update media feature. If its value is slow, then we remove the transition-duration.

```css
:root {
  transition-duration: 200ms;
  transition-property: /* properties changed by your light/dark styles */;

  @media screen and (prefers-reduced-motion: reduce), (update: slow) {
    transition-duration: 0s;
  }
}
```

Let’s try out what we have so far in the following demo. Notice that, to work around color-scheme’s lack of transition support, I’ve explicitly styled the properties that should transition during theme changes.

See the Pen [CSS-only theme switcher (requires :has()) [forked]](https://codepen.io/smashingmag/pen/YzMVQja) by Henry.

Not bad! But what happens if the user refreshes the page or navigates to another page? The reload effectively wipes out the user’s form selection, forcing the user to re-make the selection. That may be acceptable in some contexts, but it’s likely to go against user expectations. Let’s bring in JavaScript for a touch of progressive enhancement in the form of…

Persistence

Here’s a vanilla JavaScript implementation. It’s a naive starting point — the functions and variables aren’t encapsulated but are instead properties on window. You’ll want to adapt this in a way that fits your site’s conventions, framework, library, and so on.

When the user changes the color scheme from the <select> menu, we’ll store the selected <option> value in a new localStorage item called "preferredColorScheme". On subsequent page loads, we’ll check localStorage for the "preferredColorScheme" item. If it exists, and if its value corresponds to one of the form control options, we restore the user’s preference by programmatically updating the menu selection.

```javascript
/*
 * If a color scheme preference was previously stored,
 * select the corresponding option in the color scheme preference UI
 * unless it is already selected.
 */
function restoreColorSchemePreference() {
  const colorScheme = localStorage.getItem(colorSchemeStorageItemName);

  if (!colorScheme) {
    // There is no stored preference to restore
    return;
  }

  // Quote and escape the stored value so unexpected characters cannot break the selector
  const option = colorSchemeSelectorEl.querySelector(`[value="${CSS.escape(colorScheme)}"]`);

  if (!option) {
    // The stored preference has no corresponding option in the UI.
    localStorage.removeItem(colorSchemeStorageItemName);
    return;
  }

  if (option.selected) {
    // The stored preference's corresponding menu option is already selected
    return;
  }

  option.selected = true;
}

/*
 * Store an event target's value in localStorage under colorSchemeStorageItemName
 */
function storeColorSchemePreference({ target }) {
  const colorScheme = target.querySelector(":checked").value;
  localStorage.setItem(colorSchemeStorageItemName, colorScheme);
}

// The name under which the user's color scheme preference will be stored.
const colorSchemeStorageItemName = "preferredColorScheme";

// The color scheme preference front-end UI.
const colorSchemeSelectorEl = document.querySelector("#color-scheme");

if (colorSchemeSelectorEl) {
  restoreColorSchemePreference();

  // When the user changes their color scheme preference via the UI,
  // store the new preference.
  colorSchemeSelectorEl.addEventListener("input", storeColorSchemePreference);
}
```

Let’s try that out. Open this demo (perhaps in a new window), use the menu to change the color scheme, and then refresh the page to see your preference persist:

See the Pen [CSS-only theme switcher (requires :has()) with JS persistence [forked]](https://codepen.io/smashingmag/pen/GRLmEXX) by Henry.

If your system color scheme preference is “light” and you set the demo’s color scheme to “dark,” you may get the light mode styles for a moment immediately after reloading the page before the dark mode styles kick in. That’s because CodePen loads its own JavaScript before the demo’s scripts. That is out of my control, but you can take care to improve this persistence on your projects.

Persistence Performance Considerations

Where things can get tricky is restoring the user’s preference immediately after the page loads. If the color scheme preference in localStorage is different from the user’s system-level color scheme preference, it’s possible the user will see the system preference color scheme before the page-level preference is restored. (Users who have selected the “System” option will never get that flash; neither will those whose system settings match their selected option in the form control.)

If your implementation is showing a “flash of inaccurate color theme”, where is the problem happening? Generally speaking, the earlier the scripts appear on the page, the lower the risk. The “best option” for you will depend on your specific stack, of course.

What About Browsers That Don’t Support :has()?

All major browsers support :has() today. Lean into modern platforms if you can. But if you do need to consider legacy browsers, like Internet Explorer, there are two directions you can go: either hide or remove the color scheme picker for those browsers or make heavier use of JavaScript.

If you consider color scheme support itself a progressive enhancement, you can entirely hide the selection UI in browsers that don’t support :has():

```css
@supports not selector(:has(body)) {
  @media (prefers-color-scheme: dark) {
    :root {
      /* dark styles here */
    }
  }

  #color-scheme {
    display: none;
  }
}
```

Otherwise, you’ll need to rely on a JavaScript solution not only for persistence but for the core functionality. Go back to that traditional event listener toggling a class or attribute.
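As a rough illustration of that traditional approach, here is a minimal sketch. The `data-color-scheme` attribute, the `applyColorScheme` helper, and the option values `system`, `light`, and `dark` are assumptions made for this example rather than names taken from the demo, and your stylesheet would need matching attribute selectors.

```javascript
// Hypothetical fallback for browsers without :has() support.
// Assumes the menu's option values are "system", "light", and "dark".
function applyColorScheme(rootEl, scheme) {
  if (scheme === "light" || scheme === "dark") {
    // Style hooks such as :root[data-color-scheme="dark"] can target this.
    rootEl.setAttribute("data-color-scheme", scheme);
  } else {
    // "system" (or anything unexpected): let prefers-color-scheme decide.
    rootEl.removeAttribute("data-color-scheme");
  }
}

// Only wire up the listener when running in a browser.
if (typeof document !== "undefined") {
  const selectEl = document.querySelector("#color-scheme");

  if (selectEl) {
    // Apply the current selection once, then again on every change.
    applyColorScheme(document.documentElement, selectEl.value);
    selectEl.addEventListener("input", ({ target }) => {
      applyColorScheme(document.documentElement, target.value);
    });
  }
}
```

Keeping the attribute logic in its own function means the same helper can run both on page load and on every menu change.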

The CSS-Tricks “Complete Guide to Dark Mode” details several alternative approaches that you might consider as well when working on the legacy side of things.

Designing For Agentic AI: Practical UX Patterns For Control, Consent, And Accountability


Victor Yocco

In the first part of this series, we established the fundamental shift from generative to agentic artificial intelligence. We explored why this leap from suggesting to acting demands a new psychological and methodological toolkit for UX researchers, product managers, and leaders. We defined a taxonomy of agentic behaviors, from suggesting to acting autonomously, outlined the essential research methods, defined the risks of agentic sludge, and established the accountability metrics required to navigate this new territory. We covered the what and the why.

Now, we move from the foundational to the functional. This article provides the how: the concrete design patterns, operational frameworks, and organizational practices essential for building agentic systems that are not only powerful but also transparent, controllable, and worthy of user trust. If our research is the diagnostic tool, these patterns are the treatment plan. They are the practical mechanisms through which we can give users a palpable sense of control, even as we grant AI unprecedented autonomy. The goal is to create an experience where autonomy feels like a privilege granted by the user, not a right seized by the system.

Core UX Patterns For Agentic Systems

Designing for agentic AI is designing for a relationship. This relationship, like any successful partnership, must be built on clear communication, mutual understanding, and established boundaries.

To manage the shift from suggestion to action, we utilize six patterns that follow the functional lifecycle of an agentic interaction:

- Pre-Action (Establishing Intent)

The Intent Preview and Autonomy Dial ensure the user defines the plan and the agent’s boundaries before anything happens.

- In-Action (Providing Context)

The Explainable Rationale and Confidence Signal maintain transparency while the agent works, showing the “why” and “how certain.”

- Post-Action (Safety and Recovery)

The Action Audit & Undo and Escalation Pathway provide a safety net for errors or high-ambiguity moments.

Below, we will cover each pattern in detail, including recommended success metrics. The targets are representative benchmarks based on industry standards; adjust them to your specific domain risk.

1. The Intent Preview: Clarifying the What and How

This pattern is the conversational equivalent of saying, “Here’s what I’m about to do. Are you okay with that?” It’s the foundational moment of seeking consent in the user-agent relationship.

Before an agent takes any significant action, the user must have a clear, unambiguous understanding of what is about to happen. The Intent Preview, or Plan Summary, establishes informed consent. It is the conversational pause before action, transforming a black box of autonomous processes into a transparent, reviewable plan.

Psychological Underpinning

Presenting a plan before action reduces cognitive load and eliminates surprise, giving users a moment to verify the agent truly understands their intent.

Anatomy of an Effective Intent Preview:

- Clarity and Conciseness

The preview must be immediately digestible. It should summarize the primary actions and outcomes in plain language, avoiding technical jargon. For instance, instead of “Executing API call to cancel_booking(id: 4A7B),” it should state, “Cancel flight AA123 to San Francisco.”

- Sequential Steps

For multi-step operations, the preview should outline the key phases. This reveals the agent’s logic and allows users to spot potential issues in the proposed sequence.

- Clear User Actions

The preview is a decision point, not just a notification. It must be accompanied by a clear set of choices. It’s a moment of intentional friction, a ‘speed bump’ in the process designed to ensure the user is making a conscious choice, particularly for irreversible or high-stakes actions.

Let’s revisit our travel assistant scenario from the first part of this series. We use this proactive assistant to illustrate how an agent handles a flight cancellation. The agent has detected a flight cancellation and has formulated a recovery plan.

The Intent Preview would look something like this:

Proposed Plan for Your Trip Disruption

I’ve detected that your 10:05 AM flight has been canceled. Here’s what I plan to do:

- Cancel Flight UA456

Process refund and confirm cancellation details.

- Rebook on Flight DL789

Book a confirmed seat on a 2:30 PM non-stop flight, as this is the next available non-stop flight with a confirmed seat.

- Update Hotel Reservation

Notify the Marriott that you will be arriving late.

- Email Updated Itinerary

Send the new flight and hotel details to you and your assistant, Jane Doe.

[ Proceed with this Plan ] [ Edit Plan ] [ Handle it Myself ]

This preview is effective because it provides a complete picture, from cancellation to communication, and offers three distinct paths forward: full consent (Proceed), a desire for modification (Edit Plan), or a full override (Handle it Myself). This multifaceted control is the bedrock of trust.
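To make the structure of such a preview concrete, here is a minimal sketch of how the plan and its three paths forward might be modeled. The object shape, the `renderIntentPreview` and `resolveDecision` helpers, and the `next` values are all hypothetical names chosen for this example, not part of any real agent framework.

```javascript
// Hypothetical plan shape; field names are illustrative only.
const plan = {
  title: "Proposed Plan for Your Trip Disruption",
  trigger: "I've detected that your 10:05 AM flight has been canceled.",
  steps: [
    { label: "Cancel Flight UA456", detail: "Process refund and confirm cancellation details." },
    { label: "Rebook on Flight DL789", detail: "Book a confirmed seat on a 2:30 PM non-stop flight." },
    { label: "Update Hotel Reservation", detail: "Notify the Marriott that you will be arriving late." },
    { label: "Email Updated Itinerary", detail: "Send the new flight and hotel details to you and your assistant." },
  ],
};

// The three paths forward offered by the preview.
const DECISIONS = Object.freeze({
  PROCEED: "proceed",
  EDIT: "edit",
  HANDLE_MYSELF: "handle_myself",
});

// Summarize the plan in plain language, one numbered line per step.
function renderIntentPreview(previewPlan) {
  const stepLines = previewPlan.steps.map(
    (step, index) => `${index + 1}. ${step.label}: ${step.detail}`
  );
  return [previewPlan.title, previewPlan.trigger, ...stepLines].join("\n");
}

// Nothing executes unless the user explicitly chooses to proceed.
function resolveDecision(previewPlan, decision) {
  switch (decision) {
    case DECISIONS.PROCEED:
      return { execute: true, steps: previewPlan.steps };
    case DECISIONS.EDIT:
      return { execute: false, next: "open_plan_editor" };
    case DECISIONS.HANDLE_MYSELF:
      return { execute: false, next: "hand_off_to_user" };
    default:
      throw new Error(`Unknown decision: ${decision}`);
  }
}
```

The point of the sketch is that execution is gated on an explicit decision: only the "proceed" branch returns steps to run, while the other two route control back to the user.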

When to Prioritize This Pattern

This pattern is non-negotiable for any action that is irreversible (e.g., deleting user data), involves a financial transaction of any amount, shares information with other people or systems, or makes a significant change that a user cannot easily undo.
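These criteria can be expressed as a simple gate in front of the agent’s executor. The sketch below assumes a hypothetical action descriptor whose flag names (`irreversible`, `amount`, `sharesDataExternally`, `hardToUndo`) are invented for illustration.

```javascript
// Hypothetical action descriptor; the flag names are assumptions for this sketch.
function requiresIntentPreview(action) {
  return Boolean(
    action.irreversible ||          // e.g., deleting user data
    action.amount > 0 ||            // a financial transaction of any amount
    action.sharesDataExternally ||  // shares information with other people or systems
    action.hardToUndo               // a significant change the user cannot easily undo
  );
}

// Example: a one-dollar payment still requires a preview.
const needsPreview = requiresIntentPreview({ amount: 1 });
```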

Risk of Omission

Without this, users feel ambushed by the agent’s actions and will disable the feature to regain control.

Metrics for Success:

- Acceptance Ratio

Plans Accepted Without Edit / Total Plans Displayed. Target > 85%.

- Override Frequency

Total “Handle it Myself” Clicks / Total Plans Displayed. A rate > 10% triggers a model review.

- Recall Accuracy

Percentage of test participants who can correctly list the plan’s steps 10 seconds after the preview is hidden.
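The first two metrics are simple ratios over event counts. A minimal sketch, assuming your analytics can supply the three counts below (the function and parameter names are hypothetical):

```javascript
// Thresholds taken from the targets above; adjust them to your domain risk.
const ACCEPTANCE_TARGET = 0.85;
const OVERRIDE_REVIEW_THRESHOLD = 0.10;

function intentPreviewMetrics({ plansDisplayed, acceptedWithoutEdit, handleItMyselfClicks }) {
  if (plansDisplayed <= 0) {
    // No previews were shown, so the ratios are undefined.
    return null;
  }

  const acceptanceRatio = acceptedWithoutEdit / plansDisplayed;
  const overrideFrequency = handleItMyselfClicks / plansDisplayed;

  return {
    acceptanceRatio,
    overrideFrequency,
    meetsAcceptanceTarget: acceptanceRatio > ACCEPTANCE_TARGET,
    needsModelReview: overrideFrequency > OVERRIDE_REVIEW_THRESHOLD,
  };
}

// Example: 200 previews shown, 176 accepted as-is, 12 overridden.
const metrics = intentPreviewMetrics({
  plansDisplayed: 200,
  acceptedWithoutEdit: 176,
  handleItMyselfClicks: 12,
});
```

In this example the acceptance ratio is 176 / 200 = 88% and the override frequency is 12 / 200 = 6%, so the acceptance target is met and no model review is triggered.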

Applying This to High-Stakes Domains

While travel plans are a relatable baseline, this pattern becomes indispensable in complex, high-stakes environments where an error costs more than one traveler’s inconvenience. Many of us work in settings where a wrong decision may cause a system outage, put a patient’s safety at risk, or lead to any number of other catastrophic outcomes that unreliable technology can introduce.

Consider a DevOps Release Agent tasked with managing cloud infrastructure. In this context, the Intent Preview acts as a safety barrier against accidental downtime.

In this interface, the specific terminology (Drain Traffic, Rollback) replaces generalities, and the actions are binary and impactful. The user authorizes a major operational shift based on the agent’s logic, rather than approving a suggestion.

2. The Autonomy Dial: Calibrating Trust With Progressive Authorization

Every healthy relationship has boundaries. The Autonomy Dial is how the user establishes them with their agent, defining what they are comfortable with the agent handling on its own.

Trust is not a binary switch; it’s a spectrum. A user might trust an agent to handle low-stakes tasks autonomously but demand full confirmation for high-stakes decisions. The Autonomy Dial, a form of progressive authorization, allows users to set their preferred level of agent independence, making them active participants in defining the relationship.

Psychological Underpinning

Allowing users to tune the agent’s autonomy grants them a locus of control, letting them match the system’s behavior to their personal risk tolerance.

Implementation

This can be implemented as a simple, clear setting within the application, ideally on a per-task-type basis. Using the taxonomy from our first article, the settings could be:

- Observe & Suggest

I want to be notified of opportunities or issues, but the agent will never propose a plan.

- Plan & Propose

The agent can create plans, but I must review every one before any action is taken.

- Act with Confirmation

For familiar tasks, the agent can prepare actions, and I will give a final go/no-go confirmation.

- Act Autonomously

For pre-approved tasks (e.g., disputing charges under $50), the agent can act independently and notify me after the fact.

An email assistant, for example, could have a separate autonomy dial for scheduling meetings versus sending emails on the user’s behalf. This granularity is key, as it reflects the nuanced reality of a user’s trust.
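A per-task dial like this can be sketched as a small lookup that maps each task type to one of the four levels. The level identifiers, the task names, the returned step names, and the choice to default unknown tasks to the most conservative level are all assumptions made for this example.

```javascript
// The four levels from the list above, from least to most autonomous.
const AUTONOMY_LEVELS = Object.freeze([
  "observe_suggest",
  "plan_propose",
  "act_with_confirmation",
  "act_autonomously",
]);

// Hypothetical per-task settings for an email assistant.
const dial = {
  scheduleMeeting: "act_autonomously",
  sendEmail: "plan_propose",
};

// Decide what the agent may do next for a given task type.
function nextStepFor(dialSettings, taskType) {
  // Assumption: task types without a setting get the most conservative level.
  const level = dialSettings[taskType] ?? AUTONOMY_LEVELS[0];

  switch (level) {
    case "observe_suggest":
      return "notify_only";
    case "plan_propose":
      return "show_plan_for_review";
    case "act_with_confirmation":
      return "prepare_and_ask_go_no_go";
    case "act_autonomously":
      return "act_then_notify";
    default:
      throw new Error(`Unknown autonomy level: ${level}`);
  }
}
```

Because the dial is keyed by task type, dialing back one kind of task after a failure leaves the user’s other permissions untouched.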

When to Prioritize This Pattern

Prioritize this in systems where tasks vary widely in risk and personal preference (e.g., financial management tools, communication platforms). It is essential for onboarding, allowing users to start with low autonomy and increase it as their confidence grows.

Risk of Omission

Without this, users who experience a single failure will abandon the agent completely rather than simply dialing back its permissions.

Metrics for Success:

- Trust Density

Percentage breakdown of users per setting (e.g., 20% Suggest, 50% Confirm, 30% Auto).

- Setting Churn

Number of Setting Changes / Total Active Users per month. High churn indicates trust volatility.
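Both metrics can be computed from basic usage data. A minimal sketch, assuming you have one current setting per active user and a monthly count of setting changes (the function names and setting labels are hypothetical):

```javascript
// Percentage of users on each setting, given one setting string per active user.
function trustDensity(userSettings) {
  const counts = {};
  for (const setting of userSettings) {
    counts[setting] = (counts[setting] || 0) + 1;
  }

  const density = {};
  for (const [setting, count] of Object.entries(counts)) {
    density[setting] = (count * 100) / userSettings.length;
  }
  return density;
}

// Setting changes per active user for the month.
function settingChurn(settingChangesThisMonth, activeUsers) {
  return activeUsers > 0 ? settingChangesThisMonth / activeUsers : 0;
}

// Example: ten active users who made four setting changes this month.
const density = trustDensity([
  "suggest", "suggest",
  "confirm", "confirm", "confirm", "confirm", "confirm",
  "auto", "auto", "auto",
]);
const churn = settingChurn(4, 10);
```

With this sample, the breakdown is 20% Suggest, 50% Confirm, and 30% Auto, and the churn is 0.4 changes per active user.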

3. The Explainable Rationale: Answering Why?

After taking an action, a good partner explains their reasoning. This pattern is the open communication that follows an action, answering Why? before it’s even asked. “I did that because you’ve told me in the past that you prefer X.”